Key Takeaways

- The number of ratings a review average is based on is key information for users in product lists

- For many users, a product with few reviews isn’t worth exploring

- Providing the number of reviews alongside the ratings average gives users the information they need to make a decision about whether to explore a product in more detail or not

Updated with new data in 2025

Multiple rounds of Baymard’s large-scale testing have made it clear that user ratings and reviews are critical to users’ purchasing decisions (e.g., see our article on responding to negative reviews or designing the ratings distribution summary).

However, it was also observed in testing that only displaying user ratings averages — the “star” average — in list items was not enough.

Indeed, without knowledge of how many users had rated products, a substantial number of participants distrusted the validity of user ratings averages.

It’s therefore key to display not only the review average but also the number of ratings a review average is based on in product list items.

Since we first documented this issue in 2015, our e-commerce UX benchmark has shown steady improvement: the share of sites with this issue fell from 25% in 2015 to only 5% in 2022.

However, while the vast majority of sites now display the number of ratings an average is based on in product list items, a few sites still don’t.

This leaves their users with an even worse experience, because displaying the number of ratings alongside the review average in product list items has by now become an e-commerce convention. Bucking this convention will surprise and dismay users who have come to expect this information.

In this article we’ll discuss our Premium research findings related to ratings in product list items:

- How only showing the star average hampers users scanning product lists

- How including the number of reviews the average is based on factors into users’ perception of the average

The Star Average for Reviews Is Less Useful When Presented Alone

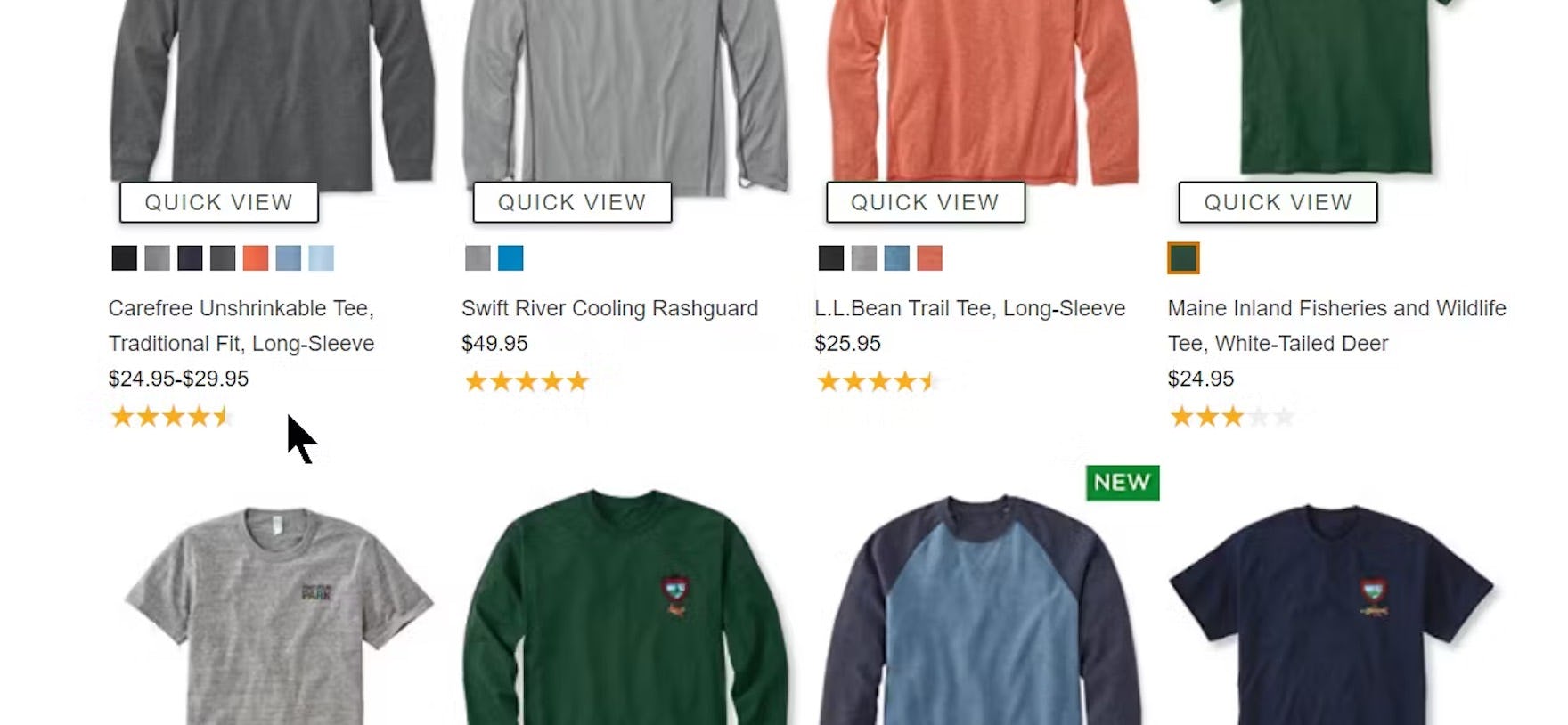

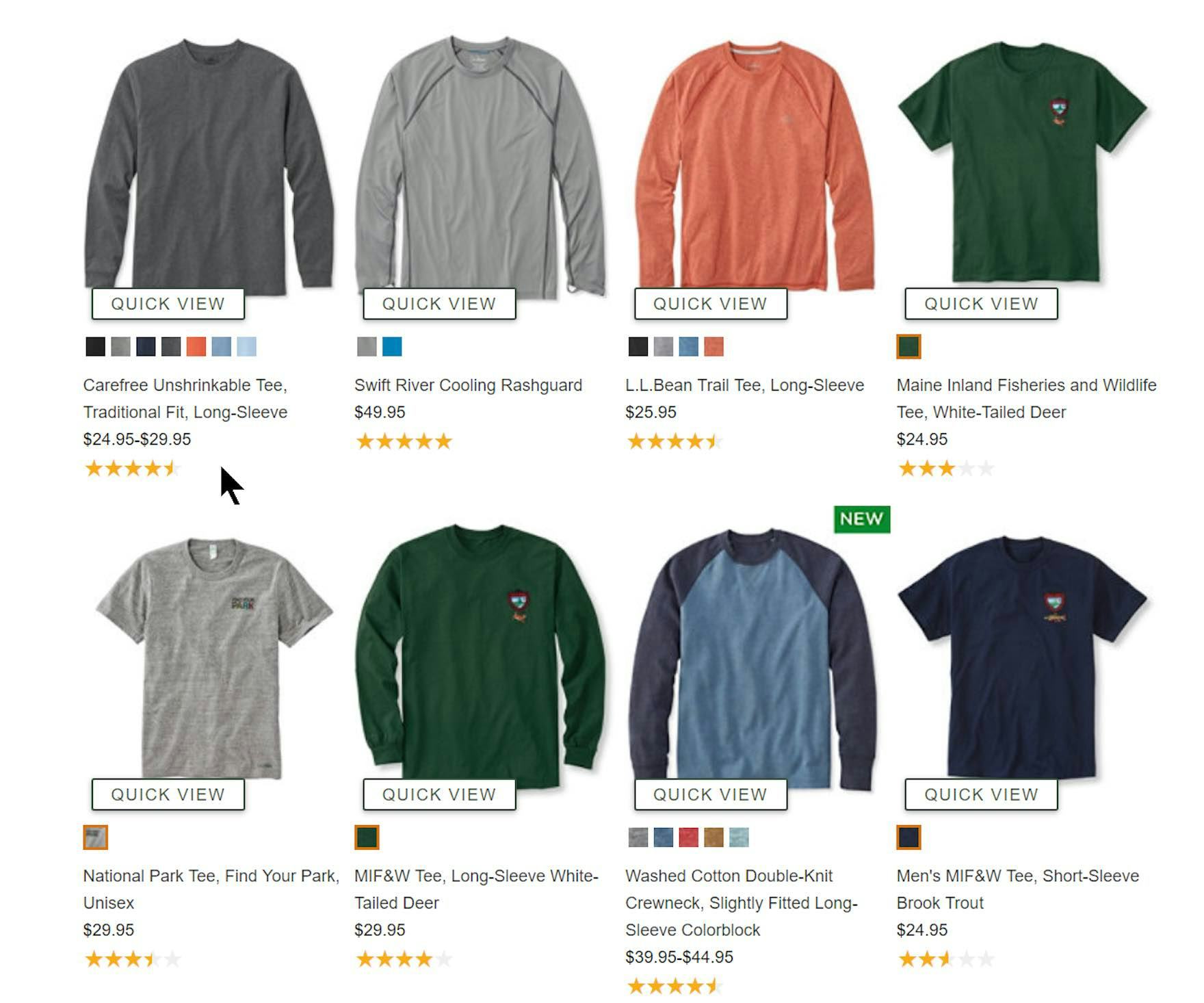

The number of ratings is not shown in L.L. Bean’s list items. The first item in the product list (first image) has a high number of ratings (1,387) as seen on the product details page (second image), but the absence of the count from the product list takes an important decision-making element away from users.

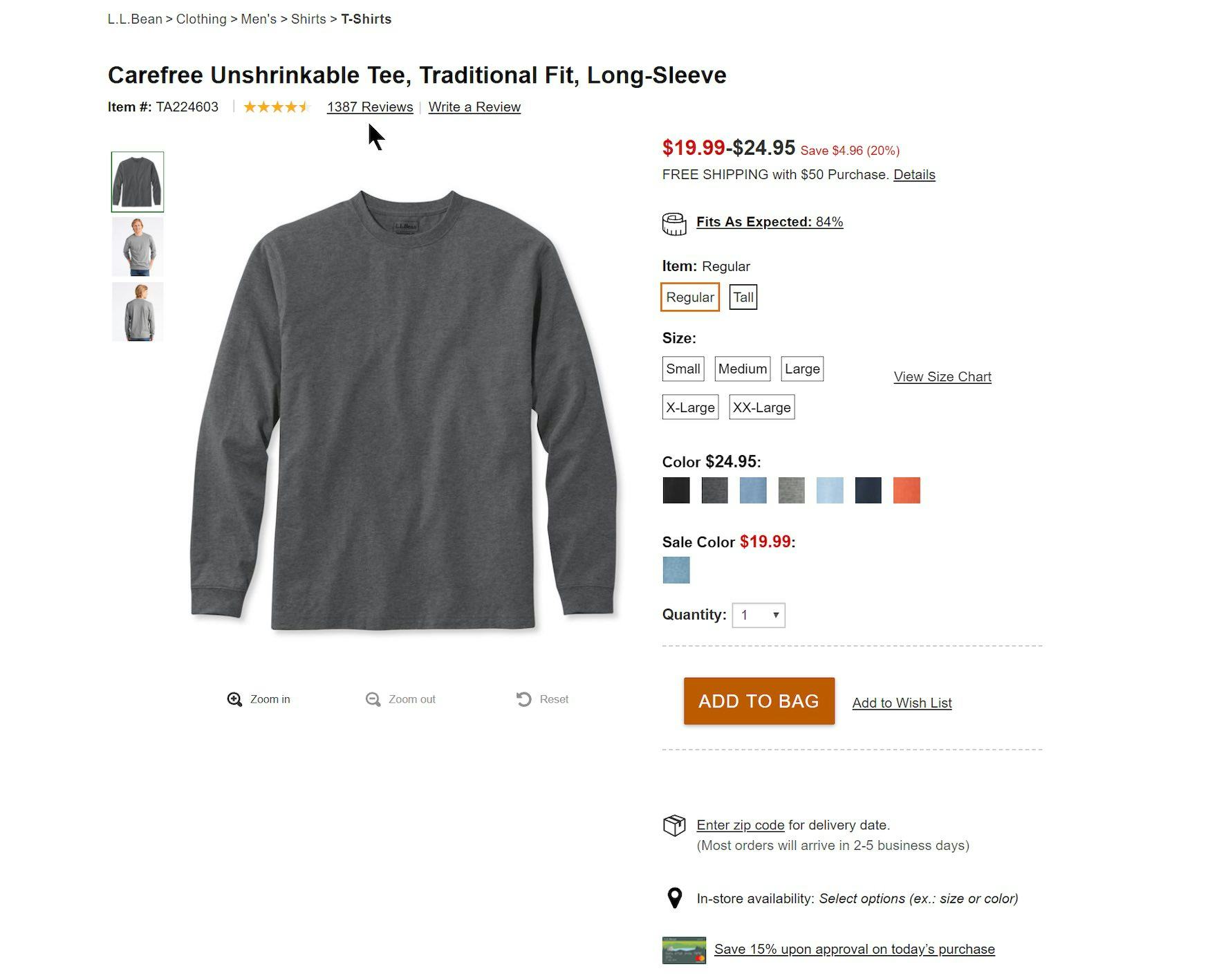

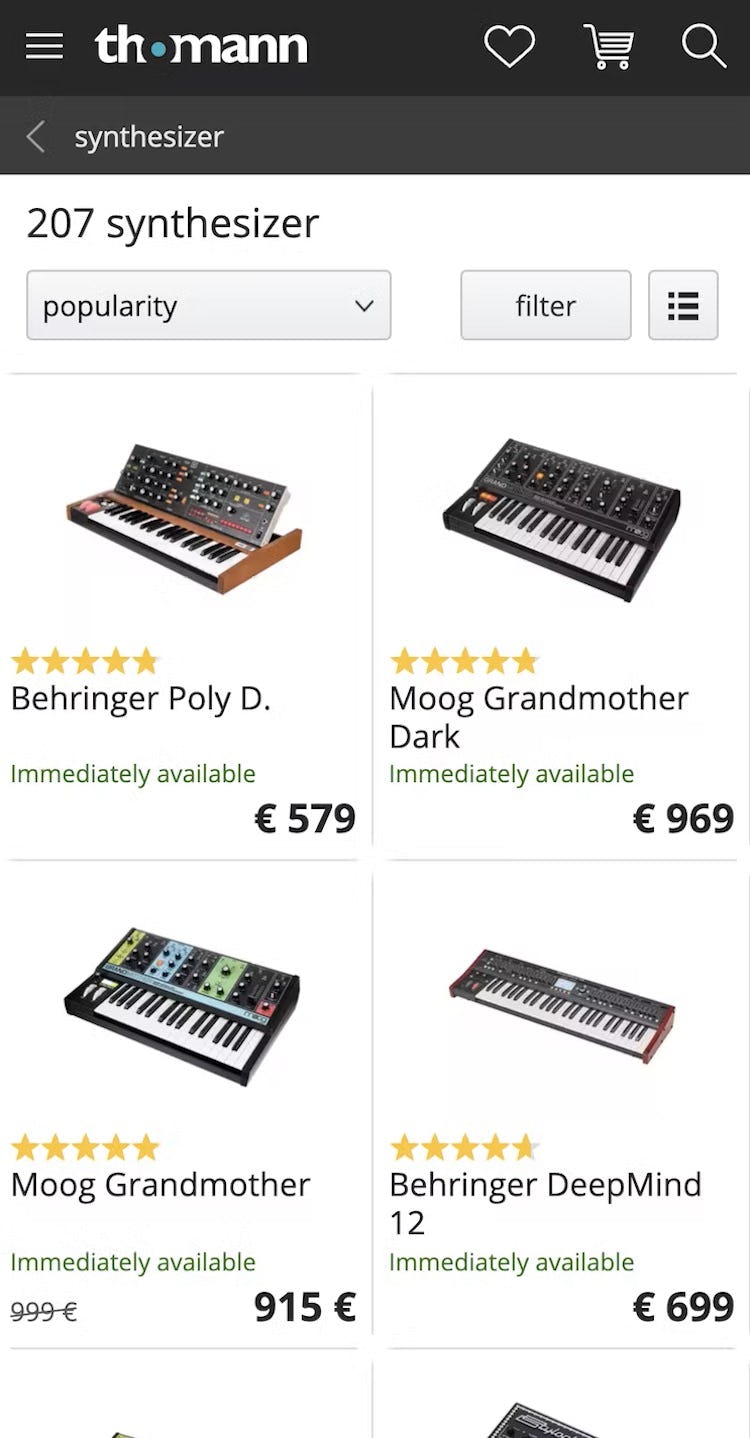

Without any indication of the number of user ratings, users on both Marks & Spencer (first image) and Thomann (second image) will find it harder to assess items. Without this information, similarly rated items of interest can’t be compared without visiting product pages.

If only the star average is provided in product list items, users will be unable to accurately judge how reliable the average is.

After all, a 4.5-star average based on 5 reviews is quite different from a 4.5-star average based on 1,000 reviews.
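To make the difference concrete, the following is a minimal arithmetic sketch (not part of the test data) of how a single additional 1-star rating shifts each of those two averages. The helper name `averageAfterNewRating` is hypothetical and used only for illustration.

```typescript
// Hypothetical helper: recompute an average after one more rating arrives.
function averageAfterNewRating(
  currentAverage: number,
  ratingCount: number,
  newRating: number
): number {
  return (currentAverage * ratingCount + newRating) / (ratingCount + 1);
}

// A 4.5-star average based on 5 ratings vs. one based on 1,000 ratings,
// each receiving one additional 1-star rating.
const fewRatings = averageAfterNewRating(4.5, 5, 1);
const manyRatings = averageAfterNewRating(4.5, 1000, 1);

console.log(fewRatings.toFixed(2));  // 3.92
console.log(manyRatings.toFixed(2)); // 4.50
```

With only 5 ratings, one unhappy customer pulls the average down by more than half a star, while the average based on 1,000 ratings barely moves. This is one way to see why users treat the count as a signal of how reliable the average is.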

If users choose an item in the product list based on a high ratings average, only to find on the product page that it has a low rating count, they could lose interest in the product and feel that the trip to the product page was a waste of time.

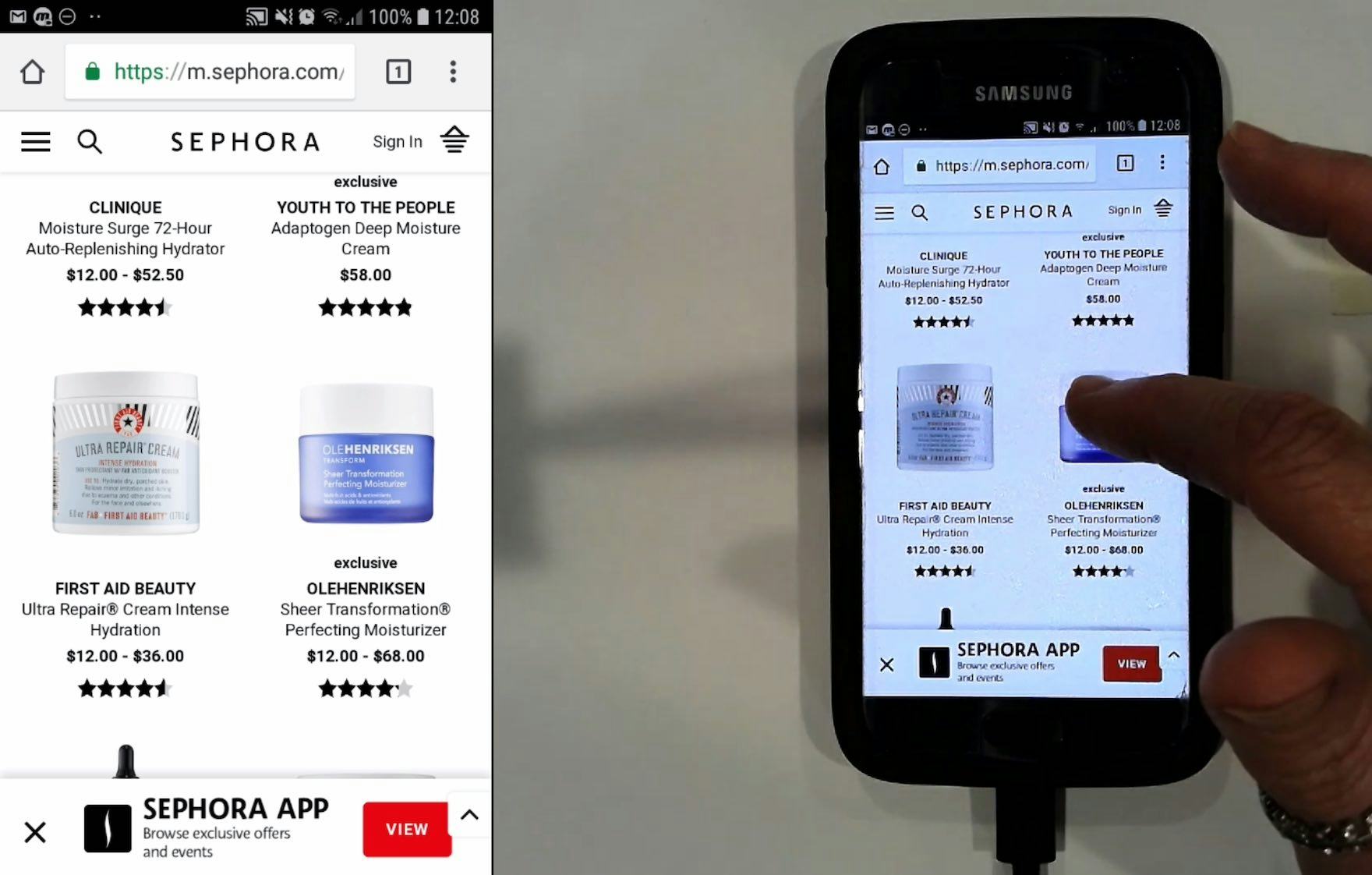

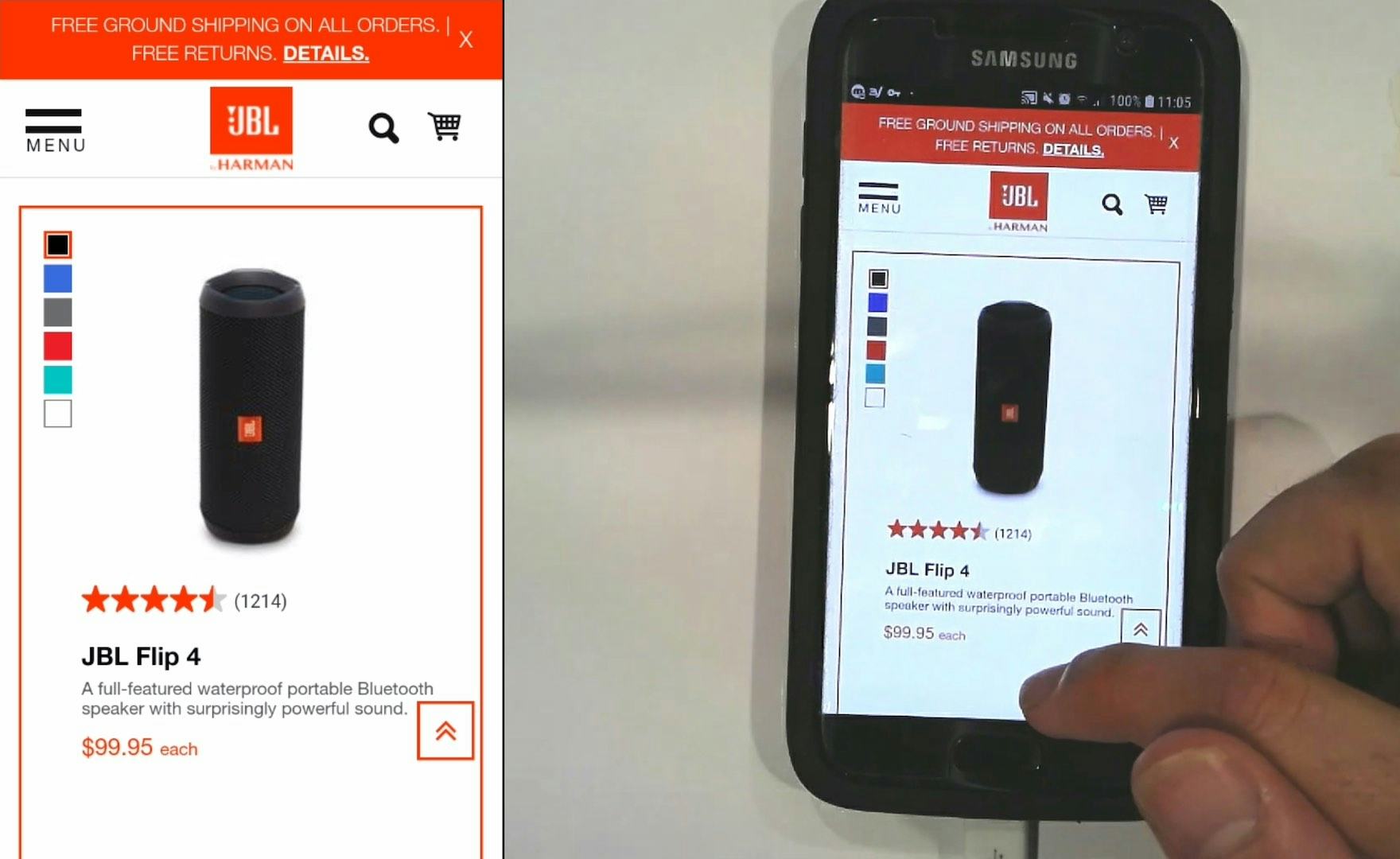

Without a count of ratings, it is hard for users on Sephora’s mobile site to compare ratings for items that have averages all in the range of 4 to 4.5.

Another consequence of only showing ratings averages in the product list is that it is often not easy to differentiate between items with closely matched ratings averages.

For example, if there are 10 items with ratings averages between 4 and 4.5, it is quite difficult for users to mentally rank them if they must rely only on the visual provided by the “star” average.

As a consequence, the ratings averages are less useful in comparing the popularity of similarly rated products.

Including the Number of Reviews along with the “Star” Average Gives Users a Full Picture

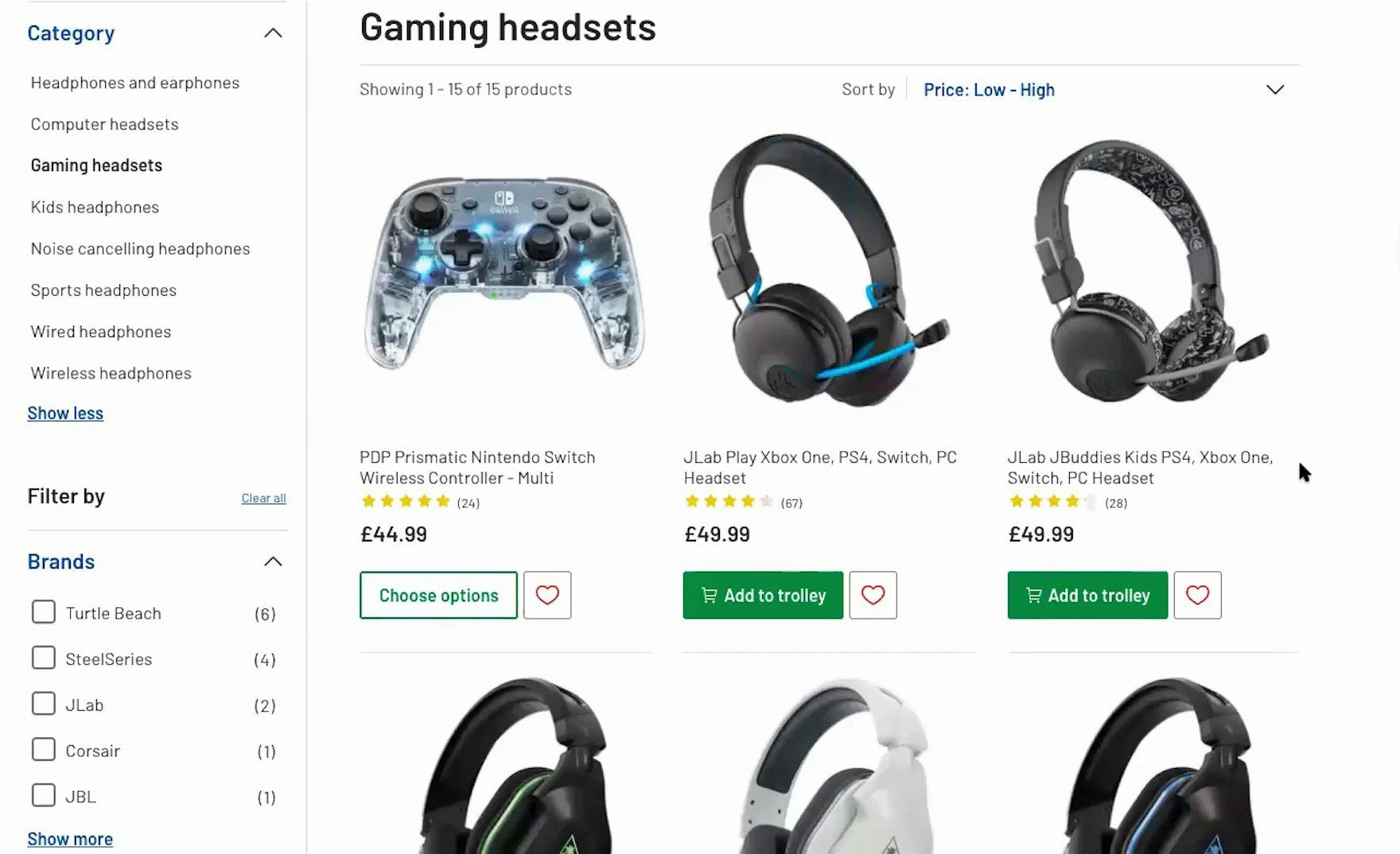

“So here I can see not only the review, but also the number of reviews. I don’t like when websites don’t include the number of reviews, because then if it has five stars, but it’s just one person — I’d rather have something that has, I dunno, 700 reviews and four stars because then I know people still buy it.” Being able to see the number of user ratings in list items, as seen on Argos UK, increases trust in both the ratings and the site.

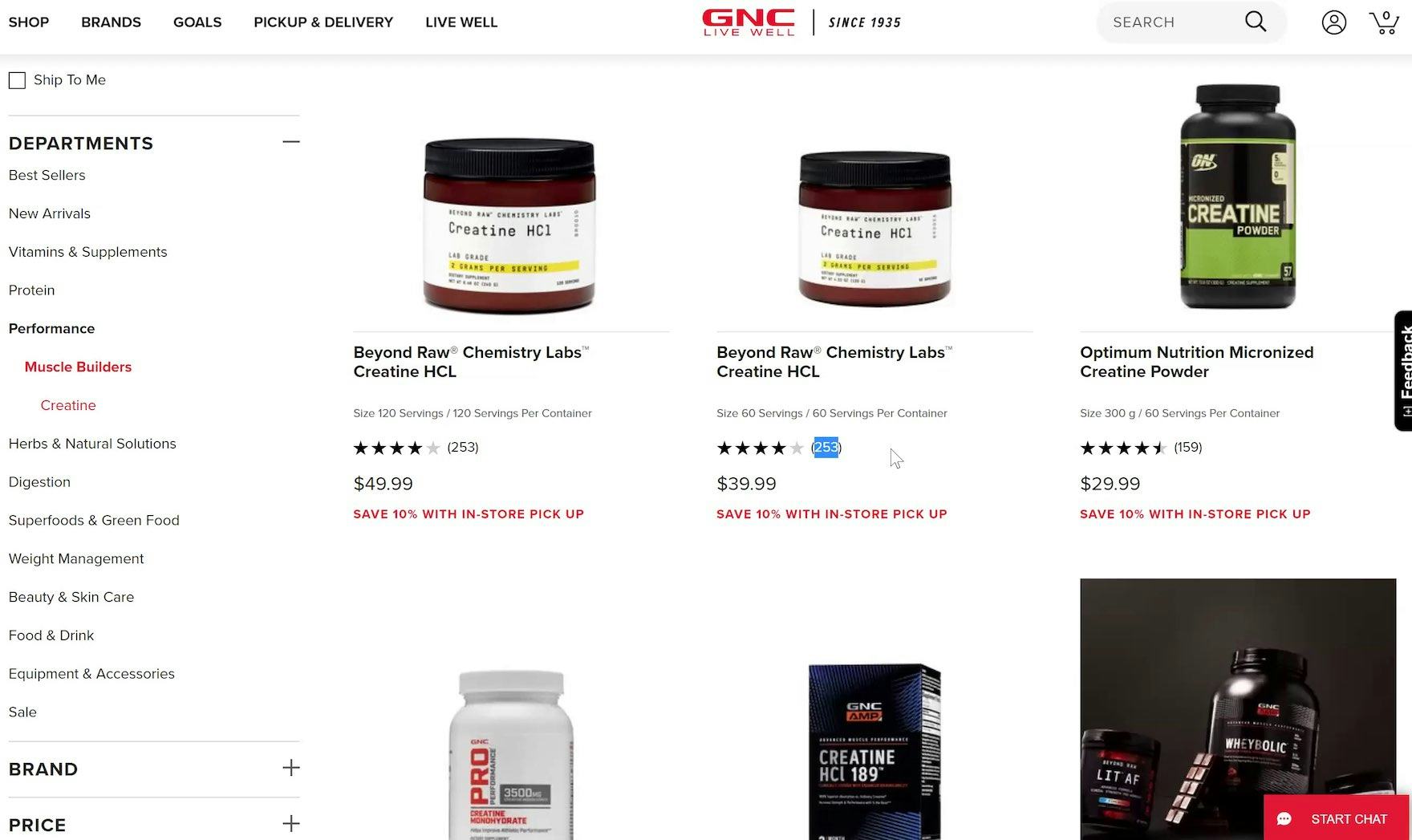

“Oh wow, and this has way more product reviews than the other websites I was looking at. I like that.” Prominent user ratings averages, along with the number of ratings, on GNC help users assess the ratings of others at a glance.

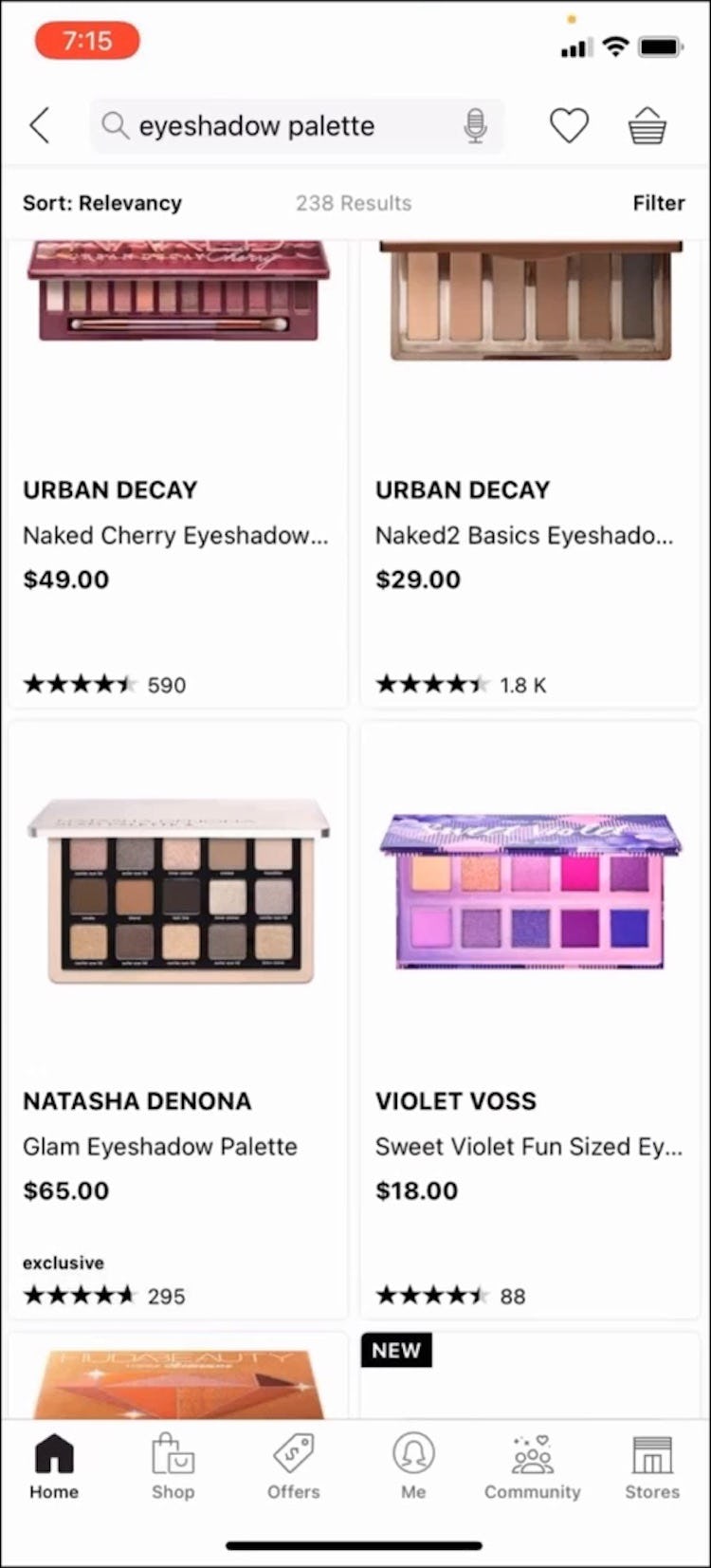

“The more people that are rating it makes me feel like a more credible site it is.” Showing the number of ratings can help boost the credibility of the site, such as JBL, particularly when the count is high, or if users are unfamiliar with a site.

It is therefore worth investing time and effort into increasing the number of ratings and reviews, and displaying the number of user ratings for each product in list items.

As one test participant stated, “So what I’m looking at is not just the price but also the star rating, with the number of reviews it has. So if it has a high star rating but has a low number of reviews, that’s not good.”

Moreover, to better understand how users perceive reviews, we at Baymard regularly conduct a survey on how the number of reviews for a product affects users’ perception of the ratings average.

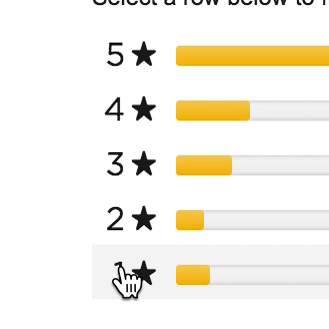

The results from our survey show a clear user bias toward strongly reviewed products with numerous ratings over products with perfect reviews based on just a few ratings. Methodology: each survey showed the respondents two list items (shown in the result graphs) and asked them to pick which one they would purchase. Price and product description were kept identical; the difference between the two list items was a combination of user rating average and the number of ratings. To avoid sequencing bias, the display sequence for the answer options was randomized for each respondent.

In this latest survey, results indicate that, as in our 4 previous surveys (over 5,170 respondents in total), nearly twice as many respondents favor a product with a 4.5-star average rating from 57 users over a product with a 5-star average rating from just 4 users.

These results indicate how important the number of ratings is to users — they clearly want and need to see the number of ratings alongside the average.

Not only do users judge the reliability of product ratings from the ratings count, but high numbers of ratings also positively affect the perceived credibility of the site.

In other words, users see safety in numbers, and not knowing the number of ratings while in the product list detracts from users’ confidence in the ratings average shown there.

Always Include the Number of Reviews an Average Is Based on in Product List Items

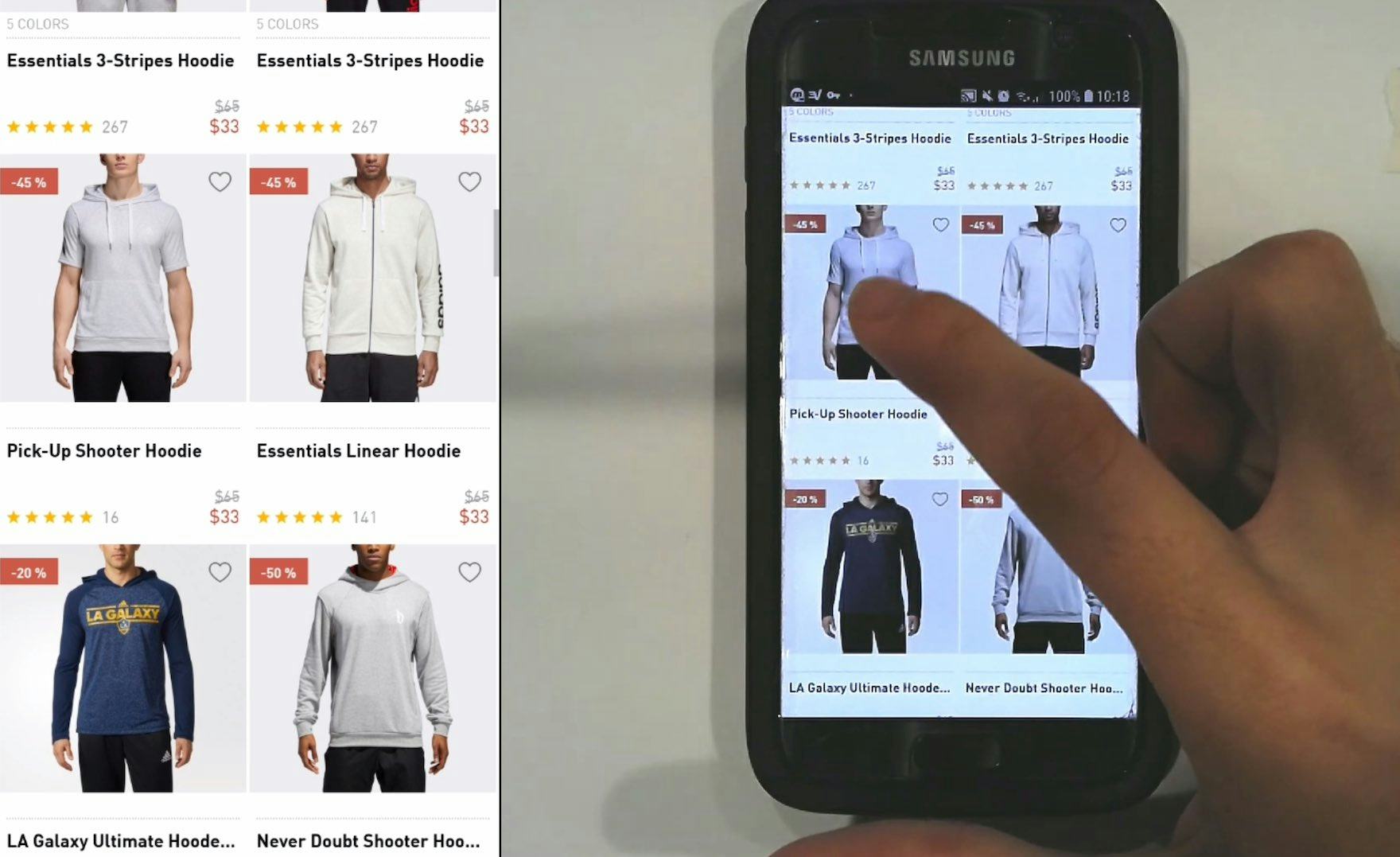

“It’s relative to the other ratings on the site. This has about a tenth the number of ratings as the item next to it — it does give me pause because there aren’t enough data points to have a clear image of the quality of the product.” While a relatively low count of user ratings might lead users to favor one product less than another, such as the count of 16 vs 141 for the lower 2 items here on Adidas, finding out at this point is better for users than having to do so by visiting multiple product pages.

Including the number of reviews a review average is based on has been a positive trend in e-commerce UX.

Over time, many sites have understood that this piece of information is critical to users making decisions in the product list on which products to explore in further detail.

Yet as more sites provide this information in the product list, a few laggards still don’t — risking users perceiving their product list information as insufficient or, worse, purposefully misleading.
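As a rough illustration of the guideline, the sketch below builds the text a list item could show next to the stars so that the average is never displayed without its count. The function name `formatRatingLabel`, the label wording, and the 4.5 average in the usage example are assumptions made for illustration; only the count of 1,387 comes from the L.L. Bean example earlier in this article.

```typescript
// Hypothetical helper, not taken from any site shown in this article.
// Builds the text shown next to the stars in a product list item.
function formatRatingLabel(average: number, ratingCount: number): string {
  if (ratingCount <= 0) {
    // Avoid showing an average that is based on nothing.
    return "No ratings yet";
  }
  // Add thousands separators so large counts are easy to scan.
  const formattedCount = new Intl.NumberFormat("en-US").format(ratingCount);
  const noun = ratingCount === 1 ? "rating" : "ratings";
  return `${average.toFixed(1)} out of 5 stars (${formattedCount} ${noun})`;
}

console.log(formatRatingLabel(4.5, 1387)); // 4.5 out of 5 stars (1,387 ratings)
console.log(formatRatingLabel(5, 1));      // 5.0 out of 5 stars (1 rating)
console.log(formatRatingLabel(0, 0));      // No ratings yet
```

Keeping the average and the count in one label means a list item cannot accidentally render one without the other. The exact wording and number formatting would need to follow each site’s own conventions.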
