Key Takeaways

- Our 2022 mobile UX benchmark includes 17,500+ UX performance ratings across 71 top-grossing e-commerce sites

- Compared to 2020, e-commerce mobile UX performance hasn’t changed drastically, with the average mobile site performance remaining “mediocre”

- Avoid the 15 common UX pitfalls identified in the article to begin improving your site’s mobile UX

At Baymard we’ve just completed a new e-commerce Mobile UX Benchmark.

The Mobile e-commerce UX benchmark (embedded below), based on our extensive Premium research findings, contains 17,500+ Mobile site elements that have been manually reviewed and scored by Baymard’s team of UX researchers.

Additionally, we’ve added 12,000+ worst and best practice mobile examples from the top-grossing e-commerce sites in the US and Europe (performance verified).

In this article, we’ll analyze this dataset to outline the current state of Mobile UX, and describe 15 common mobile UX pitfalls along with corresponding best practices applicable to most Mobile e-commerce sites.

The Mobile UX Benchmark Scatterplot

For this analysis, we’ve summarized the 17,500+ Mobile Usability Scores across 46 topics and plotted the 71 benchmarked mobile sites across these topics in the scatterplot above.

Each dot in the scatterplot represents the summarized UX score of one site across the guidelines within the respective topic of the Mobile e-commerce experience.

The overall Mobile e-commerce UX performance of each individual site is listed in the first row. The following rows are the UX performance breakdowns within the 46 topics that constitute the overall Mobile e-commerce performance.

The Current State of Mobile UX

As the scatterplot shows, the Mobile e-commerce UX performance for the average top-grossing US and European e-commerce site is “mediocre”.

Indeed, nearly all sites are in a tight cluster of “mediocre” (54%) and “decent” (37%).

That said, while there aren’t any standout performances, there are also very few “poor” experiences (9%).

The most noticeable change from the previous benchmark is the decrease in sites that perform “poor”, with the percentage dropping from 20% in 2020 to 9% in this year’s benchmark.

Yet the improvement is slight at best, as the percentage of “mediocre” sites increased from 42% to 54%, while the percentage of “decent” sites stayed largely unchanged (36% in 2020 vs. 37% in 2022).

Also, similar to the 2020 benchmark, no sites perform either “good” or “perfect”.

This indicates that there remains ample room for improvement when it comes to the e-commerce Mobile user experience.

In the following sections, we’ll provide a more detailed walkthrough of Mobile e-commerce UX performance and the competitive landscape, along with “missed opportunities” to be extra alert to.

In particular, we’ll discuss 15 general mobile UX pitfalls within 6 of the 46 Mobile UX topics, spanning 4 different themes:

- Mobile Homepage & Category: Main Navigation

- Mobile Search: Autocomplete

- Mobile Search: Results Logic & Guidance

- Mobile Checkout: Validation Errors & Data Persistence

- Mobile Sitewide Design: Sitewide Design & Interaction

- Mobile Sitewide Design: Touch Interfaces

(Note: these topics were chosen as they are the most interesting or the most suitable for discussion in an article. Premium members can access the full list by navigating to the Mobile study. If you’re interested in trying out a Premium subscription, head over to our Premium research page for more details.)

Mobile Homepage & Category: Main Navigation

The Mobile Main Navigation is the weakest topic within the Mobile Homepage & Category Navigation theme, with 50% of benchmarked sites performing “poor” or even outright “broken”, and only 33% performing “decent” or higher.

This is broadly because users struggle more to navigate and understand a site’s structure on mobile than on desktop, where Main Navigation performance is more evenly spread.

This indicates that there are potentially helpful design elements not being provided to mobile users.

In particular, there are 3 issues that sites commonly get wrong in the Mobile Main Navigation.

1) 90% of Mobile Sites Don’t Highlight the Current Scope in the Main Navigation

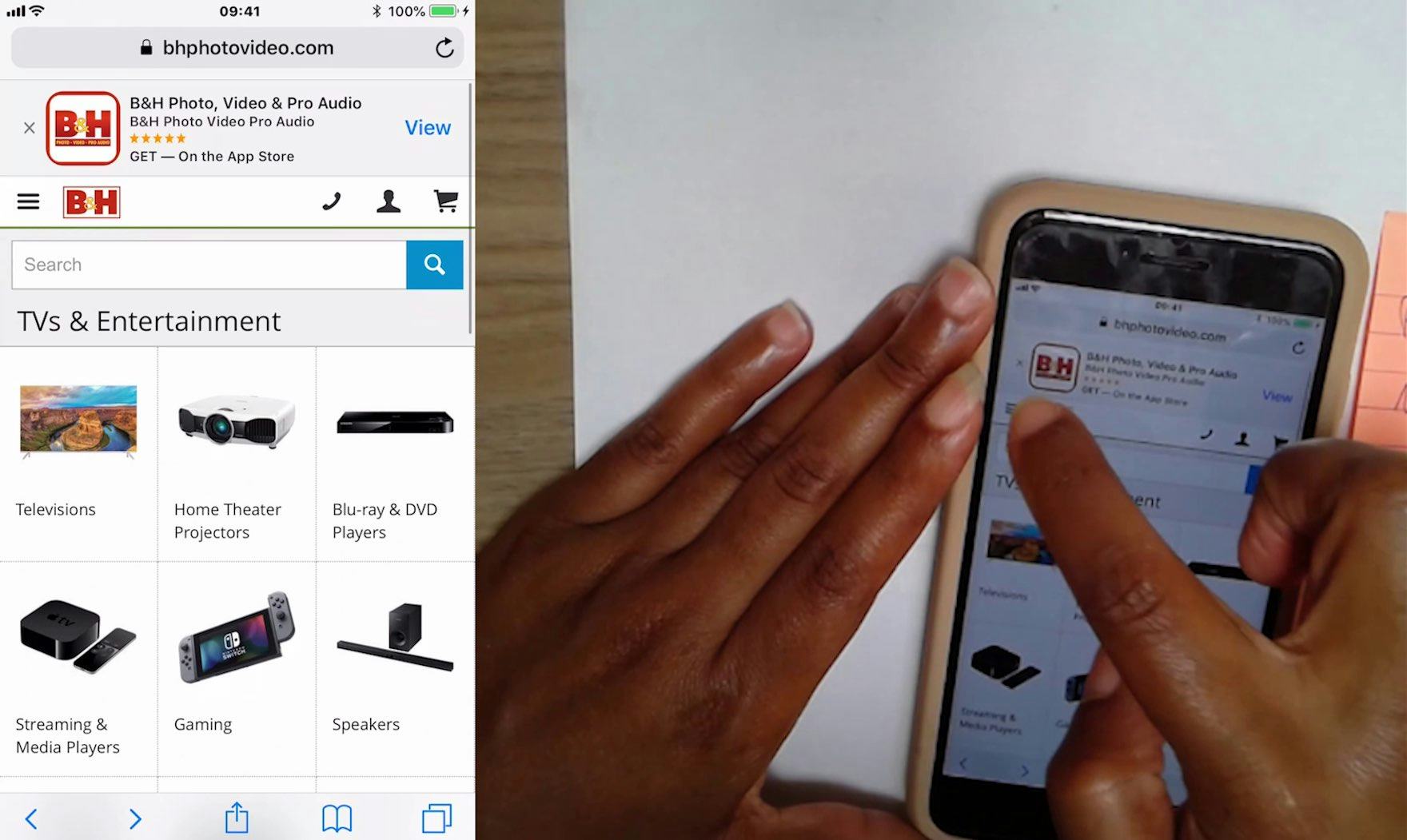

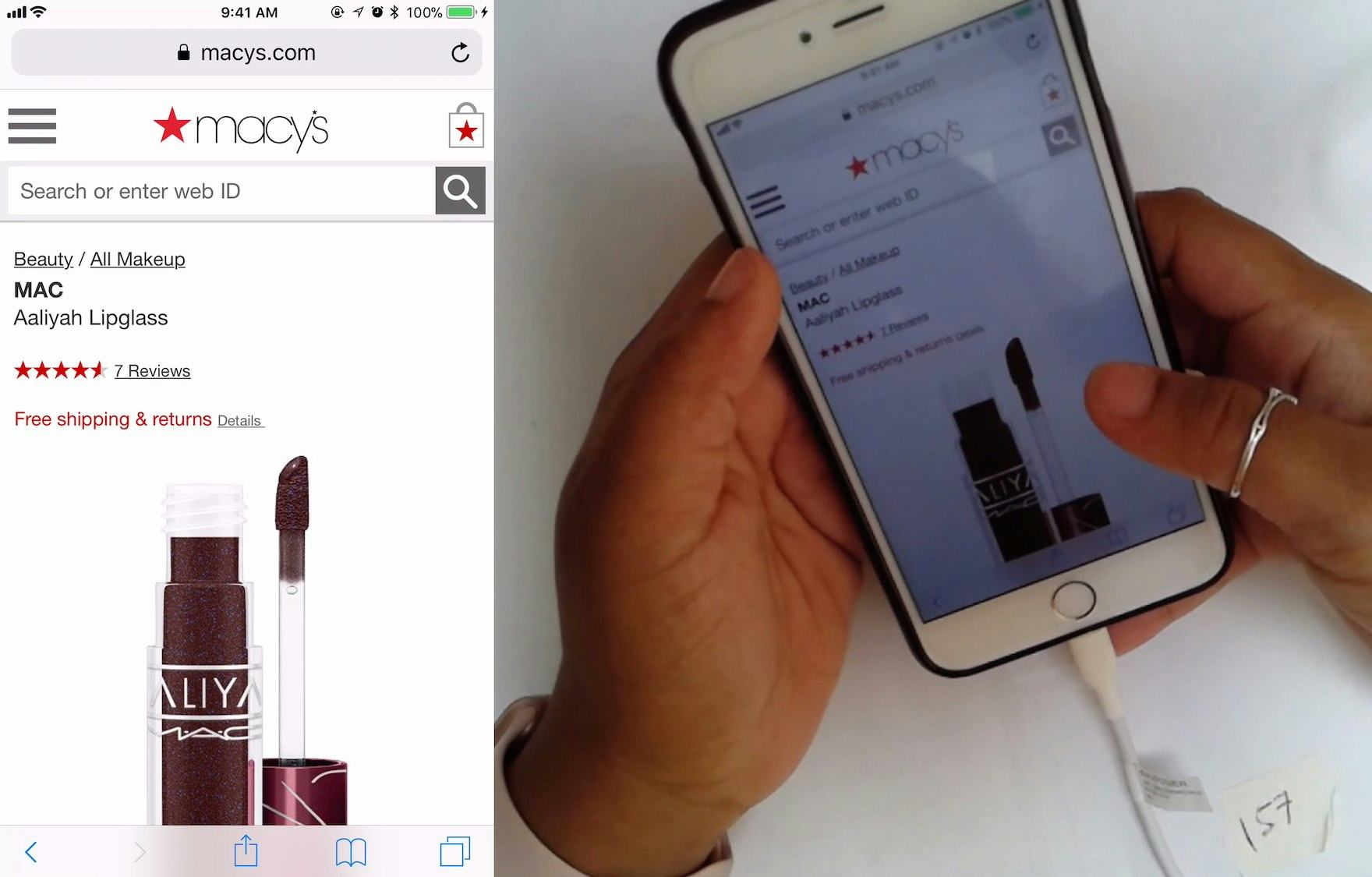

This participant arrived directly at B&H’s “TVs & Entertainment” category page from off-site (first image). She experienced severe disorientation, as she was unfamiliar with the site and struggled with the terminology. Looking for wireless speakers, she opened the main navigation and tapped the “TVs & Entertainment” category (the category page she was already on; second image), which simply closed the main navigation. On mobile, even though the main navigation isn’t permanently displayed, some users still rely on it to give them a sense of where they are on a site.

On mobile there’s typically no permanently visible main navigation.

However, we observed that mobile user behavior is similar to desktop user behavior: during mobile testing, several participants opened the main navigation to get a sense of where they were within the site hierarchy (especially participants who arrived directly on a product page from off-site).

When the current scope wasn’t highlighted in the main navigation, participants had a more difficult time figuring out where they were within the site hierarchy. This put more pressure on breadcrumbs (which were often absent, inconsistently implemented, or truncated) and on site terminology to be flawless in helping them learn the site hierarchy.

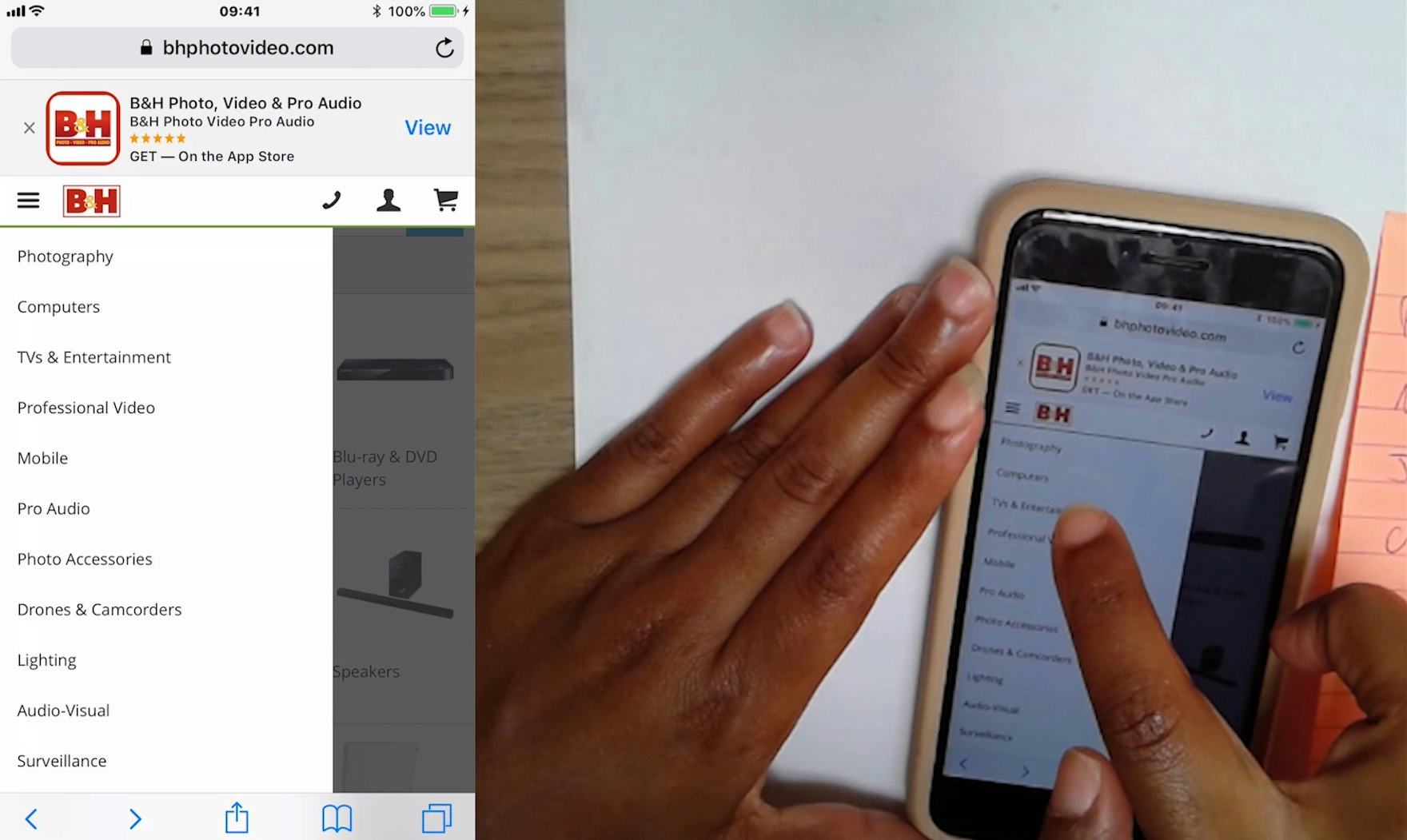

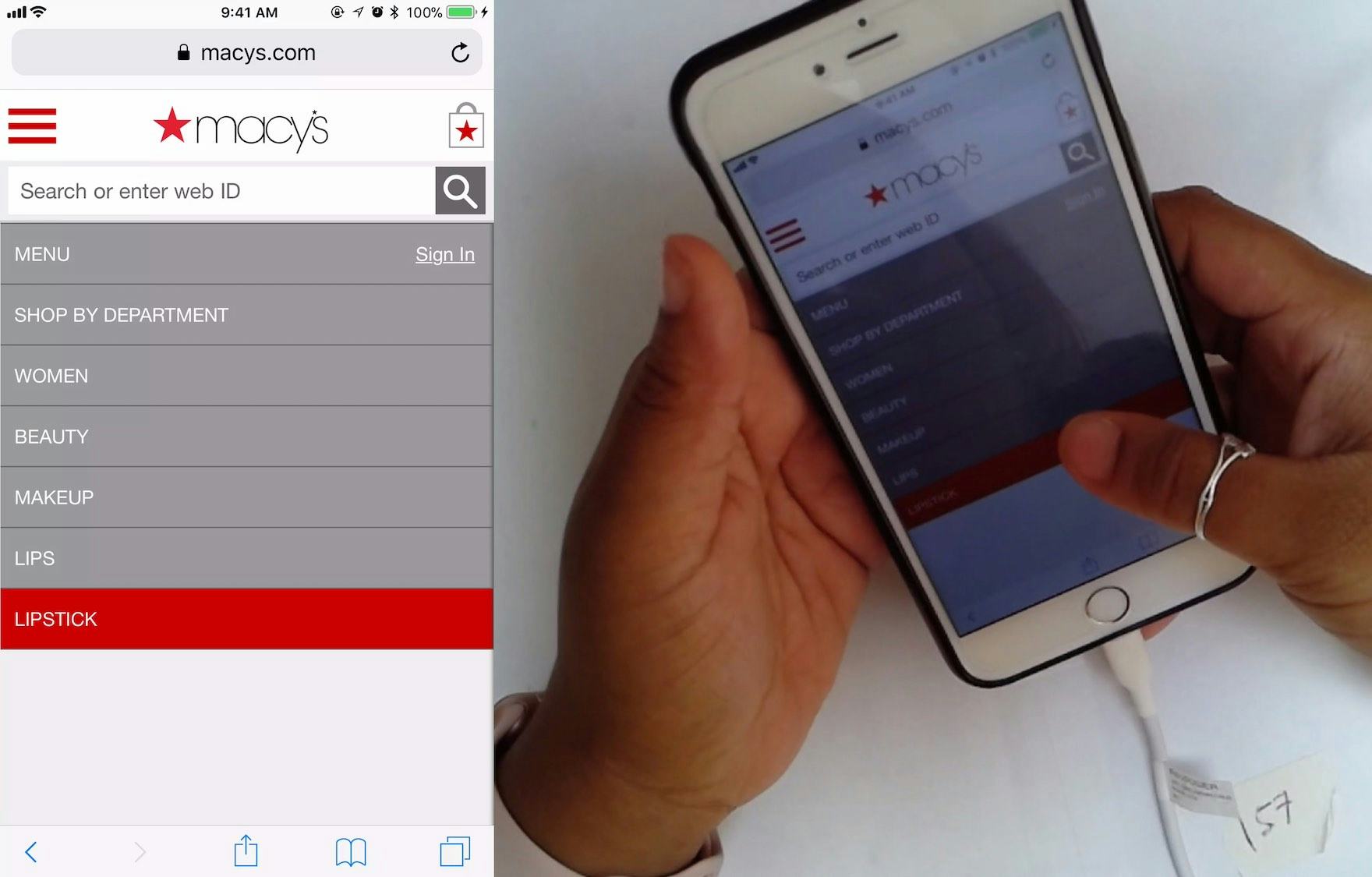

At Macy’s, a participant arrived directly at the product page for a lipstick (first image). Wanting to explore more lipsticks, she opened the main navigation and immediately understood where she was in the site hierarchy, as the trail of the top-level category and subcategories was displayed and the current scope was highlighted with a red background. She then tapped the highlighted “Lipstick” subcategory to get a lipstick product list. (Note how the mobile breadcrumbs on the product page wouldn’t have allowed her to do this as quickly, as the only breadcrumbs provided were “Beauty” and “All Makeup”.) On mobile, when there’s sufficient space in the main navigation (e.g., the main navigation takes up the entire viewport), users can be shown a more detailed hierarchy structure.

Fortunately, providing information on where users are in the main navigation has a somewhat “low-cost” solution: simply highlighting their current scope in the main navigation.

On mobile, this also means styling the current scope differently from the other main navigation options within the main navigation viewport (rather than in the header, as on desktop).

A prerequisite for both desktop and mobile implementations, however, is that the first level of the main navigation must consist of product categories; otherwise, the navigation item highlighted for users is simply “Shop” or “Products”, which doesn’t help them determine where they are.
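To make the highlighting logic concrete, here is a minimal TypeScript sketch of how a site might flag the current scope (and its parent categories) in a category tree before rendering the mobile main navigation. The `NavItem` shape, the slug-based path, and the `markCurrentScope` function are hypothetical assumptions for illustration, not taken from any benchmarked site. It only helps when the first level of the tree consists of product categories.

```typescript
// Hypothetical main-navigation entry (an assumption for illustration).
interface NavItem {
  label: string;
  slug: string;
  children: NavItem[];
}

// Navigation entry annotated with the state needed to style it.
interface MarkedNavItem extends NavItem {
  isCurrent: boolean;  // the scope the user is currently viewing
  isAncestor: boolean; // a parent category of the current scope
  children: MarkedNavItem[];
}

// Flags the item matching the last slug in `currentPath`, plus every item
// on the path leading to it, so the renderer can highlight the whole trail.
function markCurrentScope(items: NavItem[], currentPath: string[], depth = 0): MarkedNavItem[] {
  return items.map((item) => {
    const onPath = depth < currentPath.length && currentPath[depth] === item.slug;
    const isCurrent = onPath && depth === currentPath.length - 1;
    return {
      ...item,
      isCurrent,
      isAncestor: onPath && !isCurrent,
      // Only keep following the path inside the matching branch.
      children: markCurrentScope(item.children, onPath ? currentPath : [], depth + 1),
    };
  });
}

// Example: a user who landed on a lipstick product page.
const nav: NavItem[] = [
  {
    label: "Beauty",
    slug: "beauty",
    children: [
      { label: "Makeup", slug: "makeup", children: [{ label: "Lipstick", slug: "lipstick", children: [] }] },
    ],
  },
  { label: "Women", slug: "women", children: [] },
];
const marked = markCurrentScope(nav, ["beauty", "makeup", "lipstick"]);
```

The renderer would then give `isCurrent` items a distinct background and expand the `isAncestor` branches, similar to the Macy’s example above.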

2) 32% of Mobile Sites Don’t Make Product Categories the First Level of the Main Navigation

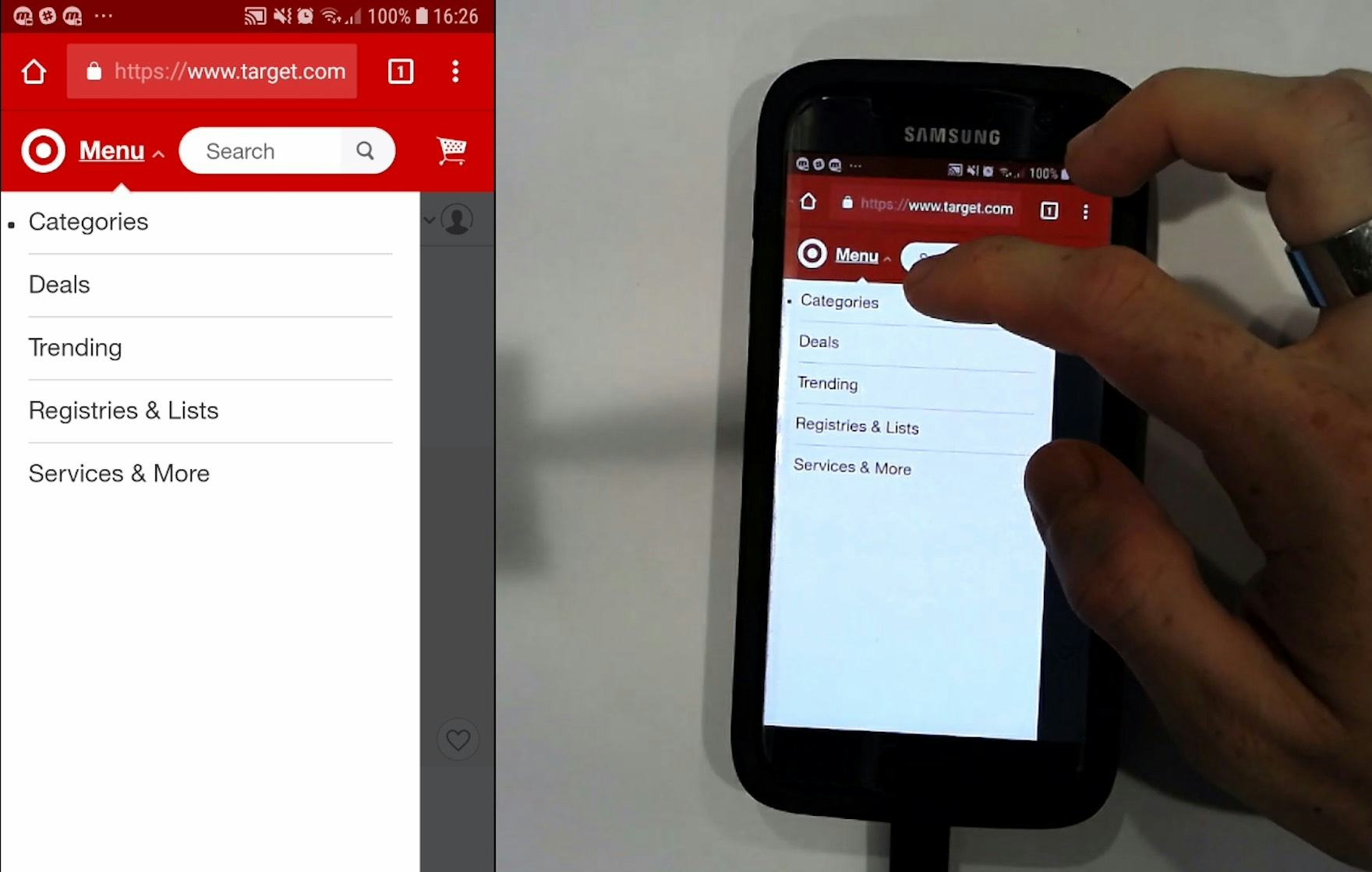

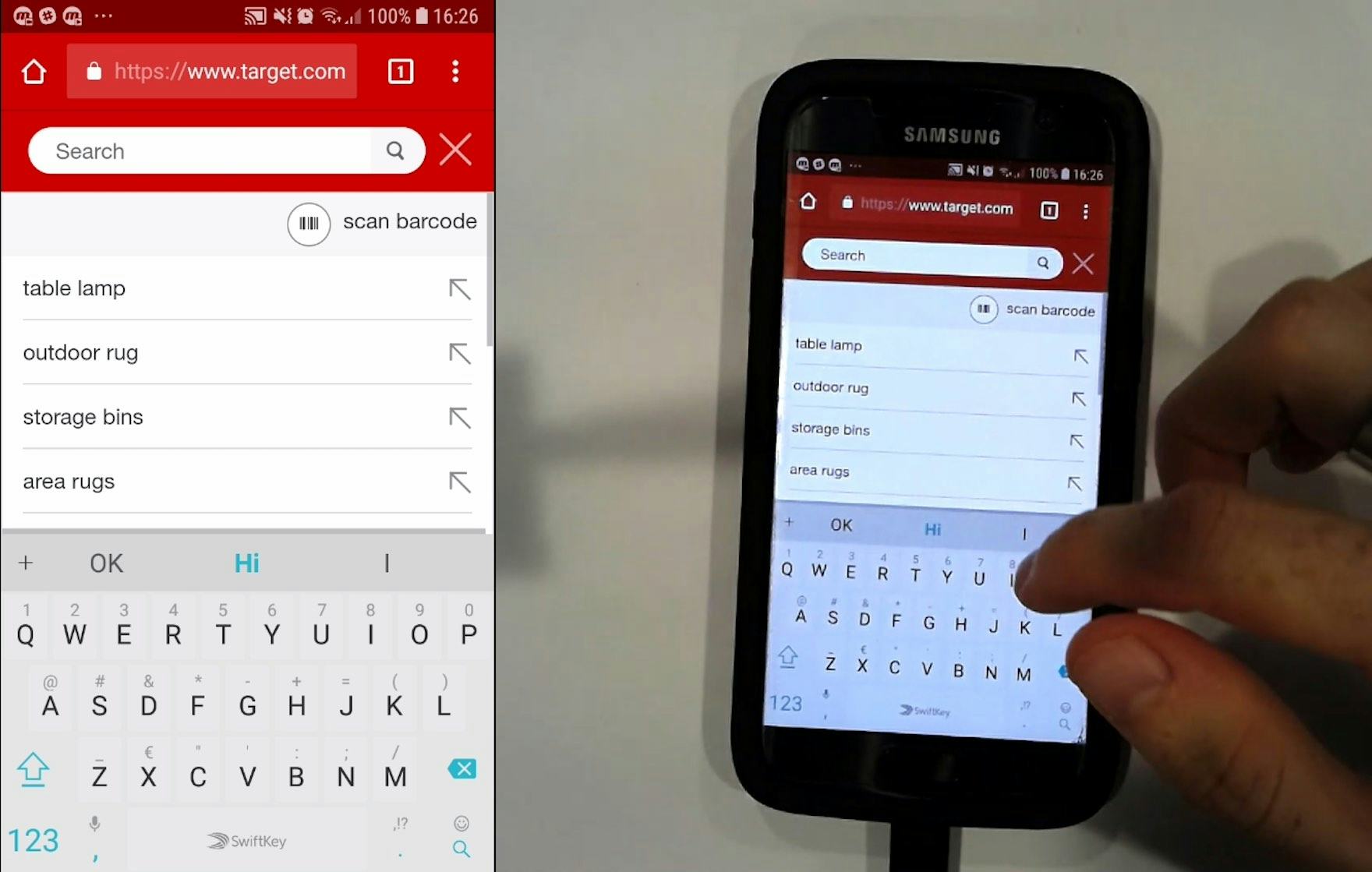

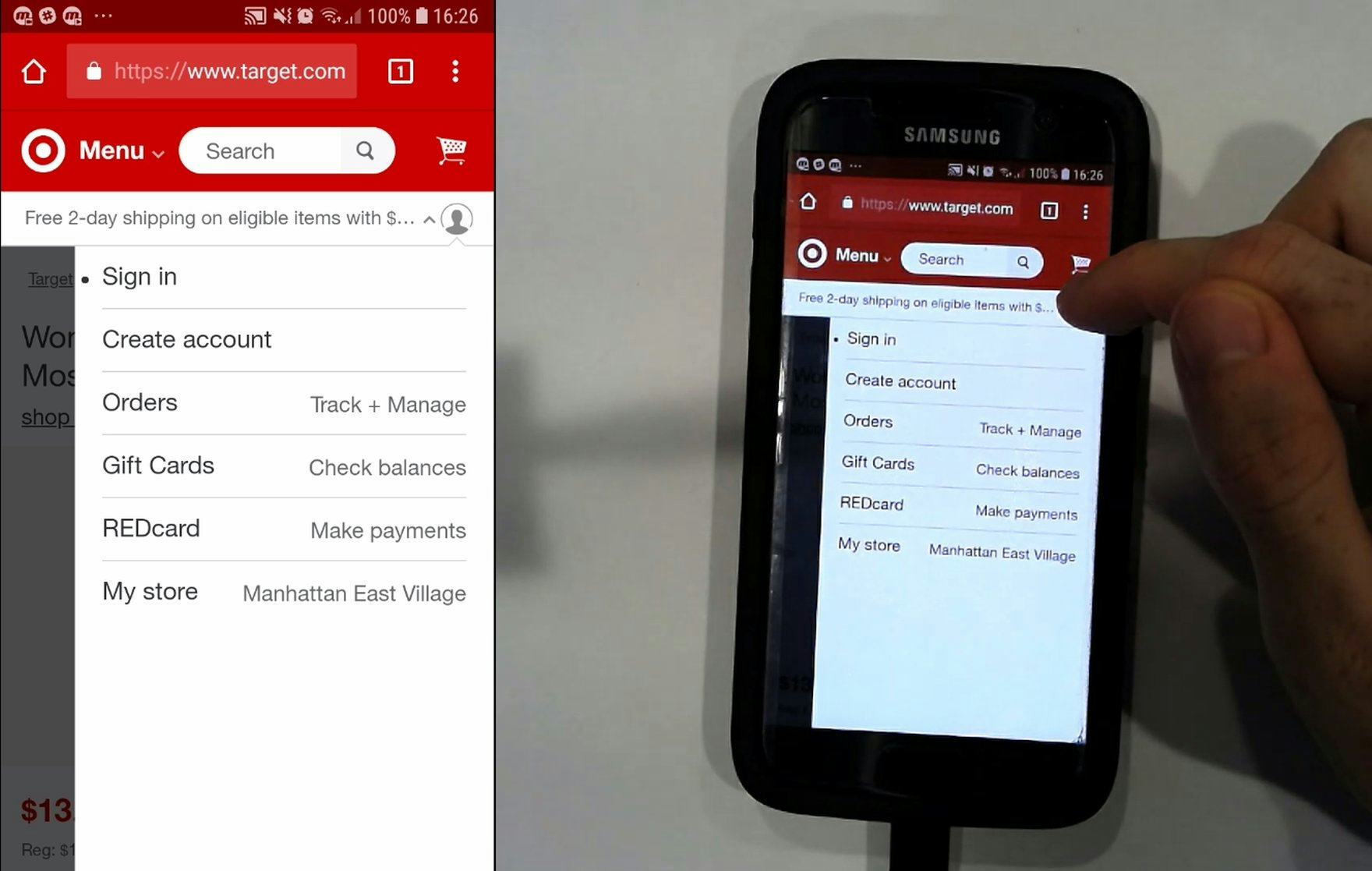

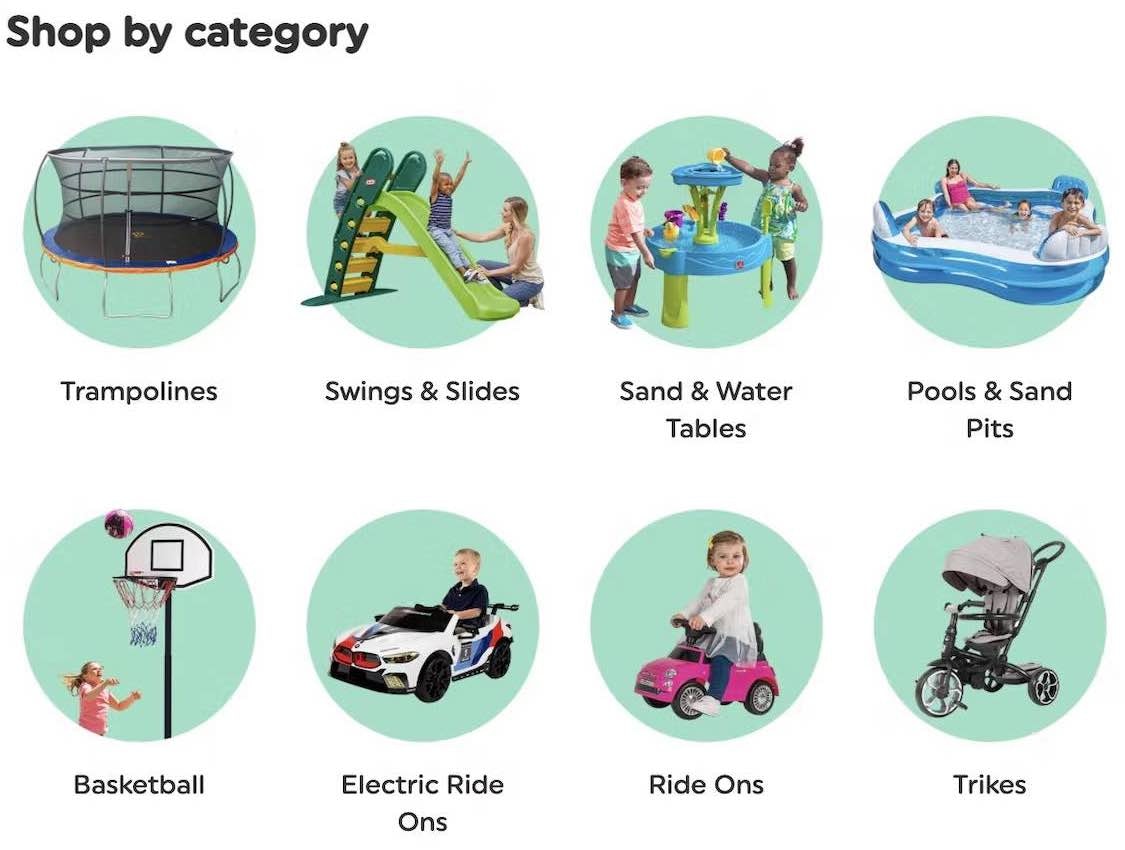

“This is very confusing.” A participant at Target opens the main navigation (first image), scans the options, and decides to search instead (second image). There’s no immediate entry point for users looking to browse products, as all product categories are collapsed into “Categories”. Furthermore, note the white space — product categories could easily have been provided here to help users get started browsing products.

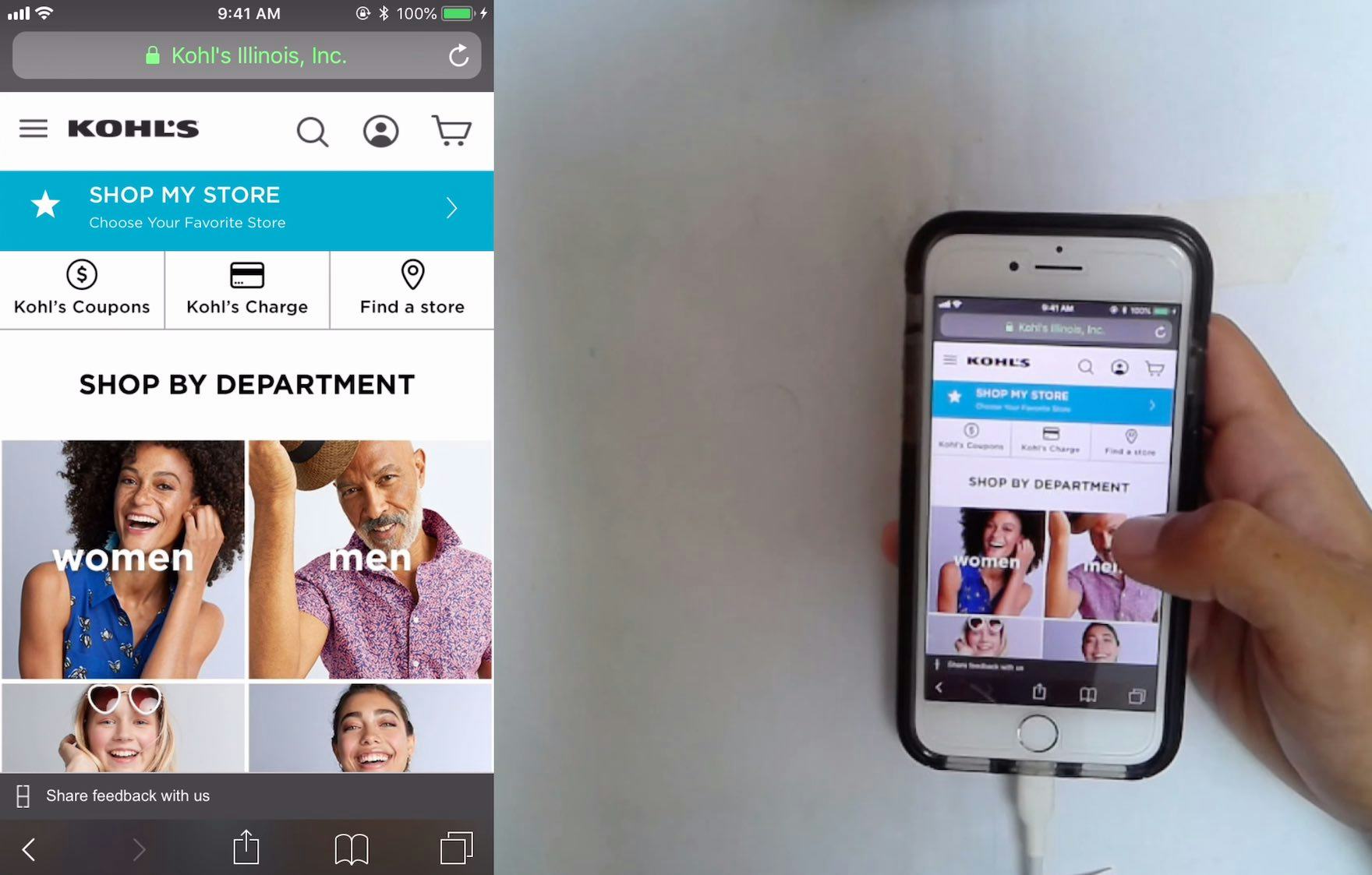

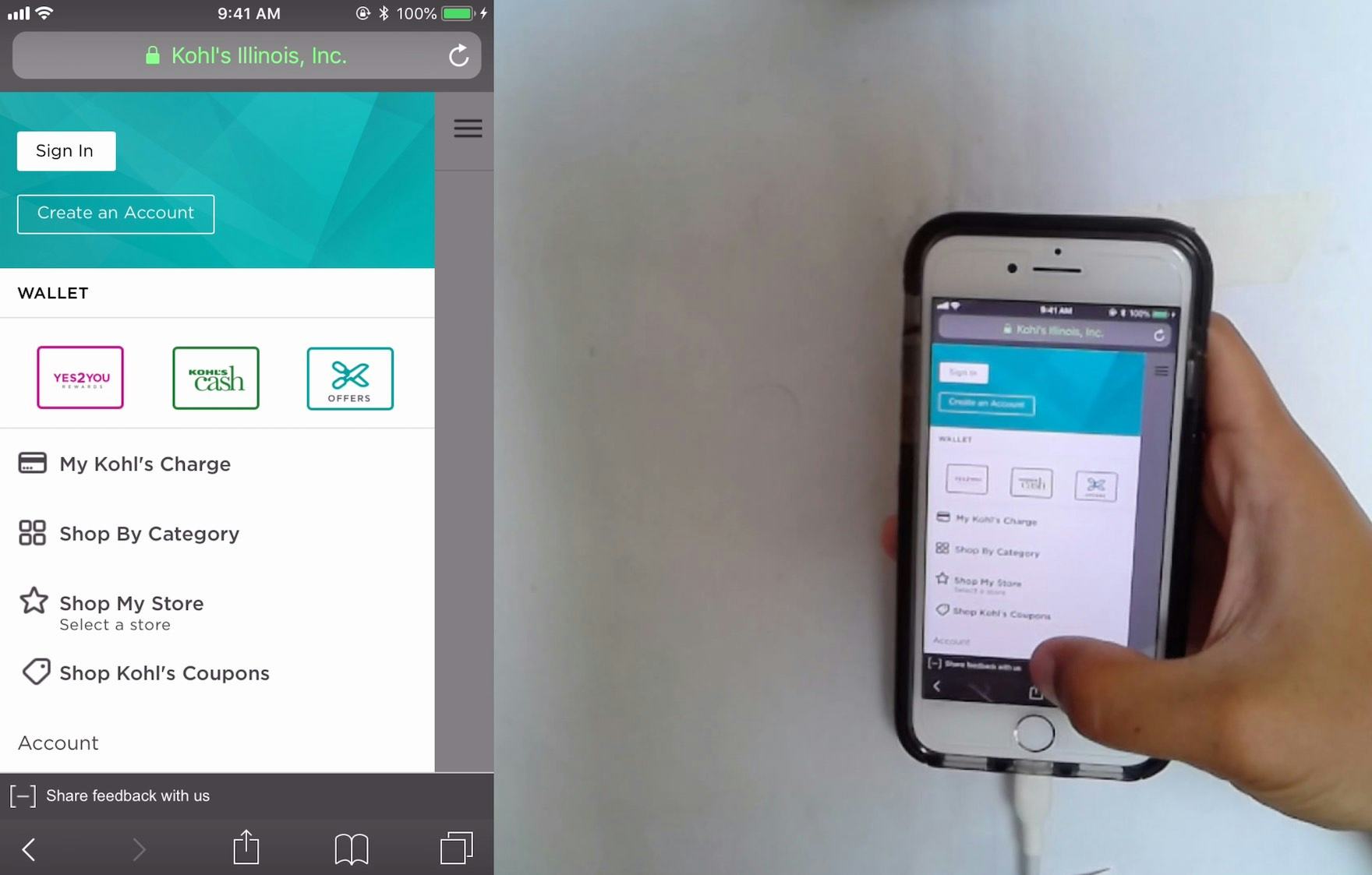

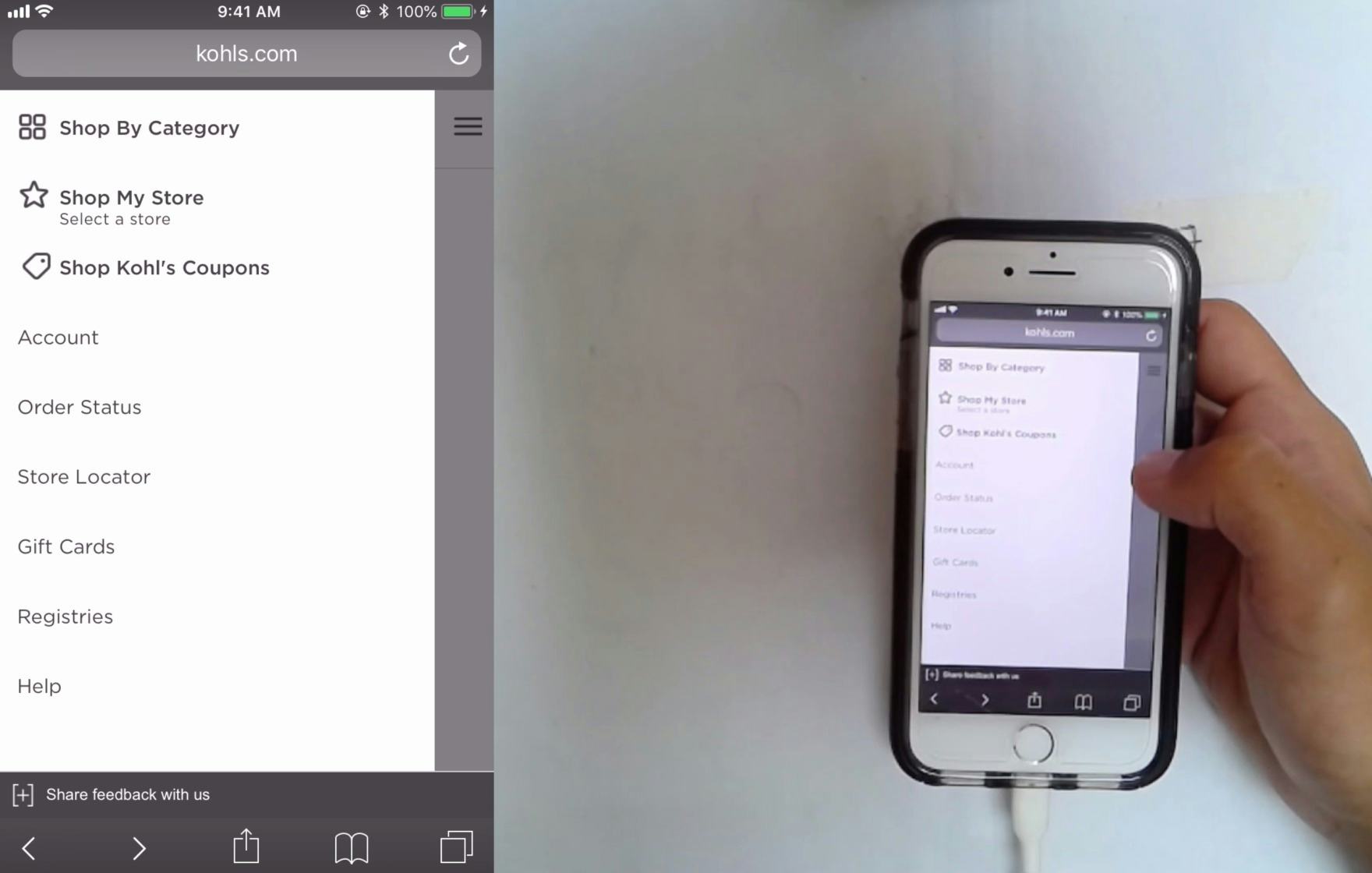

“Whatever this is here [the homepage categories] should be where that is [in the menu].” A participant at Kohl’s expressed his desire to have the product categories, which are available on the homepage (first image), immediately accessible in the main navigation (second image).

During mobile testing, when the main navigation collapsed product categories behind a “Shop” link or something similar, many participants had difficulty knowing where to start browsing products once they’d opened the main navigation.

It isn’t always clear why some users find it so difficult to access the product catalog when it’s collapsed behind a “Shop” link in the mobile main navigation. However, many sites do a poor job of indicating primary vs. secondary paths on mobile, don’t provide visual styling to indicate hierarchy, or clutter the main navigation with ads, all of which make it unnecessarily difficult for users to identify the primary path for simply browsing products.

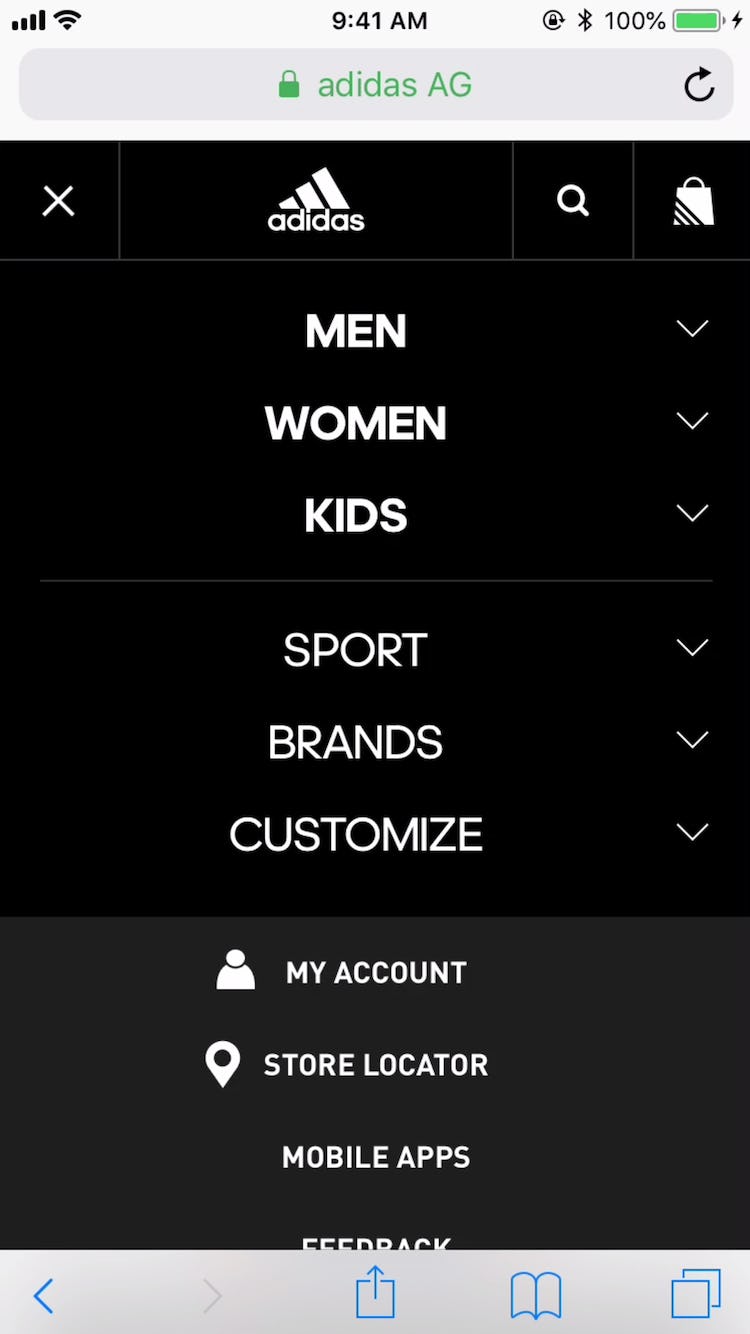

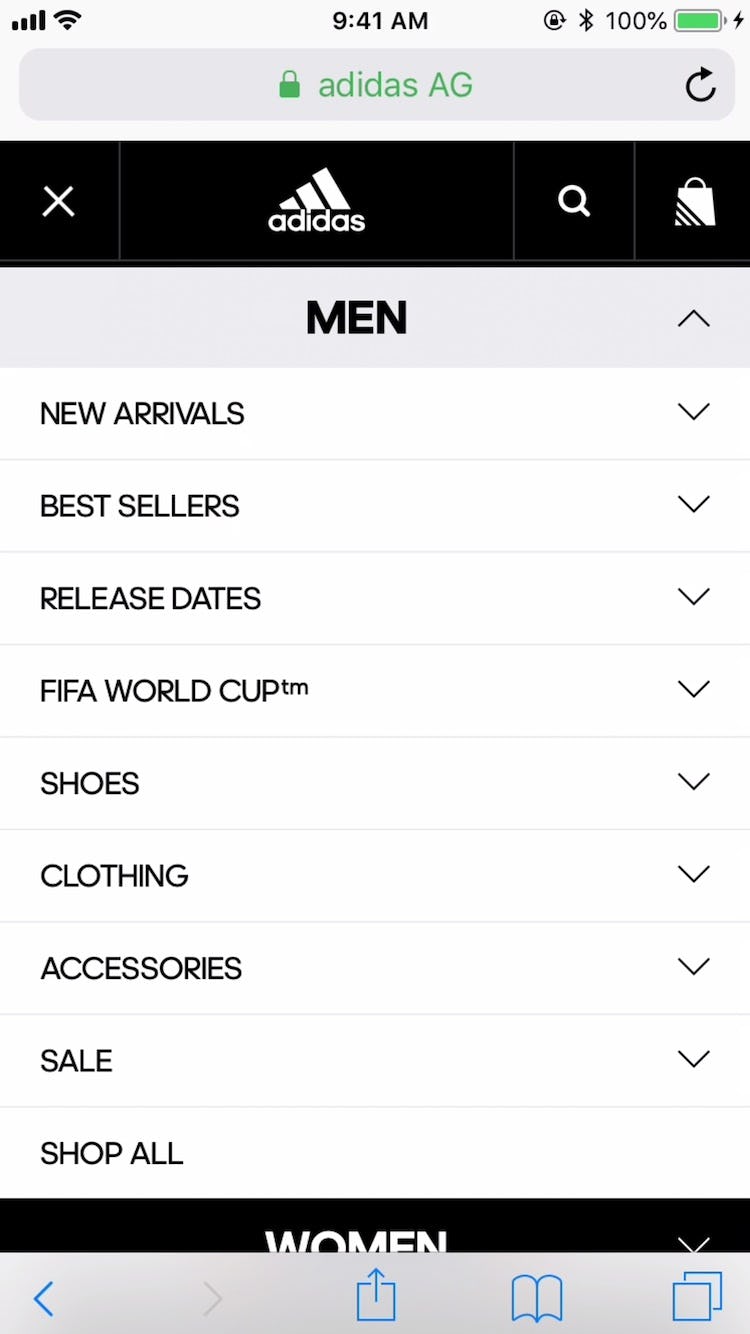

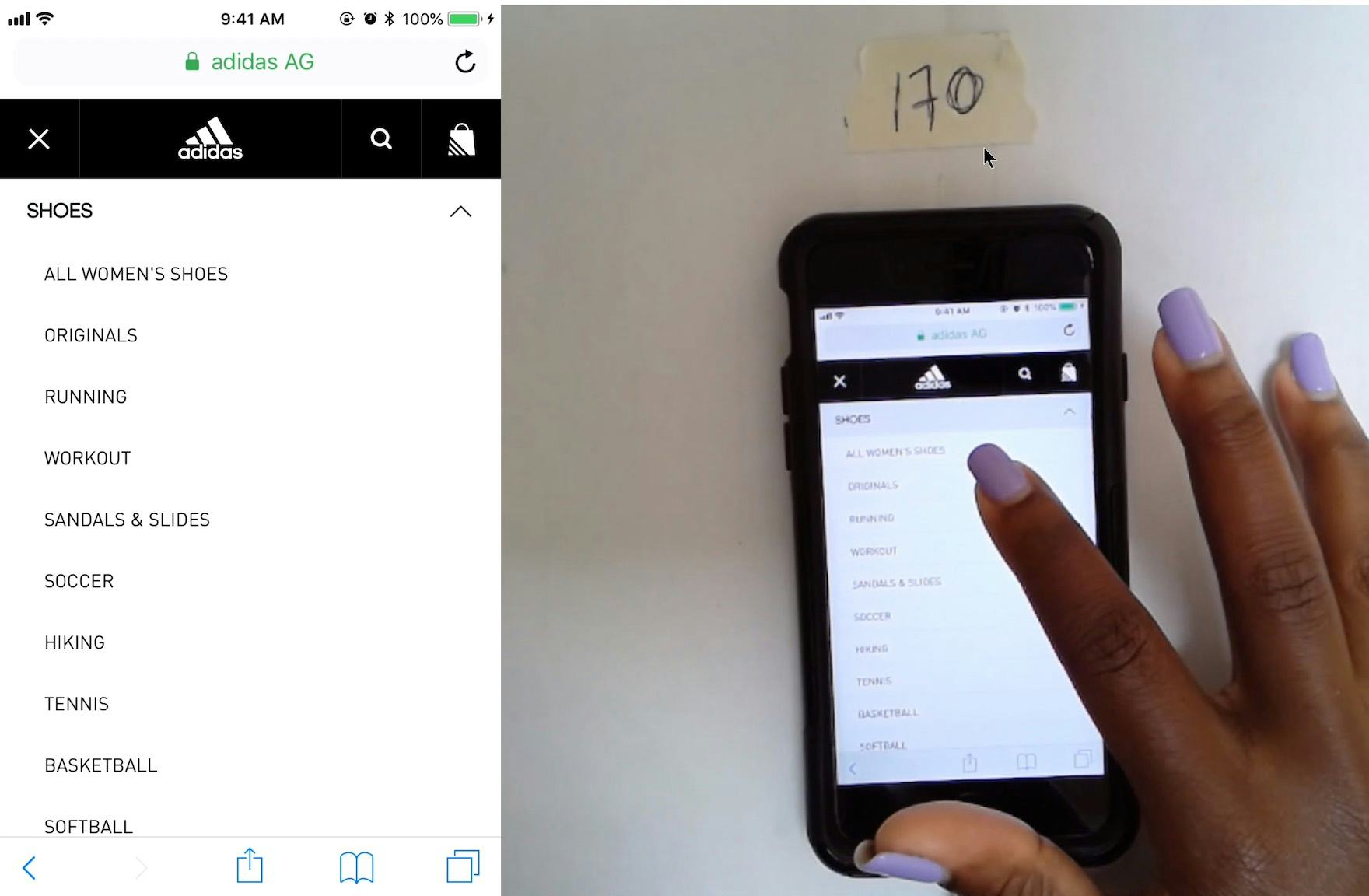

“So this time I’m going to the categories because they are little bit more easier to navigate. I clicked ‘Men’s’ and ‘Clothing’, and it directs me right to the hoodies and sweatshirts. So it’s really easy and I don’t have to use the search bar.” A participant at Adidas easily navigated to a product list using the main navigation, where the first level of product categories was shown directly, whereas at a previous site (Target) he had difficulty using the main navigation, as all product categories were collapsed into a “Categories” option.

The commonly used design solution observed to perform the best is simply to make the first level of the main navigation consist of product categories.

Displaying product categories directly in the main navigation gives users a way to begin immediately browsing product categories, which is especially important for mobile users.

3) 54% of Mobile Sites Don’t Provide a “View All” Option at Each Level of the Product Catalog

Many mobile users want the broadest scope within a category possible; for example, “All Men’s Shoes” or “All Backpacks”.

However, as there’s no hover option for mobile users, mobile sites have to determine whether a user tapping a main category should expand the subcategories within it, or take users to the landing page for the broad main category item (e.g., a broad product list or an intermediary category page).

In practice, most mobile sites choose a design pattern where tapping a main category option drills further down the category taxonomy, and users are only taken to the category’s landing page or product list when there are no more layers of subcategories to reveal.

Consequently, during mobile testing some participants had difficulty navigating to the broadest product scopes, making it hard for them to access the most relevant category pages and product lists.

Furthermore, some users become stuck in much too narrow and deeply nested subcategories.

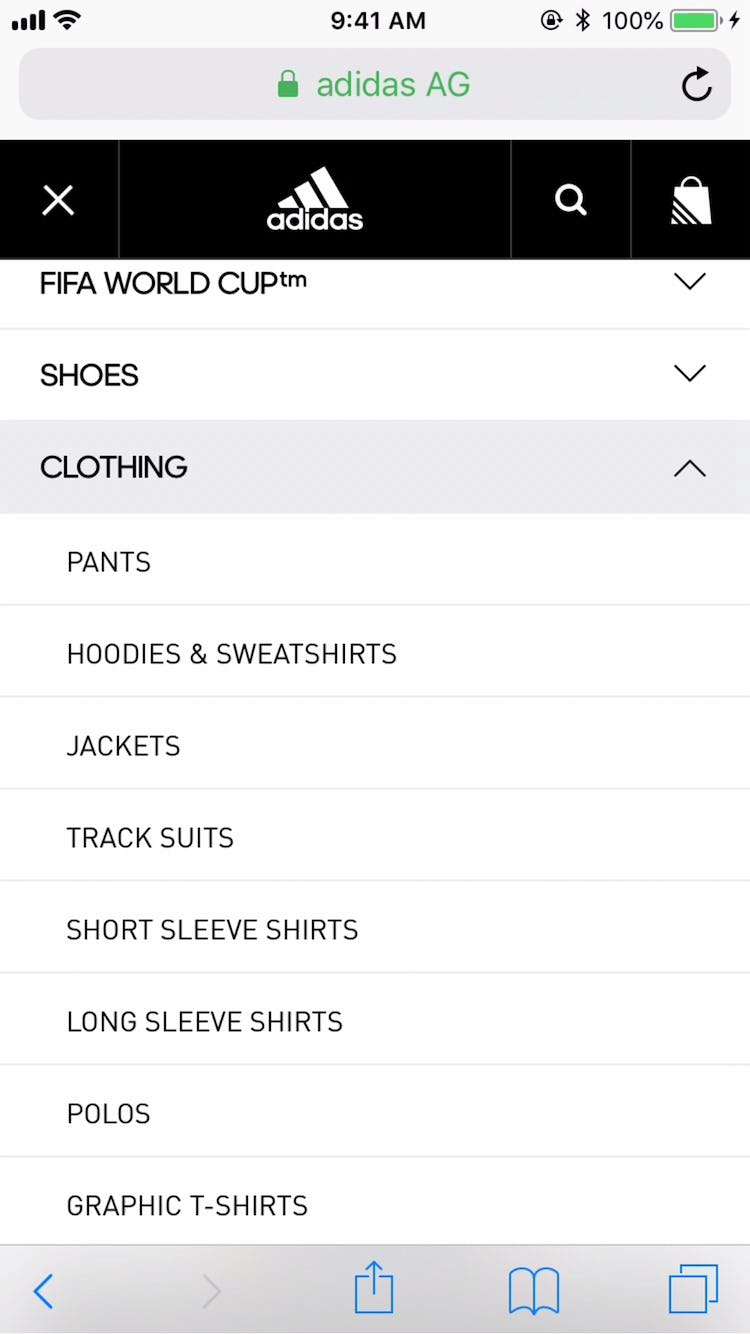

Adidas includes a “View All” menu option at each layer in the hierarchy, ensuring users can easily access a product list containing all the products available in a particular subcategory.

What was observed to perform consistently well during testing was to have a “View All” menu item nested within every product category in the hierarchy.

For example, a site with the category “Women” and the subcategories “Clothing” and “Coats” would have a “View All” menu item at each level: “View All Women’s”, “View All Women’s Clothing”, “View All Coats”.

This approach provides users with options when it comes to viewing products — either to keep drilling down until the right subcategory is reached, or to stop and view the current list of products.
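One way to generate these entries automatically is sketched below in TypeScript. The `Category` and `MenuEntry` shapes and the `buildMenu` function are hypothetical. Every category with subcategories gets a “View All” entry as its first child, and leaf categories simply link to their own product list, which serves the same purpose. The optional `viewAllLabel` covers wording such as “View All Women’s Clothing” that can’t be derived from the category label alone.

```typescript
// Hypothetical category node (an assumption for illustration).
interface Category {
  label: string;         // e.g. "Clothing"
  url: string;           // landing page or product list for the whole category
  viewAllLabel?: string; // optional override, e.g. "View All Women's Clothing"
  children: Category[];
}

// Entry rendered in the mobile main navigation.
interface MenuEntry {
  label: string;
  url?: string;           // set when tapping navigates to a page
  children?: MenuEntry[]; // set when tapping drills down one level
}

// Converts a category tree into menu entries, prepending a "View All" entry
// to every level so users can stop drilling down at any point.
function buildMenu(categories: Category[]): MenuEntry[] {
  return categories.map((cat): MenuEntry => {
    if (cat.children.length === 0) {
      // Leaf category: tapping it goes straight to its product list.
      return { label: cat.label, url: cat.url };
    }
    const viewAll: MenuEntry = { label: cat.viewAllLabel ?? `View All ${cat.label}`, url: cat.url };
    return { label: cat.label, children: [viewAll, ...buildMenu(cat.children)] };
  });
}

// Example based on the Women > Clothing > Coats hierarchy described above.
const menu = buildMenu([
  {
    label: "Women",
    url: "/women",
    viewAllLabel: "View All Women's",
    children: [
      {
        label: "Clothing",
        url: "/women/clothing",
        viewAllLabel: "View All Women's Clothing",
        children: [{ label: "Coats", url: "/women/clothing/coats", children: [] }],
      },
    ],
  },
]);
```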

For inspiration on “perfect” Mobile Main Navigation implementations, see Gap, Adidas, The Vitamin Shoppe, and Zooplus.

Mobile Search: Autocomplete

The Mobile Search Autocomplete performance for most e-commerce sites is just above “mediocre”, with 54% of sites overall performing “decent” or higher.

Autocomplete query suggestions are implemented on 96% of e-commerce sites and should, therefore, be considered a “web convention” for e-commerce search fields.

Generally, mobile sites perform well by quickly loading autocomplete suggestions, not using redundant or irrelevant autocomplete options, and supporting keyword queries.

However, there are 2 aspects that could be much improved:

4) 48% of Mobile Sites Don’t Offer Relevant Autocomplete Suggestions for Closely Misspelled Search Terms and Queries

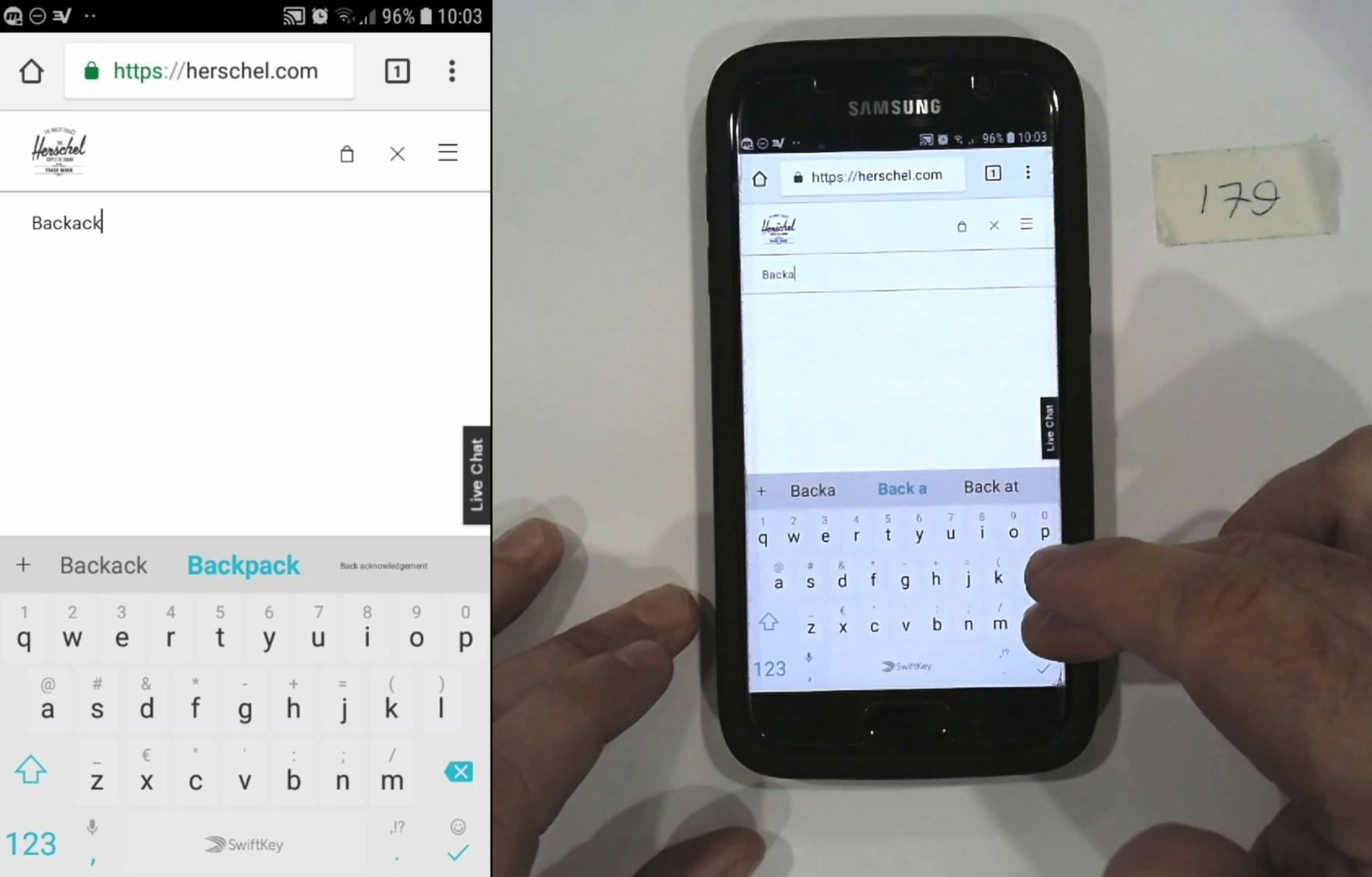

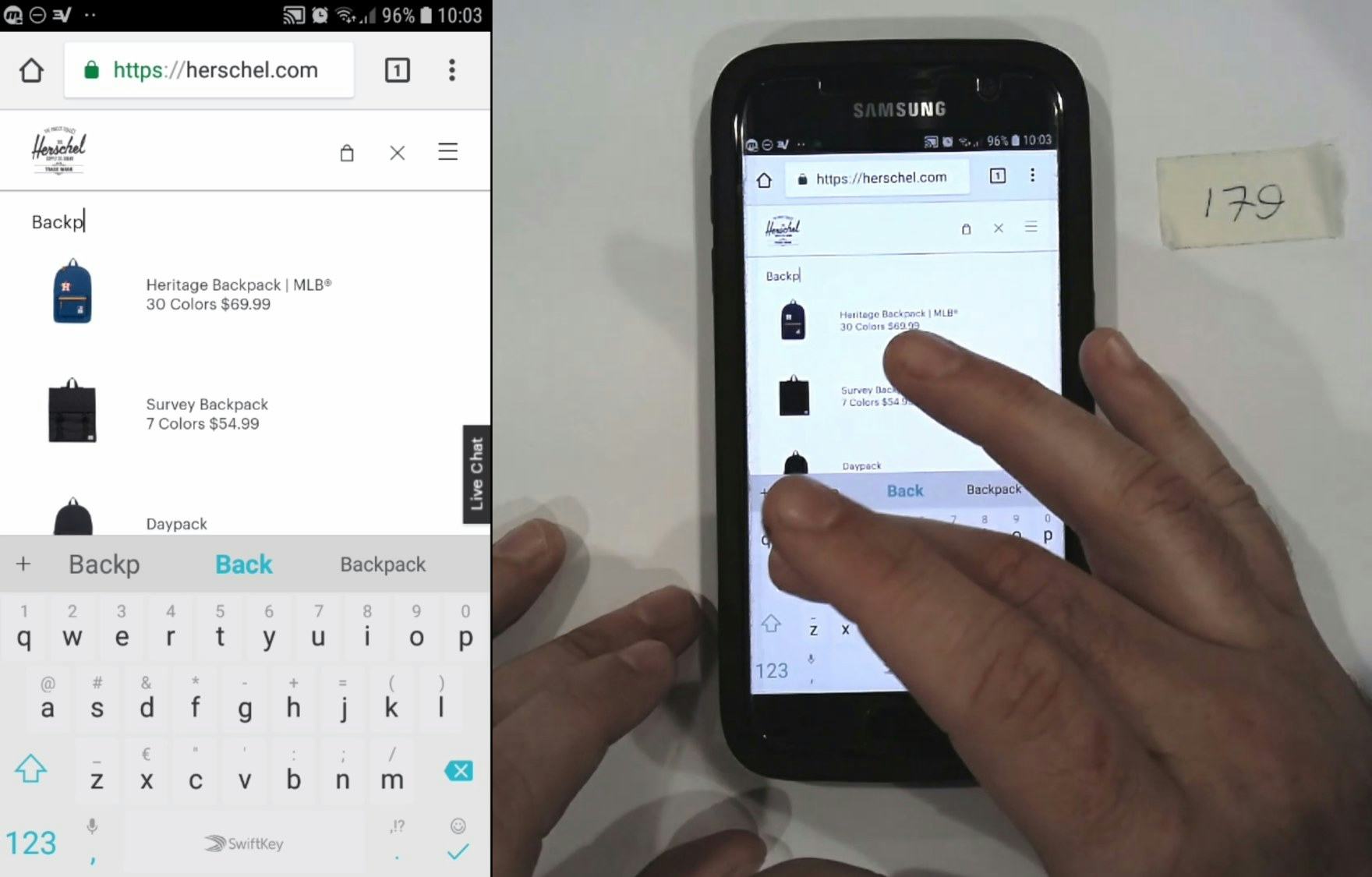

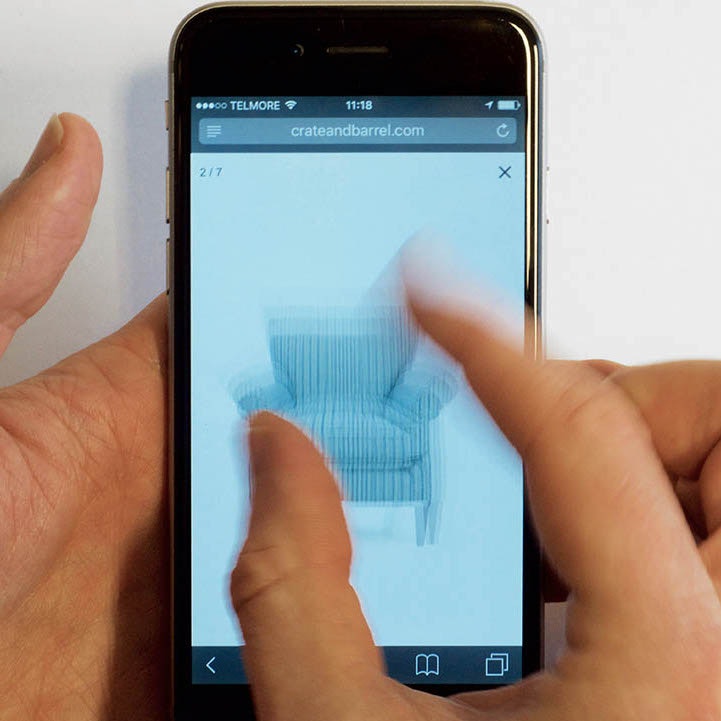

In an attempt to find backpacks on Herschel, a participant mistyped his query, omitting a letter, and the product suggestions he’d previously seen disappeared (first image). He deleted characters until the point where he could insert the missing letter “p” and added it, at which time backpack product suggestions began to appear (second image). Autocomplete should be able to intelligently interpret such obvious misspellings, especially at a site like Herschel where much of the product catalog is backpacks. Failing to adequately support misspellings in autocomplete leads to more friction to find a product or product list — especially on Mobile, where typing in general, not to mention editing queries, is more difficult.

During testing, nearly all participants relied on the guidance of autocomplete suggestions at some point when devising queries, but those suggestions often failed users if queries contained even the slightest spelling error (e.g., searching “furnture” instead of “furniture”).

Search queries typed with less than 100% accuracy were common during Mobile testing, and Mobile keyboard use was observed to be especially error-prone.

Yet users’ misspelled queries were frequently met with autocomplete suggestions that were irrelevant or that disappeared once an error was detected, effectively removing the very guidance that the suggestions are intended to provide.

Since autocomplete plays a key role in early search interactions, unexpected suggestions due to minor typos can cause users to change their product-finding strategies by nudging them to seek other browsing methods or rework queries.

In the worst cases, unexpected or missing autocomplete suggestions contribute to abandonment downstream if alternate product-finding strategies don’t quickly lead to relevant results.

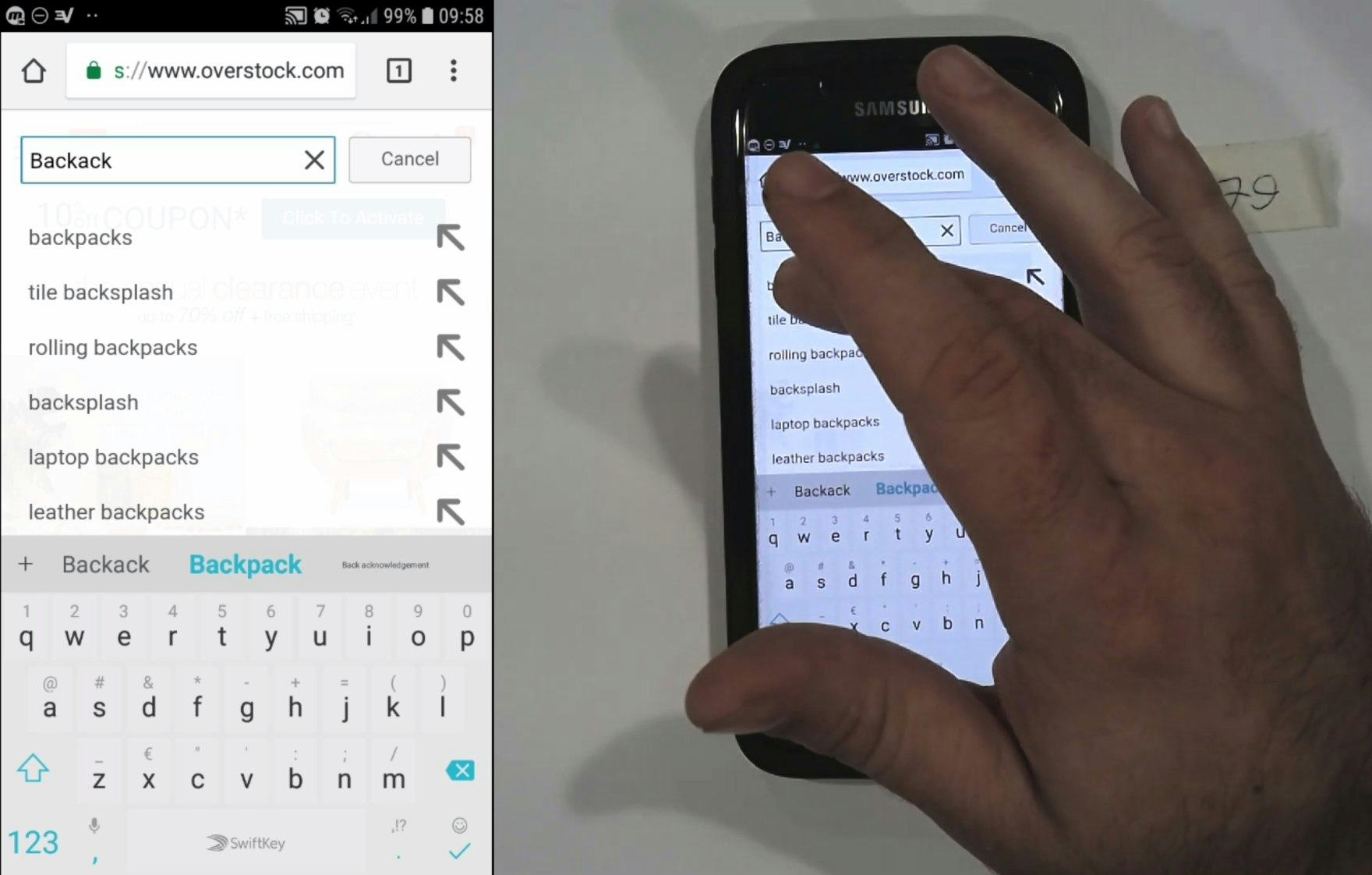

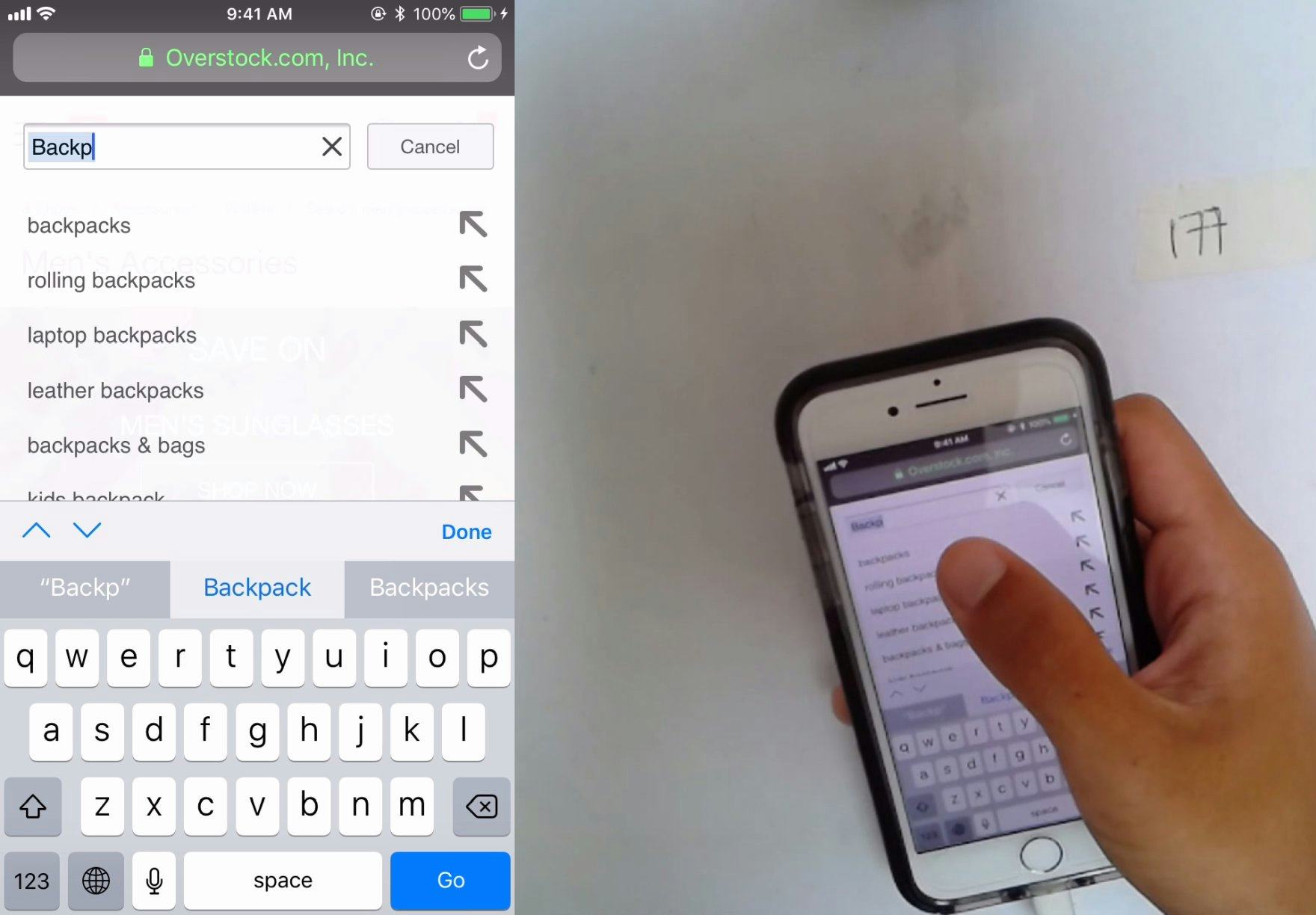

A participant at Overstock typed the query “backack” and left off the letter “p” — the same error a different user made at Herschel — but here Overstock supports what is likely a quite common misspelling and the user was able to submit the query uninterrupted by tapping the first autocomplete suggestion. Anticipating common misspellings within autocomplete and suggesting the correct spelling eliminates any query refactoring, allowing users to move smoothly into product exploration with a single tap.

Since spelling errors in search queries do occur with significant frequency, autocomplete’s relevance can be enhanced by mapping misspelled words to meaningful autocomplete suggestions.
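One common technique for this kind of mapping (though not the only one) is edit distance. The TypeScript sketch below computes the smallest Levenshtein distance between the typed query and any prefix of each known suggestion, so a partially typed, misspelled query can still match. The suggestion list, the thresholds (1 edit for short queries, 2 for longer ones), and the `suggest` function are illustrative assumptions. Production search engines usually rely on their own fuzzy-matching or spell-correction features instead.

```typescript
// Smallest Levenshtein distance between `query` and any prefix of `candidate`.
function prefixEditDistance(query: string, candidate: string): number {
  const n = candidate.length;
  // prev[j] = edit distance between the query so far and candidate[0..j-1]
  let prev: number[] = Array.from({ length: n + 1 }, (_, j) => j);
  for (let i = 1; i <= query.length; i++) {
    const curr: number[] = [i];
    for (let j = 1; j <= n; j++) {
      const cost = query[i - 1] === candidate[j - 1] ? 0 : 1;
      curr[j] = Math.min(
        prev[j] + 1,        // drop a character from the query
        curr[j - 1] + 1,    // add a missing character to the query
        prev[j - 1] + cost, // substitute (or match) a character
      );
    }
    prev = curr;
  }
  // The last row holds distances to every prefix; take the best one.
  return Math.min(...prev);
}

// Returns suggestions that match the query despite small typos, best first.
function suggest(query: string, suggestions: string[], limit = 5): string[] {
  const q = query.trim().toLowerCase();
  if (q.length < 3) {
    // Too short to guess at typos reliably: plain prefix matching only.
    return suggestions.filter((s) => s.toLowerCase().startsWith(q)).slice(0, limit);
  }
  const maxEdits = q.length <= 5 ? 1 : 2;
  return suggestions
    .map((s) => ({ s, d: prefixEditDistance(q, s.toLowerCase()) }))
    .filter(({ d }) => d <= maxEdits)
    .sort((a, b) => a.d - b.d)
    .slice(0, limit)
    .map(({ s }) => s);
}
```

With these assumptions, “backack” still surfaces “backpacks” (one missing letter), while an unrelated suggestion such as “jackets” is filtered out.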

There are existing spell-check solutions (many of them freely available online), which means common misspellings should be relatively cheap to catch.

However, depending on the search engine and autocomplete implementation, it may not be feasible to integrate an off-the-shelf solution.

Additionally, misspellings of brand names or highly specialized products may be difficult to catch.

Careful monitoring of autocomplete query logs, search logs, or both (depending on what the search engine and autocomplete implementation record) should shed light on the misspelled queries users enter into the search field, which is a good starting point for analyzing and prioritizing improvements to autocomplete spelling suggestions.
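As a starting point for that analysis, the TypeScript sketch below ranks queries that returned zero results by frequency, which tends to surface frequent misspellings. The `SearchLogEntry` shape is a hypothetical assumption; real logs will differ. Zero-result queries also include products the site simply doesn’t carry, so the output still needs manual review.

```typescript
// Hypothetical search log record (an assumption; adapt to your own logging).
interface SearchLogEntry {
  query: string;
  resultCount: number;
}

// Returns zero-result queries, normalized and ranked by how often they occur.
function topZeroResultQueries(log: SearchLogEntry[], limit = 20): Array<[string, number]> {
  const counts = new Map<string, number>();
  for (const entry of log) {
    if (entry.resultCount > 0) continue; // only queries that found nothing
    const q = entry.query.trim().toLowerCase();
    if (q === "") continue;
    counts.set(q, (counts.get(q) ?? 0) + 1);
  }
  return Array.from(counts.entries())
    .sort((a, b) => b[1] - a[1]) // most frequent first
    .slice(0, limit);
}
```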

5) 78% of Mobile Sites Don’t Include the Search Scope in the Autocomplete Suggestions

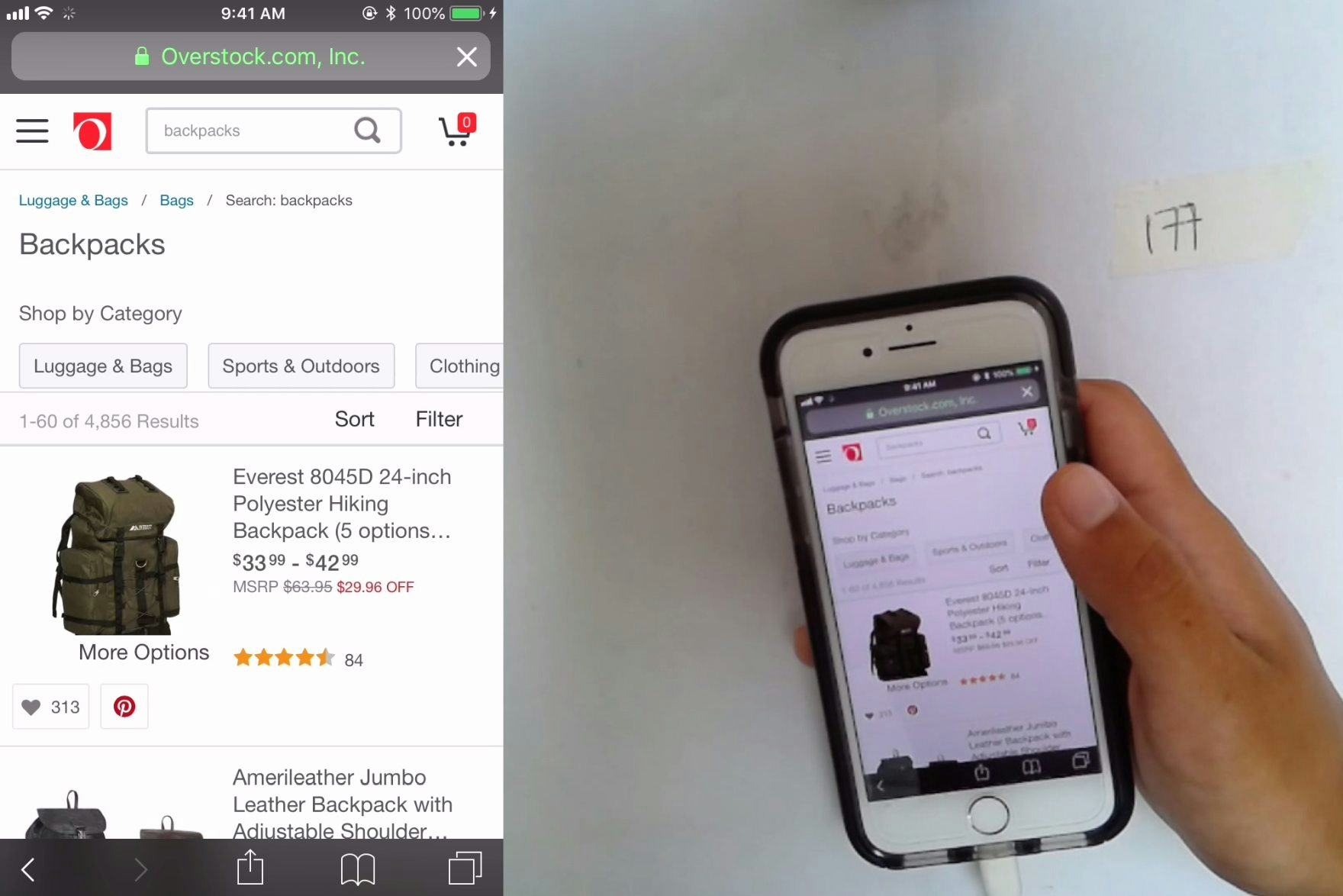

“Oh my goodness.” At Overstock, where autocomplete category scope suggestions were not present, a participant selected a query suggestion for “backpacks” (first image). When he reached the search results, he was overwhelmed by the 4,856 products presented (second image). From there, he had further difficulty with the promoted filter options and selected “school backpacks”, which was overly narrow, presenting only 2 products (“So, only two results?”). He spent several minutes attempting and then backing out of various filter options to narrow the large results set, all of which could have been avoided through presentation of relevant category search scope suggestions while he was devising his query.

Applying a search category scope, such as a user seeking “Pots in Gardening” vs. “Pots in Kitchen”, is not a natural part of most users’ thought process — rather, they’re thinking of the type of product they want and trying to come up with terms that may prove well-suited for producing such results.

However, once users are exposed to category scope suggestions, they can be a useful way to preselect a narrower and more relevant list of products, instead of conducting a sitewide search.

Without category scope suggestions or when category scopes are not obvious, users can select sitewide query suggestions that span many categories and end up arriving at an overwhelming number of results.

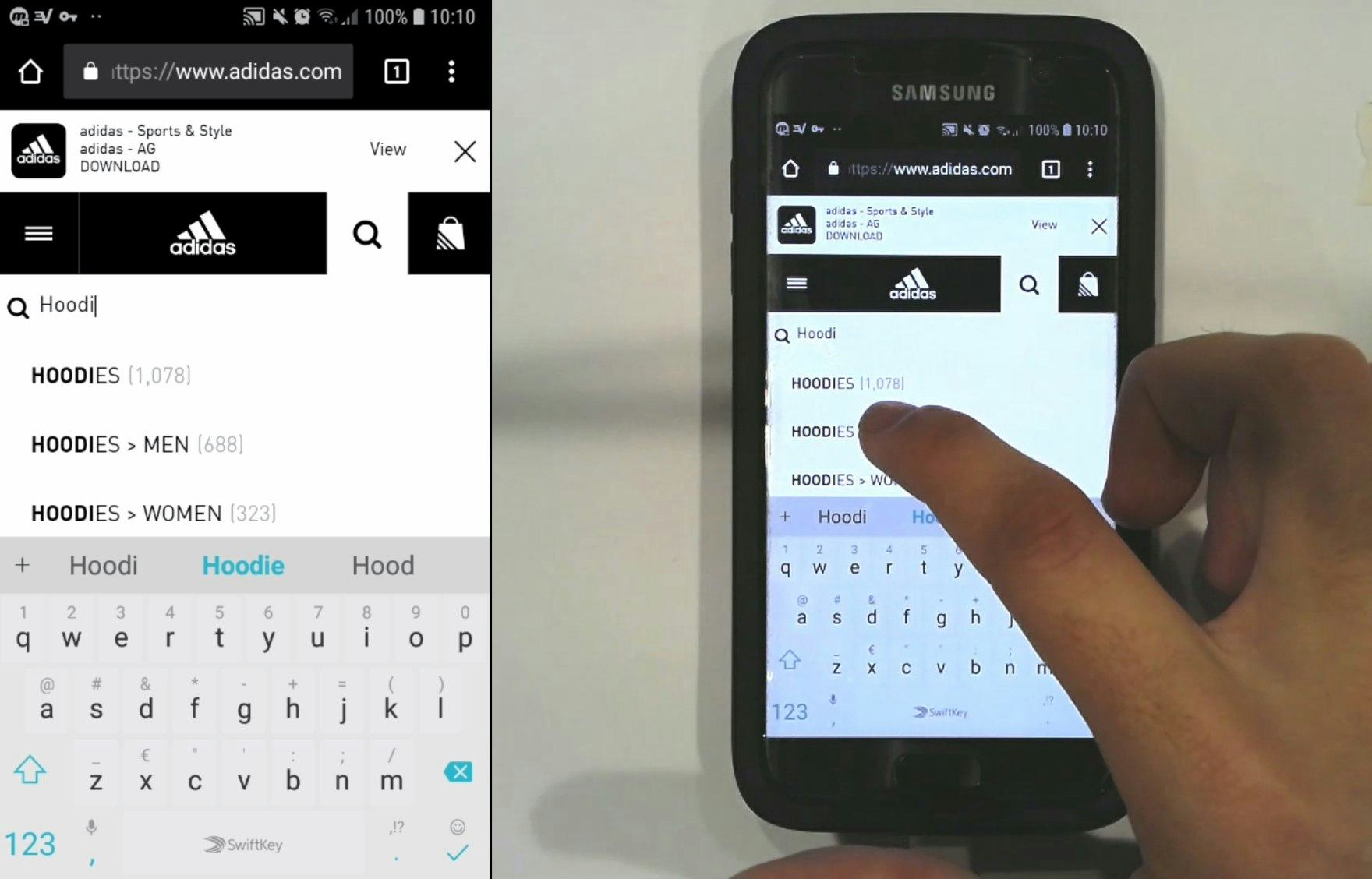

“Oh, this is handy. I was looking up ‘hoodies’ and they’re giving ‘Men’, ‘Women’. I like that.” A user at Adidas was seeking “hoodies” and was pleasantly surprised by the category scope options that helped him limit his search results in advance of submitting the query. The category scope suggestion allowed him to focus only on products in “Hoodies > Men”.

The overall goal of category scope suggestions in autocomplete is to help users restrict searches to a smaller subset of relevant results in advance.

When well implemented, category scope suggestions help users avoid having to wade through excessive and irrelevant results, ultimately saving time and helping them home in on the most relevant results more quickly.
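A minimal TypeScript sketch of how scope suggestions could be derived for a query suggestion: count the matching products per category and offer the few categories that hold most of the matches, skipping scopes so small they would produce an overly narrow list. The `Product` shape, the substring matching, and the limits are hypothetical simplifications. A real implementation would more likely reuse the search engine’s own facet counts.

```typescript
// Hypothetical catalog record (an assumption for illustration).
interface Product {
  title: string;
  categoryPath: string; // e.g. "Hoodies > Men"
}

interface ScopeSuggestion {
  query: string; // the query suggestion being scoped
  scope: string; // category to restrict the search to
  count: number; // number of matching products in that category
}

// Suggests up to `maxScopes` categories for `query`, largest first,
// ignoring categories with fewer than `minCount` matches.
function scopeSuggestions(query: string, products: Product[], maxScopes = 3, minCount = 2): ScopeSuggestion[] {
  const q = query.toLowerCase();
  const counts = new Map<string, number>();
  for (const p of products) {
    if (!p.title.toLowerCase().includes(q)) continue;
    counts.set(p.categoryPath, (counts.get(p.categoryPath) ?? 0) + 1);
  }
  return Array.from(counts.entries())
    .filter(([, count]) => count >= minCount)
    .sort((a, b) => b[1] - a[1])
    .slice(0, maxScopes)
    .map(([scope, count]) => ({ query, scope, count }));
}
```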

If seeking inspiration, consider looking closer at Walmart, J.C. Penney, and Best Buy.

For additional inspiration, see the Page Design tool where we have collected 170+ design examples of the Mobile Search Autocomplete.

Mobile Search: Results Logic & Guidance

When it comes to Mobile Search Results Logic & Guidance, we find that 62% of e-commerce sites perform below “decent”, and Results Logic & Guidance continues to be the worst-performing topic within Mobile On-Site Search.

That said, this is a notable improvement from 2020, when 73% were below “decent”.

In particular, there are 2 issues hindering sites’ Search Results Logic & Guidance performance.

6) 64% of Mobile Sites Don’t Suggest Alternate Queries and Paths

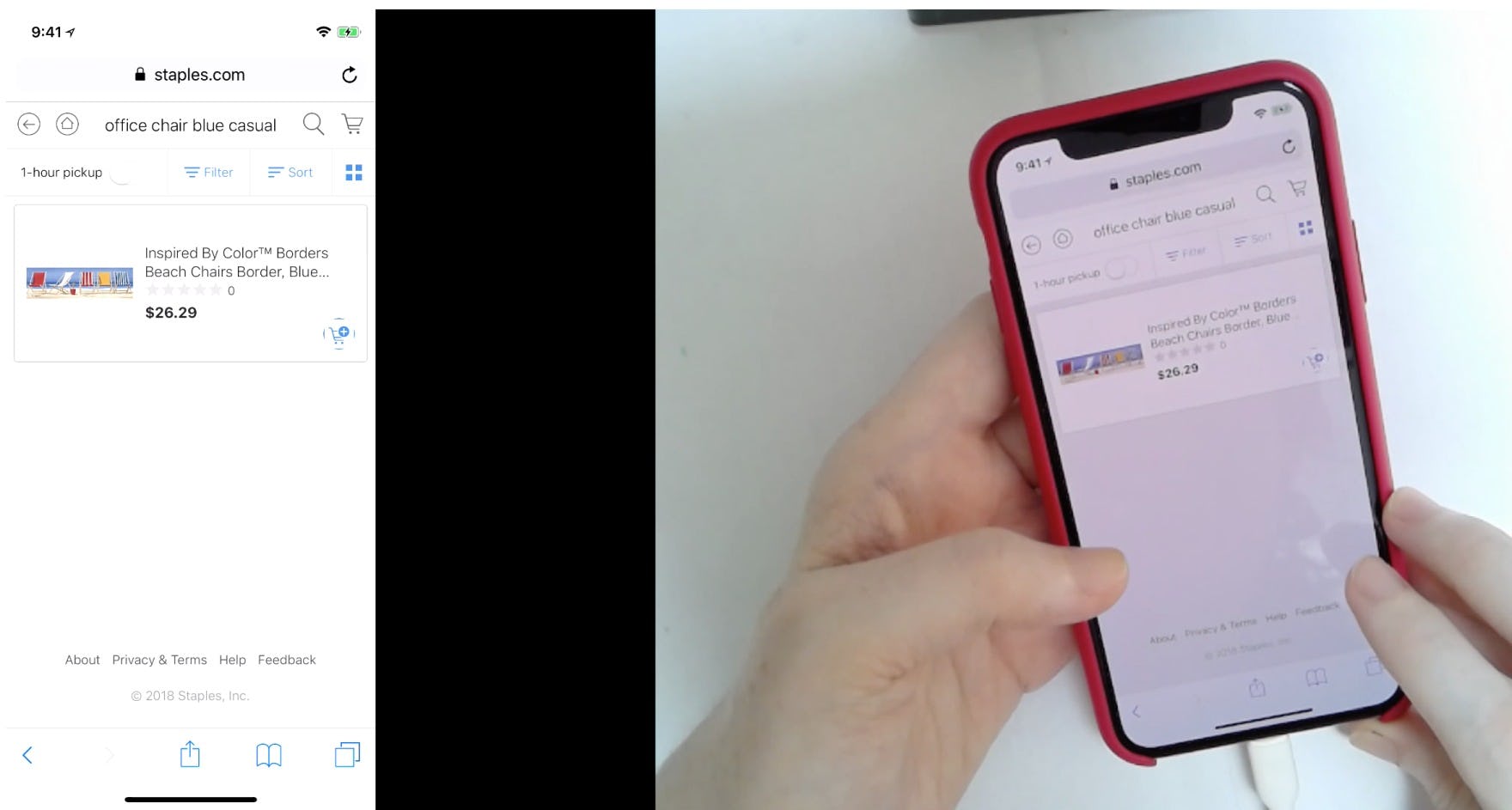

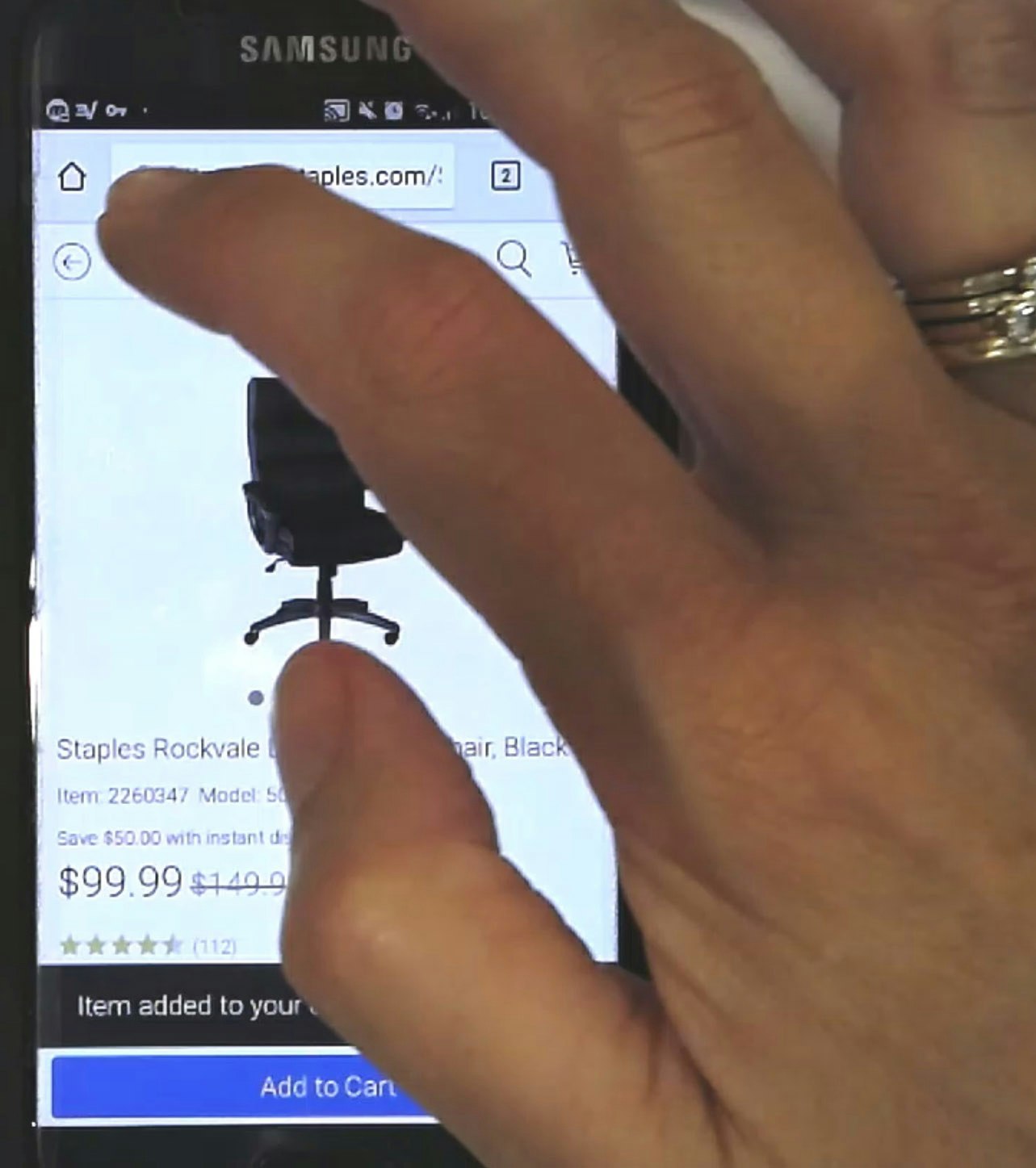

“In all honesty I’d probably already move on from this site because it’s super bizarre why it pulled up a painting when I typed ‘office chair’.” This participant on Staples searched for “office chair blue casual”, which returned only a single irrelevant result. Without any clear paths to more relevant related queries, she considered leaving the site.

Unless a site is narrowly targeted at users with a very high level of domain knowledge, many users will often use terminology that differs from the site’s.

Supporting synonyms in the search engine is a great start, but synonyms alone are often insufficient when the user’s terms are approximations or the user is searching for neighboring concepts.

Without clear suggestions for related and adjacent queries, some users will miss out on relevant search results and fail to find a suitable product.

On the other hand, testing reveals that exposing users to alternate queries, which are relevant to their original search but broad enough in scope to return quality results, gives users who might otherwise reach an impasse valid and reliable options to explore.

Alternate queries may point the user toward another (related) set of products, recommend the removal of a model name or brand from an overly specific query, or suggest searches for associated and compatible products.

In all cases, alternate queries help users recover from suboptimal search results by shifting or broadening the scope of their search.
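As one hypothetical illustration of the “broadening” case, the TypeScript sketch below drops one term at a time from a multi-term query and keeps only the variants that actually return results, ordered by result count. The `countResults` callback stands in for whatever the site’s search engine provides; it and `broadenQuery` are assumptions. Real alternate-query logic would typically also draw on related categories and query-log data.

```typescript
interface AlternateQuery {
  query: string;
  results: number;
}

// Returns up to `limit` broader variants of `query`, each with one term removed,
// ordered by how many results they return. `countResults` is a hypothetical
// hook into the site's search engine.
function broadenQuery(query: string, countResults: (q: string) => number, limit = 3): AlternateQuery[] {
  const terms = query.trim().split(/\s+/).filter((t) => t.length > 0);
  if (terms.length < 2) return []; // nothing left to drop
  const seen = new Set<string>();
  const variants: AlternateQuery[] = [];
  for (let i = 0; i < terms.length; i++) {
    const candidate = terms.filter((_, j) => j !== i).join(" ");
    if (seen.has(candidate)) continue; // skip duplicates from repeated terms
    seen.add(candidate);
    const results = countResults(candidate);
    if (results > 0) variants.push({ query: candidate, results });
  }
  return variants.sort((a, b) => b.results - a.results).slice(0, limit);
}
```

For an over-specific query like the Staples example, dropping “casual” or “office” may already be enough to reach a useful result set.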

7) 45% of Mobile Sites Don’t Autodirect or Guide Users to Matching Category Scopes

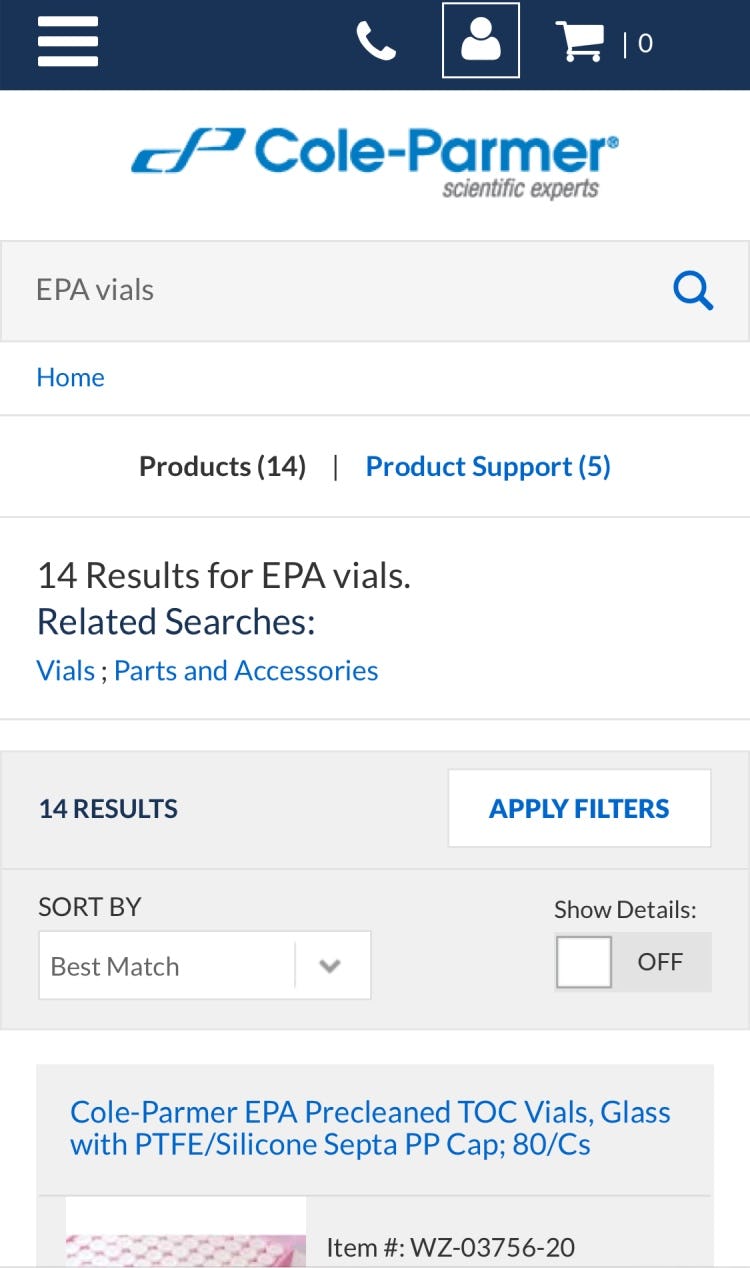

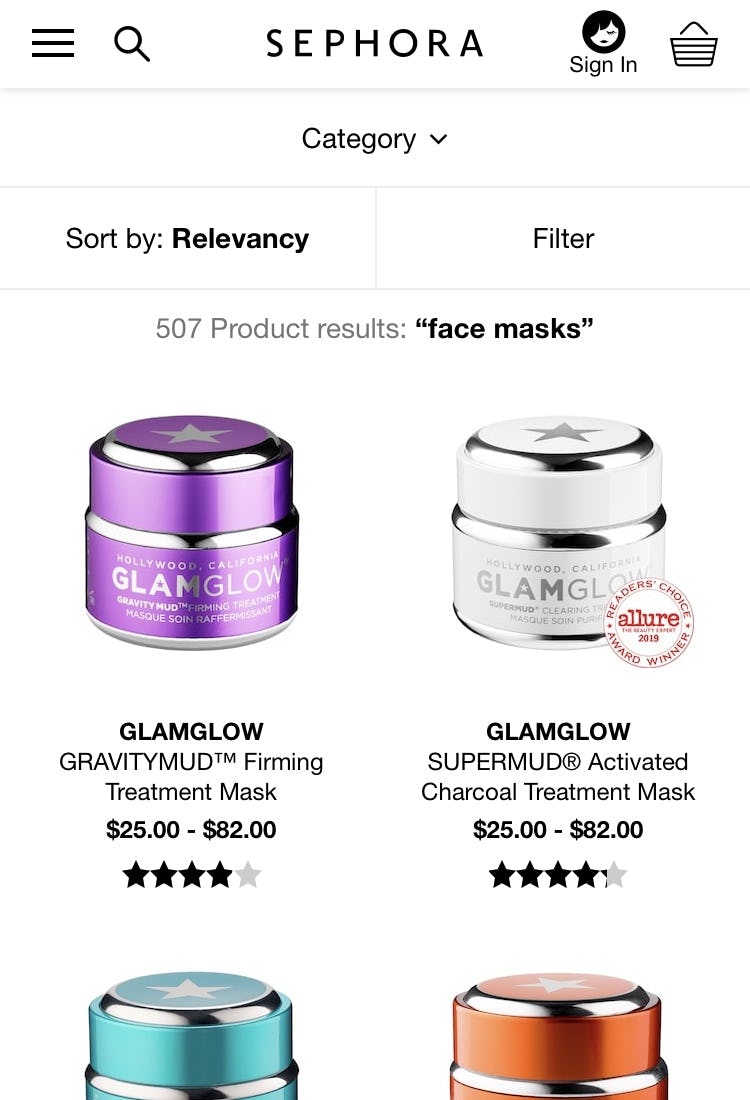

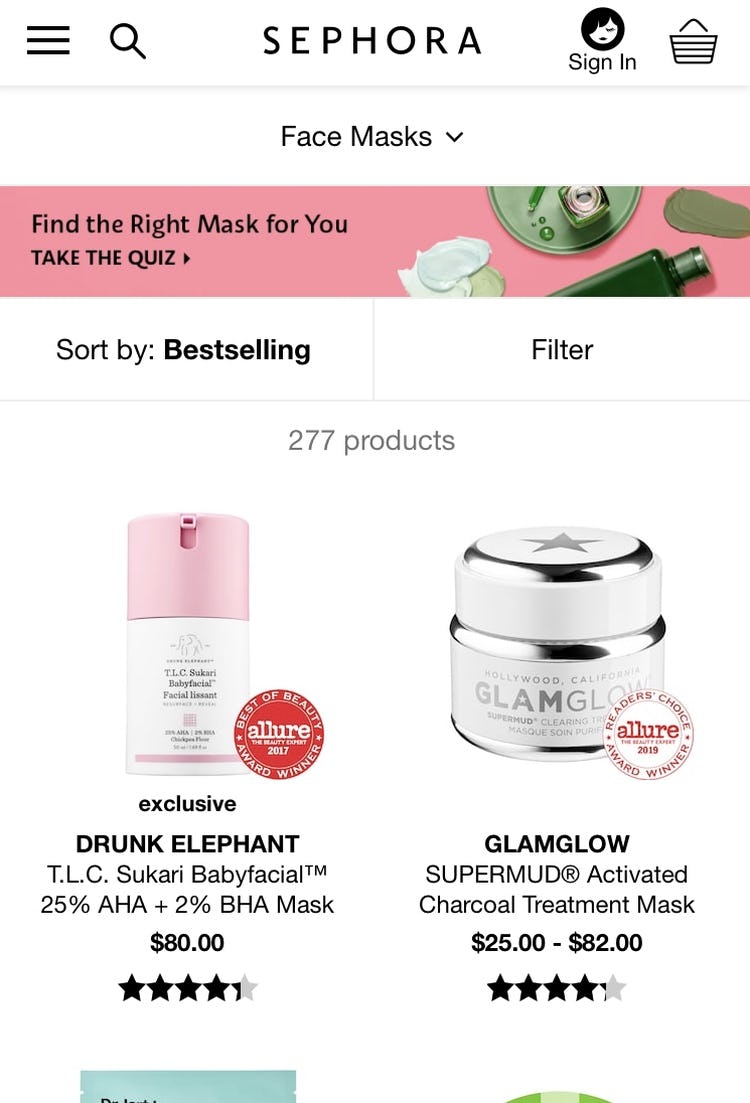

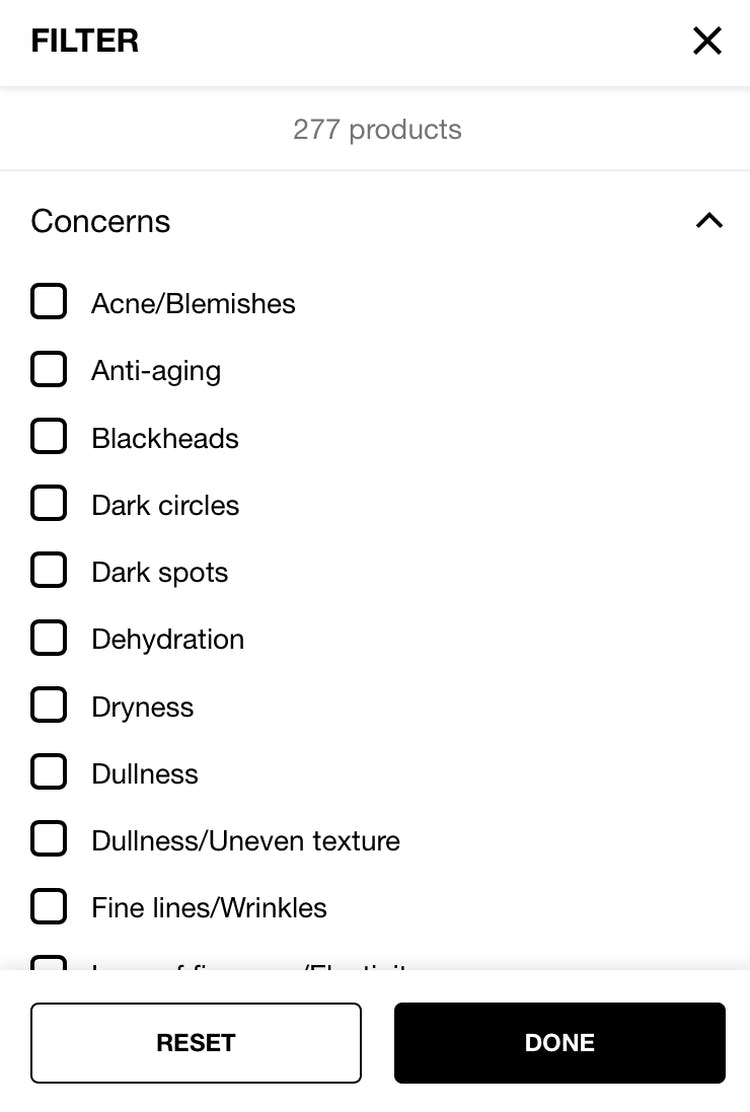

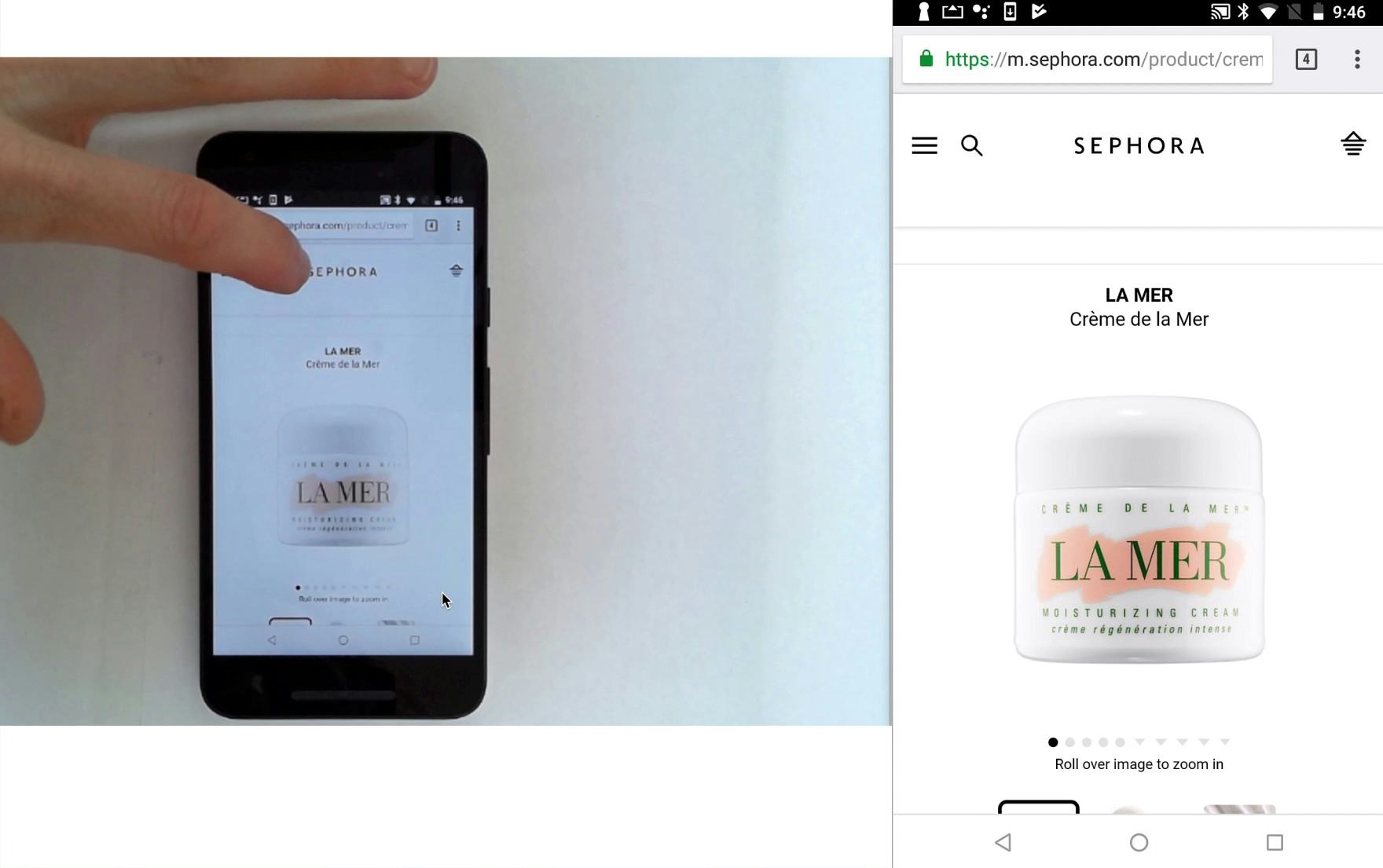

At Sephora, when searching for “face masks”, a query that precisely matches an available product subcategory, users receive almost twice the number of results (first image) compared to if they navigate to the “Face Masks” subcategory using the main navigation (second image). The difference in the number of items available indicates the search results include many irrelevant, or merely tangentially related, products. Furthermore, when viewing search results for “face masks” users can only filter by “Brand” and “Price”, but navigating to the “Face Masks” subcategory page reveals an additional key filter for skin “Concerns” (third image). Finally, the “Face Masks” subcategory page also includes a product-finder quiz (second image), which can also help direct users to suitable products, but which isn’t available on the search results page.

When users search for products, they frequently query on terms that either directly map or strongly relate to a scope or category — for example, searching for “laptops” at an electronics site with a “Laptops” subcategory.

Category-specific pages and results often feature benefits that standard search results pages lack, including clear subcategory navigation, contextual product filters, and links to relevant content such as product guides or finders.

However, on many sites, search users experience a different, subpar product results listing compared to users who navigate to the same scope or category using the global navigation.

Autodirecting users to categories or subcategories when there’s a 1:1 match with the user’s query, or guiding users on the results page to likely relevant categories or subcategories if there isn’t, ultimately makes it easier for users to navigate search results and find the products they’re looking for.
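The decision described above can be sketched as a small TypeScript routing function: normalize the query, redirect when it maps 1:1 to a category name or a known synonym, and otherwise return related categories to surface on the results page. The `CategoryRef` shape, the synonym lists, and the naive plural handling are hypothetical assumptions, not a production-grade matcher.

```typescript
// Hypothetical category record (an assumption for illustration).
interface CategoryRef {
  name: string;       // e.g. "Face Masks"
  url: string;        // e.g. "/skincare/face-masks"
  synonyms: string[]; // alternative terms that map 1:1 to this category
}

type SearchRouting =
  | { kind: "redirect"; url: string }
  | { kind: "results"; suggestedCategories: CategoryRef[] };

// Lowercases, collapses whitespace, and strips one trailing "s" as a crude
// stand-in for real stemming.
function normalize(text: string): string {
  const t = text.trim().toLowerCase().replace(/\s+/g, " ");
  return t.endsWith("s") ? t.slice(0, -1) : t;
}

function routeQuery(query: string, categories: CategoryRef[]): SearchRouting {
  const q = normalize(query);
  const exact = categories.find(
    (c) => normalize(c.name) === q || c.synonyms.some((s) => normalize(s) === q),
  );
  if (exact) return { kind: "redirect", url: exact.url };
  // No 1:1 match: guide users toward categories sharing a word with the query.
  const words = q.split(" ");
  const related = categories.filter((c) => normalize(c.name).split(" ").some((w) => words.includes(w)));
  return { kind: "results", suggestedCategories: related };
}
```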

For inspiration, Crate and Barrel, Hayneedle, and Williams Sonoma have “perfect” Search Results Logic & Guidance implementations.

Mobile Checkout: Validation Errors & Data Persistence

For Mobile Validation Errors & Data Persistence, 58% of sites perform “decent” or higher, while the rest suffer from somewhat severe usability issues.

This is despite our observation that sites with even a slightly subpar error-recovery experience risk causing many needless checkout abandonments, as users get confused and stuck to the point where they either can’t complete the checkout or don’t dare to.

While most mobile sites retain user-inputted data and credit card info, the user’s error-recovery experience remains an overlooked area in e-commerce UX.

In particular, the two issues below have been observed to trip up many test participants.

8) 64% of Mobile Sites Don’t Properly Introduce, Position, and Style Error Messages

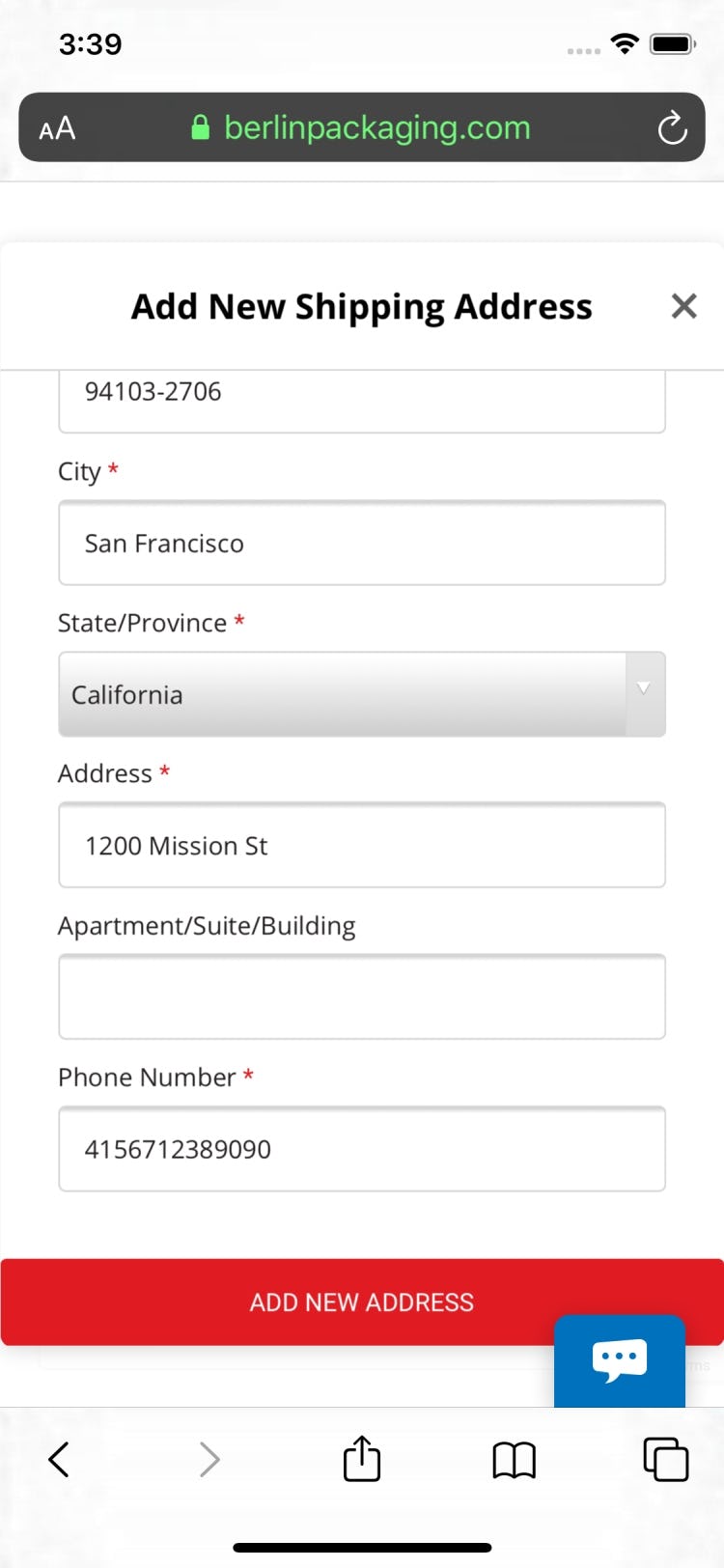

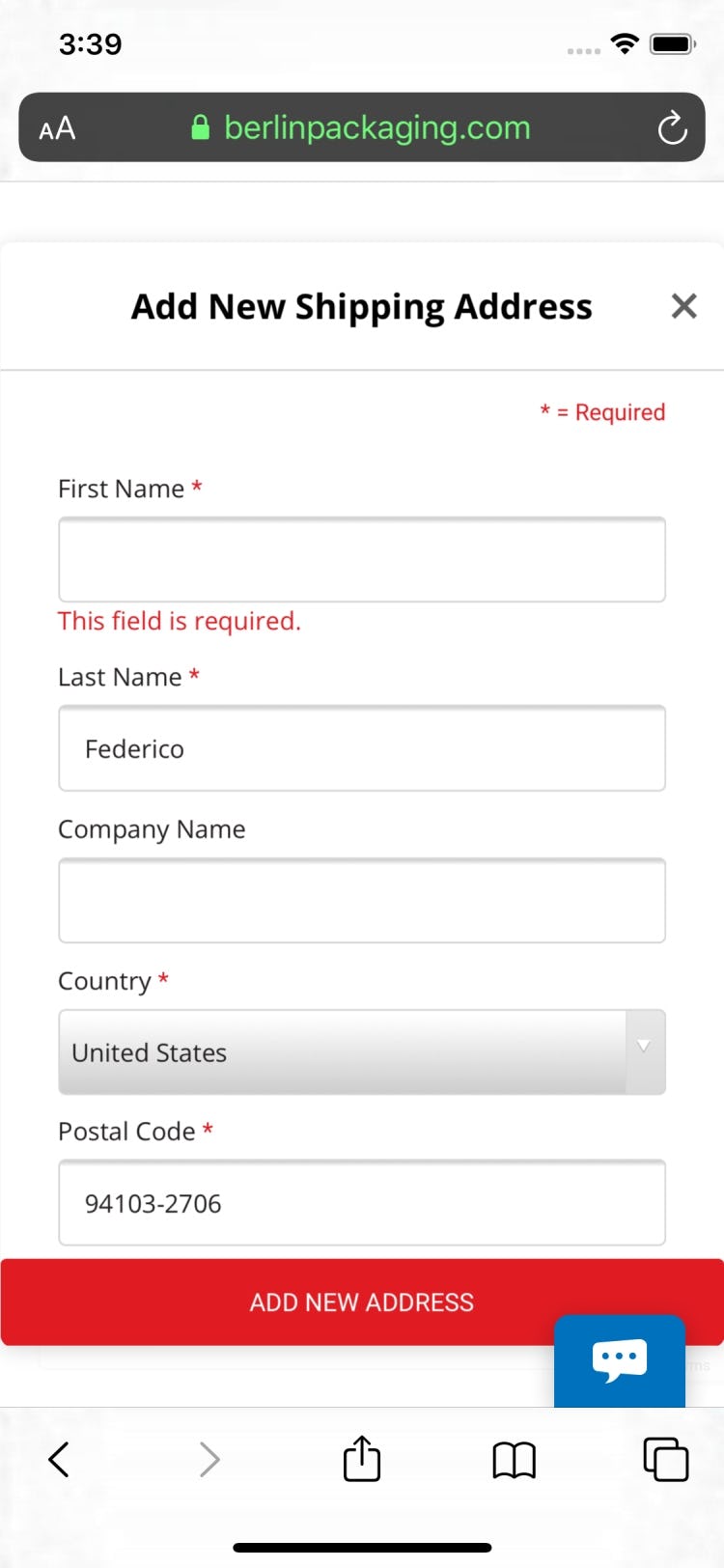

At Berlin Packaging, a user who submits the form with an error is simply left at the primary button (first image) — it’s unclear that an error has even occurred. Only when users scroll to the top of the form do they see the error message (second image).

During checkout testing, it was common for participants to run into errors.

While errors are more or less inevitable for at least some of a site’s users, what’s key is the user’s error-recovery experience.

The first step in users being able to resolve an error and proceed with their purchase is understanding that an error has even occurred, and what input or inputs caused the error.

Requiring users to hunt down the erroneous fields themselves not only frustrates them but was also observed to cause checkout abandonment, as users were unable to resolve the errors.

Moreover, errors are even harder to recover from on Mobile sites than on desktop sites, due to inherent Mobile constraints such as the small screen and the resulting lack of page overview.

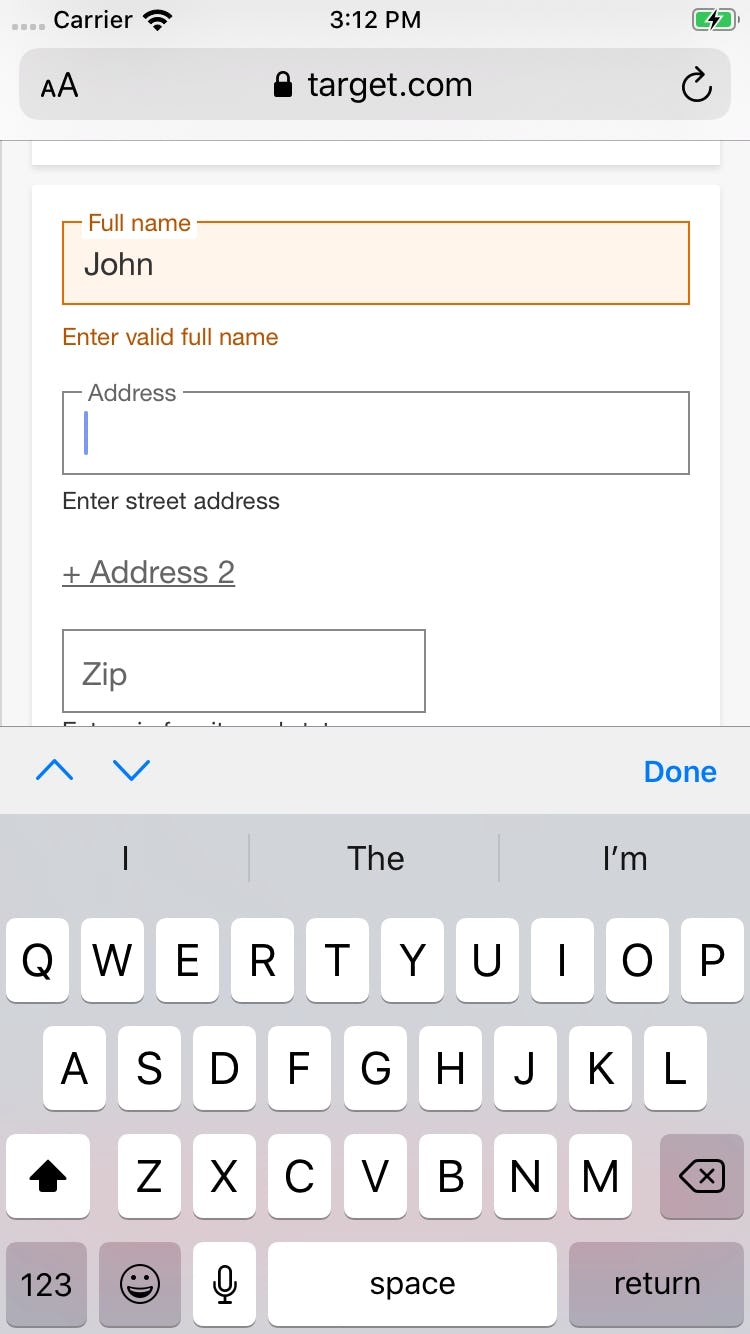

Target provides live inline validation (first image). In addition, after the user tries to submit the page, a general error message at the top of the screen lists all the fields that have issues (second image).

Our testing has revealed some very consistent patterns for how well-performing error messages should be positioned and styled.

Under all circumstances, the incorrect field must always be marked up, typically by using red field borders, a red field background color, or red arrows.

This will immediately grab a user’s attention, and is the conventional styling for erroneous form fields.

Additionally, the error message must always be displayed right next to the erroneous field to allow users to understand what went wrong and how to correct it. However, the exact implementation depends on how many errors there are on the page.

If there is only one error on a page, autoscroll can be used to bring the error into the user’s viewport.

But when there are multiple errors on pages taller than one viewport, it becomes a little more complicated.

We see during testing that simply scrolling users to the first error performs poorly, as it makes them likely to overlook the subsequent errors.

A better-performing technique is to take users to the top of the page and inject an error statement outlining the multiple errors that have been detected, and potentially what they are.

This is of course in addition to then highlighting each of the fields throughout the page, each with their own unique error message.
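These positioning rules can be captured in a small decision function. The TypeScript sketch below returns a plan that the page’s UI code (not shown) would carry out. The `FieldError` shape, `planErrorPresentation`, and the `pageFitsViewport` flag are hypothetical assumptions. The text above only discusses multiple errors on pages taller than one viewport, so the behavior for short pages (no scrolling, since all errors are visible) is an assumption too.

```typescript
// Hypothetical validation result for one field (an assumption).
interface FieldError {
  fieldId: string; // id of the erroneous input
  message: string; // specific explanation shown right next to the field
}

interface ErrorPresentation {
  highlightFields: string[];    // always mark every erroneous field (e.g. red border)
  inlineMessages: FieldError[]; // always show each message next to its field
  scrollTarget: "none" | "field" | "top";
  scrollToFieldId?: string;     // set when scrollTarget is "field"
  summary?: string;             // error statement injected at the top of the page
}

function planErrorPresentation(errors: FieldError[], pageFitsViewport: boolean): ErrorPresentation {
  const base = { highlightFields: errors.map((e) => e.fieldId), inlineMessages: errors };
  if (errors.length === 0) return { ...base, scrollTarget: "none" };
  if (errors.length === 1) {
    // A single error: autoscroll it into the viewport.
    return { ...base, scrollTarget: "field", scrollToFieldId: errors[0].fieldId };
  }
  if (pageFitsViewport) {
    // Assumption: every error is already visible, so no scrolling is needed.
    return { ...base, scrollTarget: "none" };
  }
  // Multiple errors on a tall page: scrolling to only the first error makes
  // users overlook the rest, so go to the top and summarize instead.
  return {
    ...base,
    scrollTarget: "top",
    summary: `Please correct the ${errors.length} fields marked below.`,
  };
}
```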

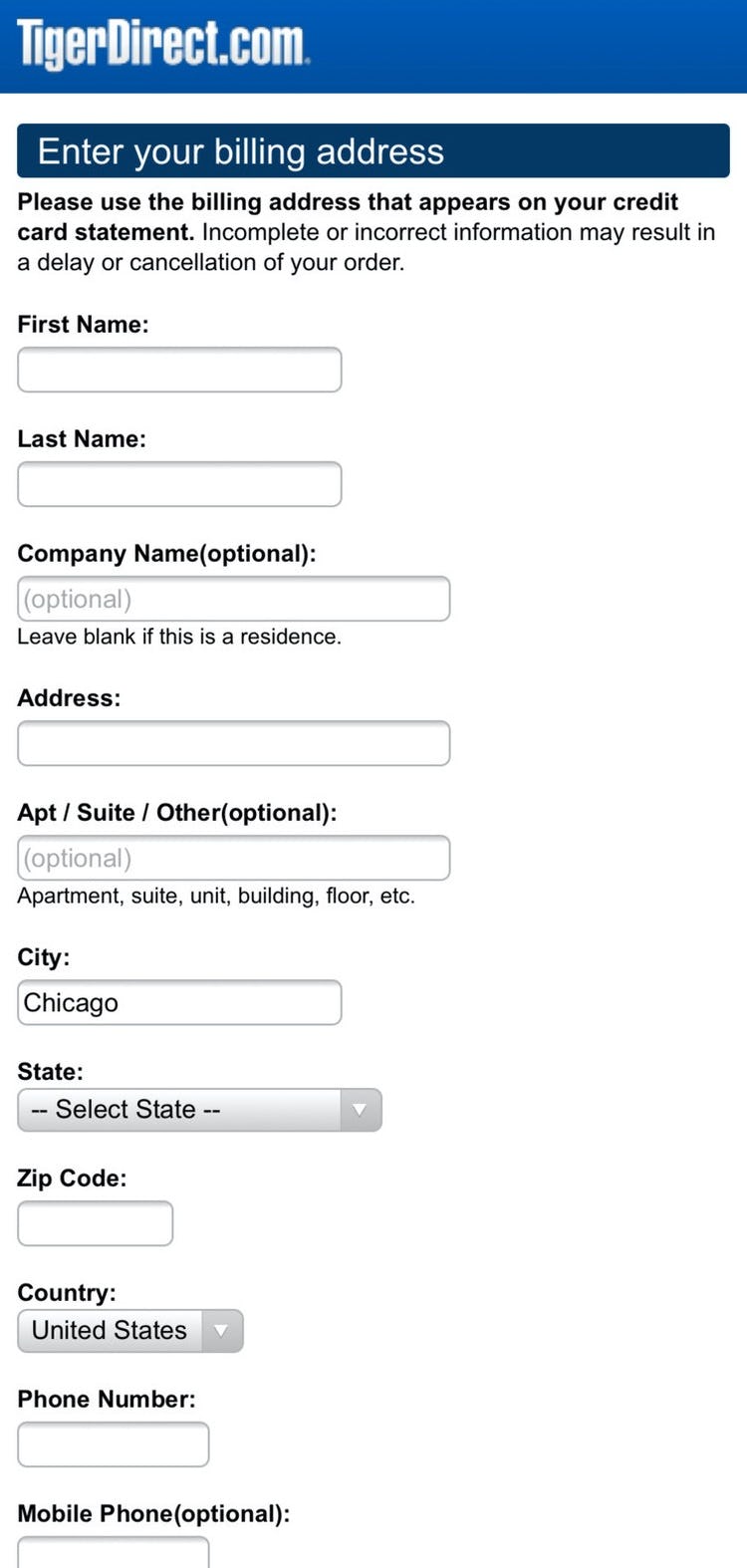

9) 18% of Mobile Sites Don’t Have an Address Validator or Address Lookup Feature

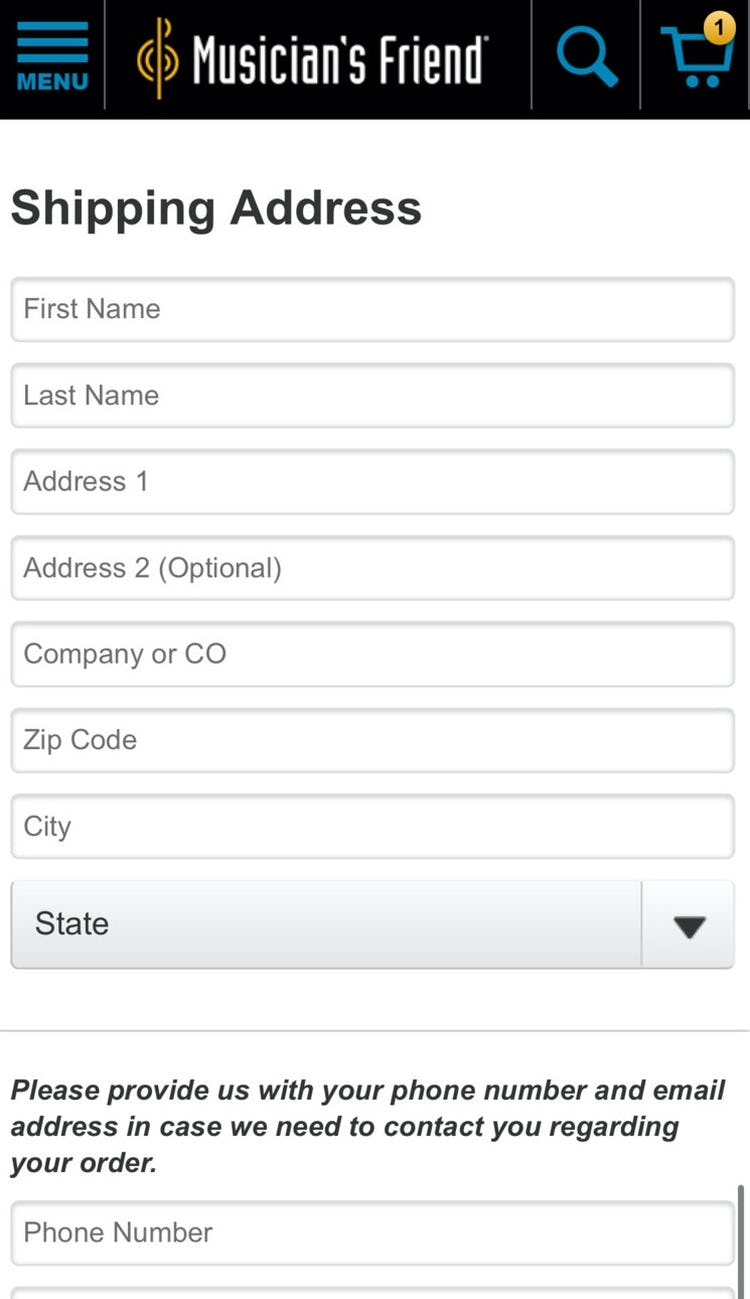

Neither Tiger Direct (first image) nor Musician’s Friend (second image) has an address validator, making it more difficult for users who make mistakes when entering their address to complete their order successfully.

Inaccurate addresses cause multiple, cascading issues.

Users may have problems receiving their orders, or they don’t receive them at all, or they don’t receive them on time.

Sites have to provide extensive customer support when there are delivery issues, and often face broken customer experiences and the resulting negative site reviews, with the end result often being a lost sale when an undelivered order is returned.

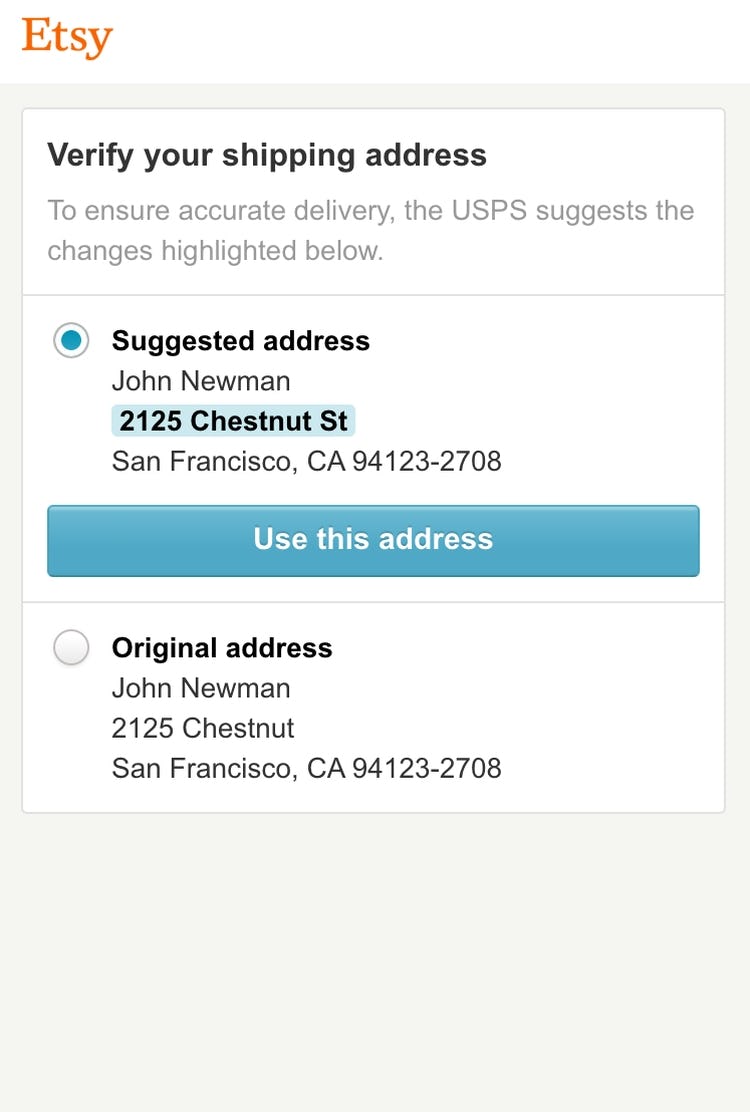

An address validator functions by querying an address database (e.g., the USPS) to ensure that the address the user typed matches the address the postal service has on file.

While not perfect, address validators do allow sites to perform a quick check of a user’s typed address.

During Mobile testing we’ve found that, due to keyboard autocorrect and the difficulty of typing on a small touch keyboard, users make errors far more frequently when entering their address on Mobile devices.

Furthermore, users on Mobile devices have more difficulties noticing errors due to the lack of page overview caused by the small screen.
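The validation flow can be sketched in TypeScript as follows: send the typed address to a lookup service, then either accept it, offer the corrected version on file for the user to confirm, or let the user edit or keep their address if nothing matches (validators aren’t perfect). The `AddressLookup` type is a hypothetical placeholder, not the USPS API or any real vendor’s API. It is injected so the flow can be exercised without network access.

```typescript
interface Address {
  street: string;
  city: string;
  state: string;
  zip: string;
}

// Hypothetical lookup service: resolves to the canonical address on file,
// or null when no matching address is found.
type AddressLookup = (typed: Address) => Promise<Address | null>;

type ValidationOutcome =
  | { status: "valid" }
  | { status: "suggest"; suggestion: Address } // ask the user to confirm the correction
  | { status: "not-found" };                   // let the user edit, or keep their address anyway

// Ignore case and extra whitespace when comparing address parts.
const norm = (s: string): string => s.trim().toUpperCase().replace(/\s+/g, " ");

function sameAddress(a: Address, b: Address): boolean {
  return (["street", "city", "state", "zip"] as const).every((k) => norm(a[k]) === norm(b[k]));
}

async function validateAddress(typed: Address, lookup: AddressLookup): Promise<ValidationOutcome> {
  const canonical = await lookup(typed);
  if (canonical === null) return { status: "not-found" };
  return sameAddress(typed, canonical) ? { status: "valid" } : { status: "suggest", suggestion: canonical };
}
```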

Mobile Sitewide Design: Sitewide Design & Interaction

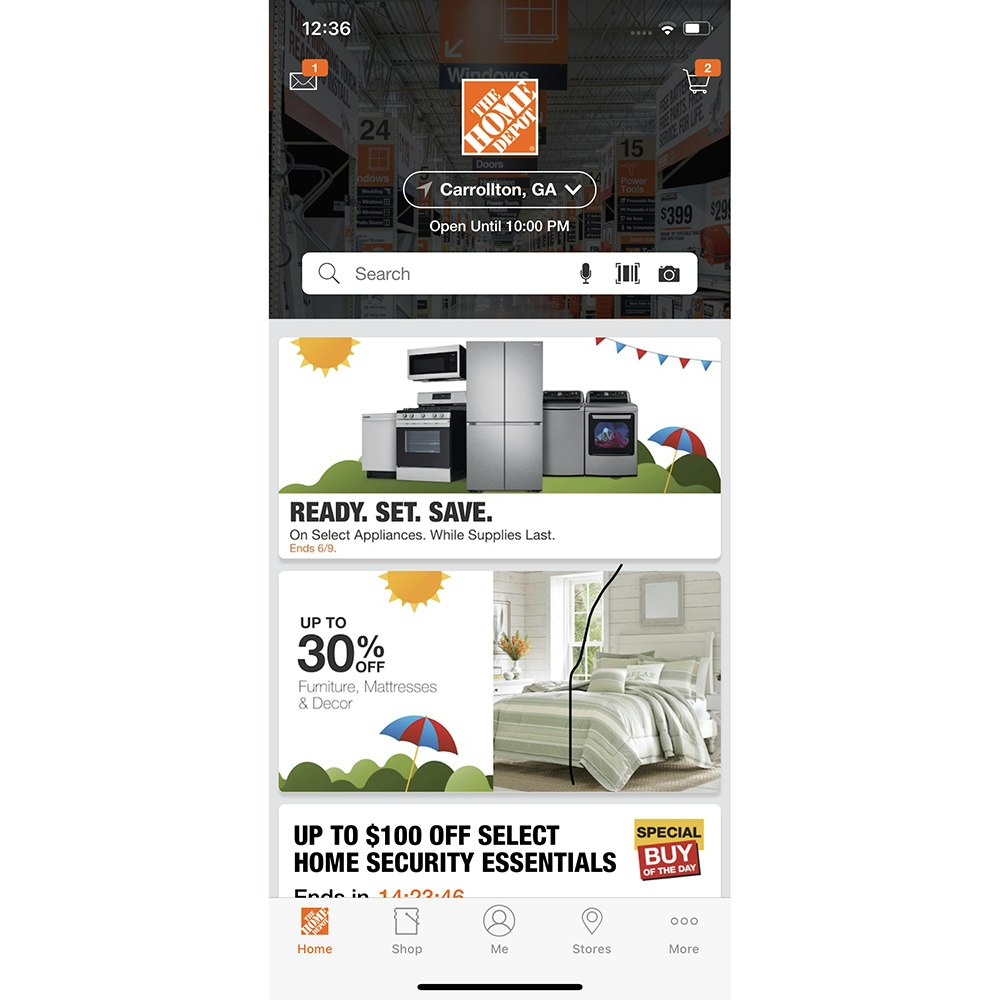

The average site’s Mobile Sitewide Features & Navigation performance is “mediocre”, with a fairly wide spread, and 42% of sites performing “decent” or higher.

Most sites are adept at common web conventions, such as correctly placing a prominent cart icon in the right-hand corner, breaking up footer links for scannability, having easily legible text on imagery, and minimizing bugs and overlay dialogs.

However, there are 2 implementations that sites still tend to get wrong when it comes to Mobile Sitewide Features & Navigation.

10) 84% of Mobile Sites Don’t Always Provide Load Indicators Whenever New Content Is Loading

When there are no load indicators, users on Mobile devices tend to very quickly assume that whatever action they’ve just attempted (e.g., tapping on a list item in the product list) was not registered.

As a result, users tap again, which often leads them to inadvertently tap some other content or simply restarts the loading process.

Consequences of not having load indicators can be minor — users tend to quickly recover after, for example, tapping on unintended content — but they are cumulative.

During testing, users were observed to have multiple issues related to missing load indicators while on the same site — a consistent drag throughout the user’s entire browsing experience.

Therefore, always provide high-contrast load indicators whenever new content is loading.

Moreover, to ensure the load indicators perform well, it’s also important to display the load indicator immediately after a user’s action (< 1 second), use a conventional design, and update the load indicator after 10 seconds.
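The timing guidance can be sketched as a small TypeScript wrapper around any asynchronous action. It assumes hypothetical `show`, `update`, and `hide` callbacks that render the indicator. The indicator is shown synchronously on the user’s action (well within the 1-second limit), its message is updated if loading is still in progress after 10 seconds, and it is always removed when the action settles.

```typescript
// Hypothetical rendering hooks for the indicator (an assumption).
interface LoadIndicatorUI {
  show: () => void;                  // render a high-contrast, conventional spinner
  update: (message: string) => void; // reassure the user that loading continues
  hide: () => void;
}

const UPDATE_AFTER_MS = 10000; // update the indicator after 10 seconds

async function withLoadIndicator<T>(ui: LoadIndicatorUI, action: () => Promise<T>): Promise<T> {
  ui.show(); // synchronous, so feedback appears immediately after the tap
  const timer = setTimeout(() => ui.update("Still loading, thanks for your patience..."), UPDATE_AFTER_MS);
  try {
    return await action();
  } finally {
    // Runs on success and on failure, so the indicator never gets stuck.
    clearTimeout(timer);
    ui.hide();
  }
}
```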

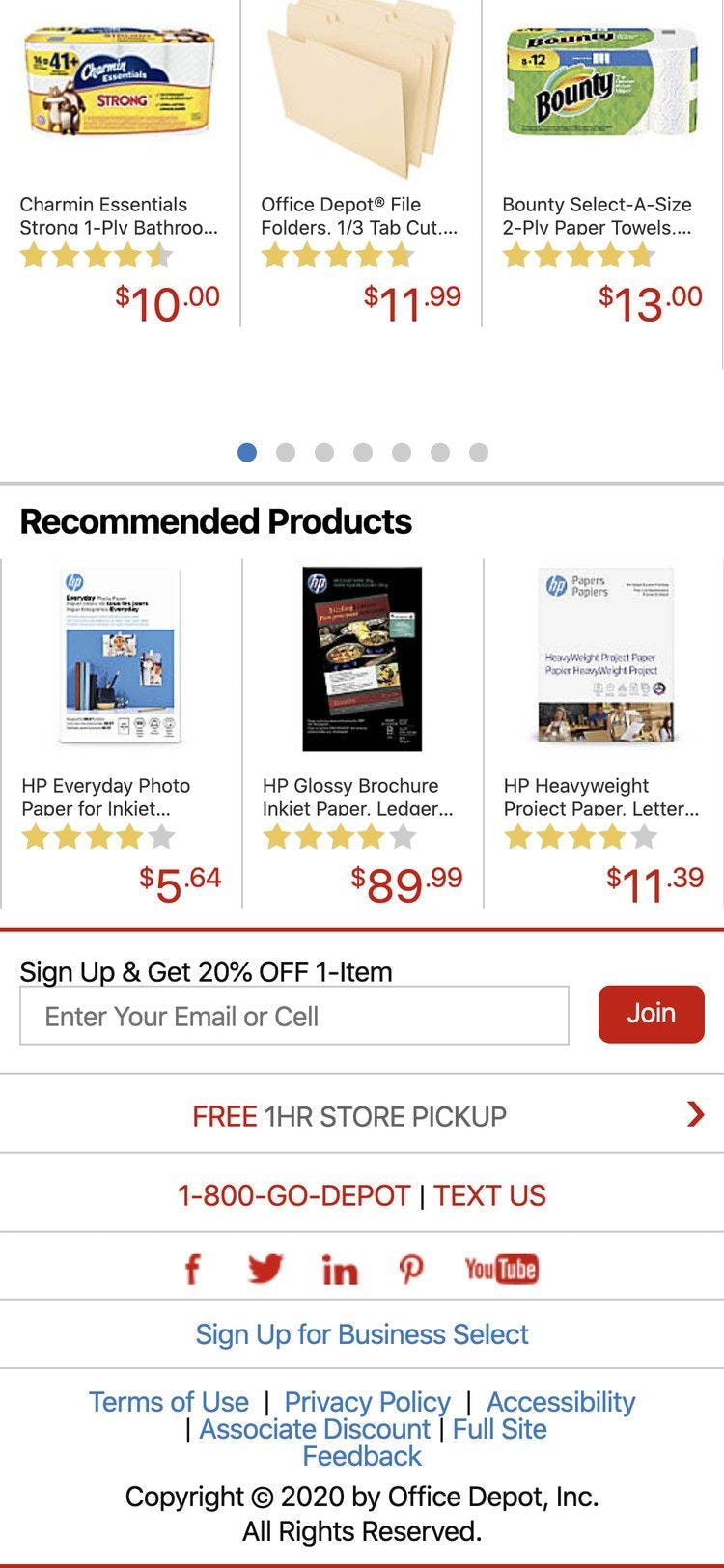

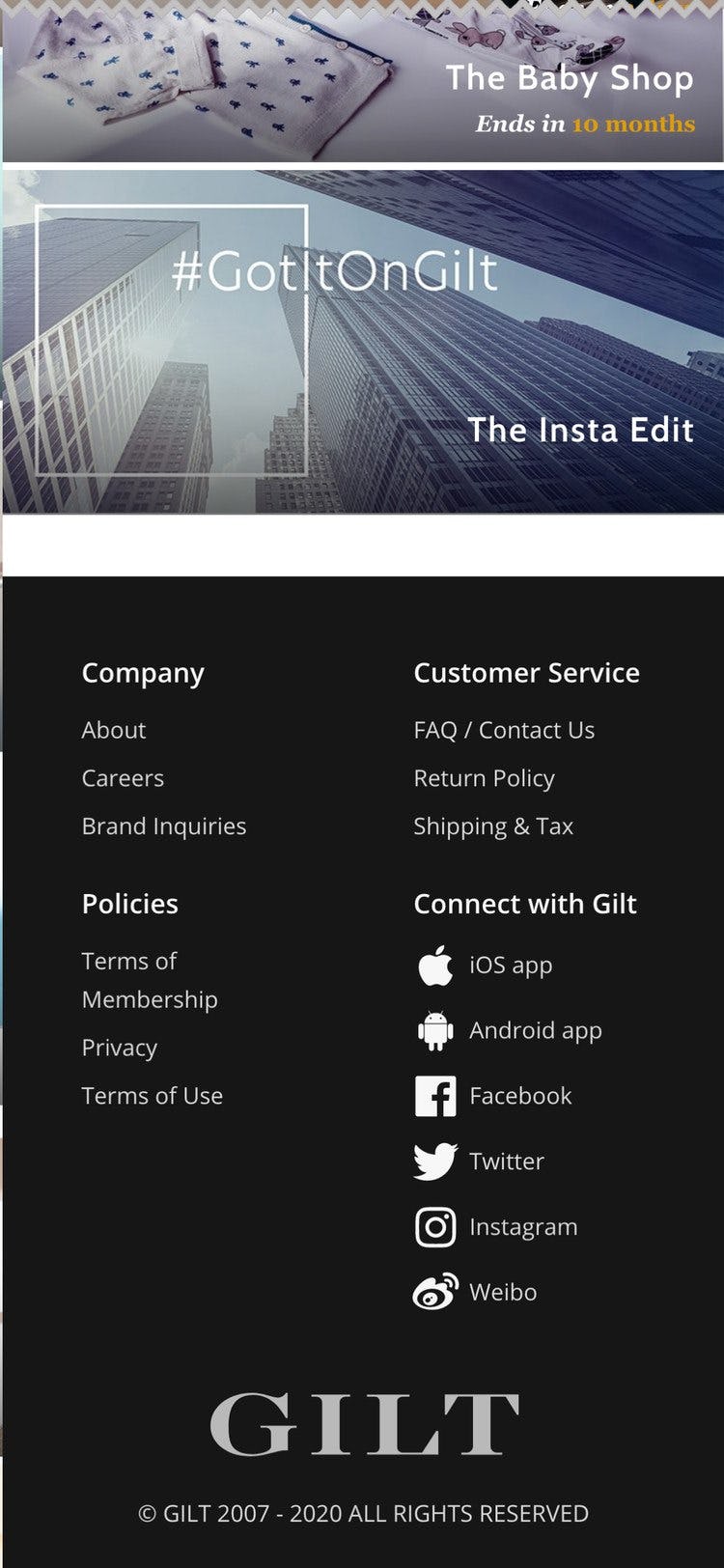

11) 54% of Mobile Sites Don’t Have Direct Links to “Return Policy” and “Shipping Info” in the Footer

In testing, we often observe that users need information about the site’s return policy or shipping options before they are able to make a purchase decision.

While this information can (and should) be available through multiple paths (for example, via the site header, on the product page, or via sitewide search), testing revealed that a subgroup of users will consistently head to the footer when seeking such information.

When it’s not there, users must go “information hunting”, which, depending on how easy it is to find the information elsewhere on the site, results in an often substantial delay in product browsing.

More seriously, if users have difficulty quickly accessing basic returns and shipping information, some may reconsider whether they want to use the site to make their purchase.

Providing links to the return policy and shipping information in the footer is a small and simple implementation that can greatly help users seeking information specific to their order.

Of all the benchmarked sites, IKEA and Sephora show a “perfect” performance in this topic.

Mobile Sitewide Design: Touch Interfaces

Mobile Touch Interfaces is one of the worst-performing topics for mobile websites: only 8% of sites offer “decent” or stronger performances, and 56% of sites have a “broken” performance.

On the positive side, almost all sites have optimized their content for mobile, including strong pinch-to-zoom functionality.

However, many sites struggle with these common violations:

12) 82% of Mobile Sites Don’t Provide Enough Space between Tappable Elements

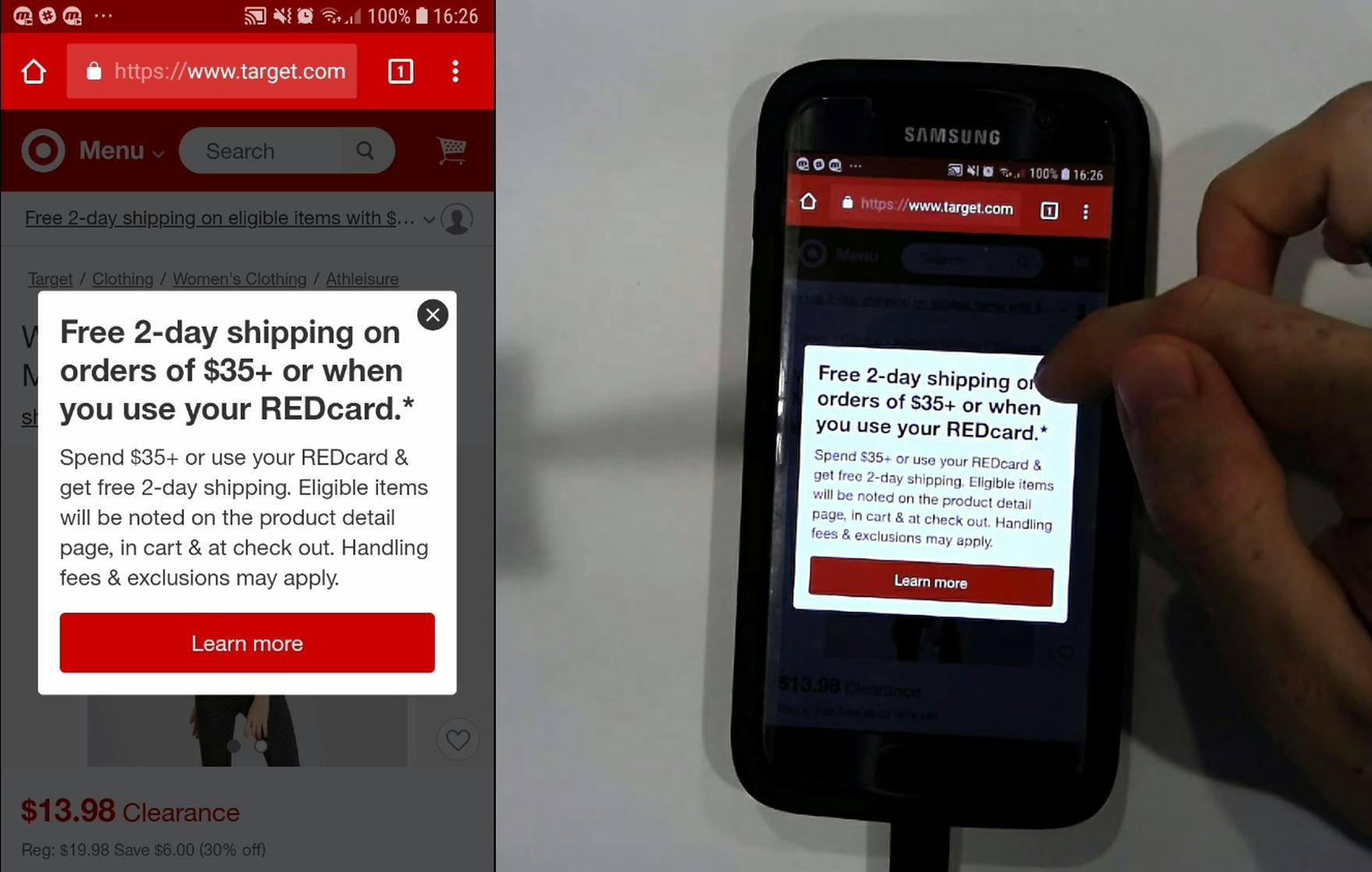

A participant at Target unintentionally tapped a free shipping offer, which was placed immediately adjacent to the element he was trying to tap (the profile icon) (first image). He was then shown the free shipping overlay (second image). Unintentional taps such as this can lead to anything ranging from mild annoyance and slight detours (as in this case) to abandonments if users become completely disoriented.

The issue of spacing between tappable elements is closely related to the size of tappable elements (see below).

Both issues often combine to make it difficult for the end user to reliably navigate the Mobile interface (yet it should be noted that the two issues of sizing and spacing are unique and can occur separately).

In short, inadequate spacing between elements will lead to mistaps, unintended detours, and even abandonments.

Furthermore, inadequate spacing has been a persistent issue during all our Mobile testing: it has been observed extensively ever since our first Mobile testing in 2013, and remains an issue even today.

So what is adequate spacing? Some device manufacturers’ design guidelines stipulate a minimum spacing of 2mm, and Baymard’s testing supports the same general recommendation.

However, where the consequences of unintentionally tapping an element are graver, spacing should be much larger (~10mm).

Finally, elements should never be placed right at the very edge of the screen, as that area is typically unresponsive and users will thus have difficulty selecting those elements.
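To make the 2mm and ~10mm figures concrete, here is a minimal TypeScript sketch that checks the edge-to-edge gap between two tap targets. It assumes targets are axis-aligned rectangles measured in CSS pixels, and that you know the device's CSS pixels per inch (its physical ppi divided by its device pixel ratio). The `Rect` type and all function names are hypothetical. The value of 163 CSS px per inch is only an illustrative figure for a 326 ppi display at a device pixel ratio of 2.

```typescript
// Sketch only: Rect and the helper names are hypothetical, and cssPxPerInch
// must be supplied for the device you target, because a CSS pixel's
// physical size varies between phones.

interface Rect {
  x: number; // left edge, CSS px
  y: number; // top edge, CSS px
  width: number; // CSS px
  height: number; // CSS px
}

const MM_PER_INCH = 25.4;

// Convert a length in CSS px to physical mm at the given density.
function cssPxToMm(px: number, cssPxPerInch: number): number {
  return (px / cssPxPerInch) * MM_PER_INCH;
}

// Shortest edge-to-edge distance between two rectangles (0 if they touch or overlap).
function edgeGapPx(a: Rect, b: Rect): number {
  const dx = Math.max(0, b.x - (a.x + a.width), a.x - (b.x + b.width));
  const dy = Math.max(0, b.y - (a.y + a.height), a.y - (b.y + b.height));
  return Math.sqrt(dx * dx + dy * dy);
}

// ~2mm general minimum; ~10mm where a mistap has graver consequences.
function hasAdequateSpacing(
  a: Rect,
  b: Rect,
  cssPxPerInch: number,
  highConsequence = false,
): boolean {
  const minMm = highConsequence ? 10 : 2;
  return cssPxToMm(edgeGapPx(a, b), cssPxPerInch) >= minMm;
}

// Example: 44 px targets at 163 CSS px per inch (326 ppi at devicePixelRatio 2).
const density = 326 / 2;
const profileIcon: Rect = { x: 0, y: 0, width: 44, height: 44 };
const shippingOffer: Rect = { x: 52, y: 0, width: 44, height: 44 }; // 8 px gap, about 1.2mm
const spacedIcon: Rect = { x: 60, y: 0, width: 44, height: 44 }; // 16 px gap, about 2.5mm
const tooClose = !hasAdequateSpacing(profileIcon, shippingOffer, density); // true
const okSpacing = hasAdequateSpacing(profileIcon, spacedIcon, density); // true
```

Measuring the gap in physical millimetres rather than raw CSS pixels matters because the same pixel gap covers a different physical distance on different devices.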

13) 88% of Mobile Sites Don’t Make Tappable Elements Sufficiently Large

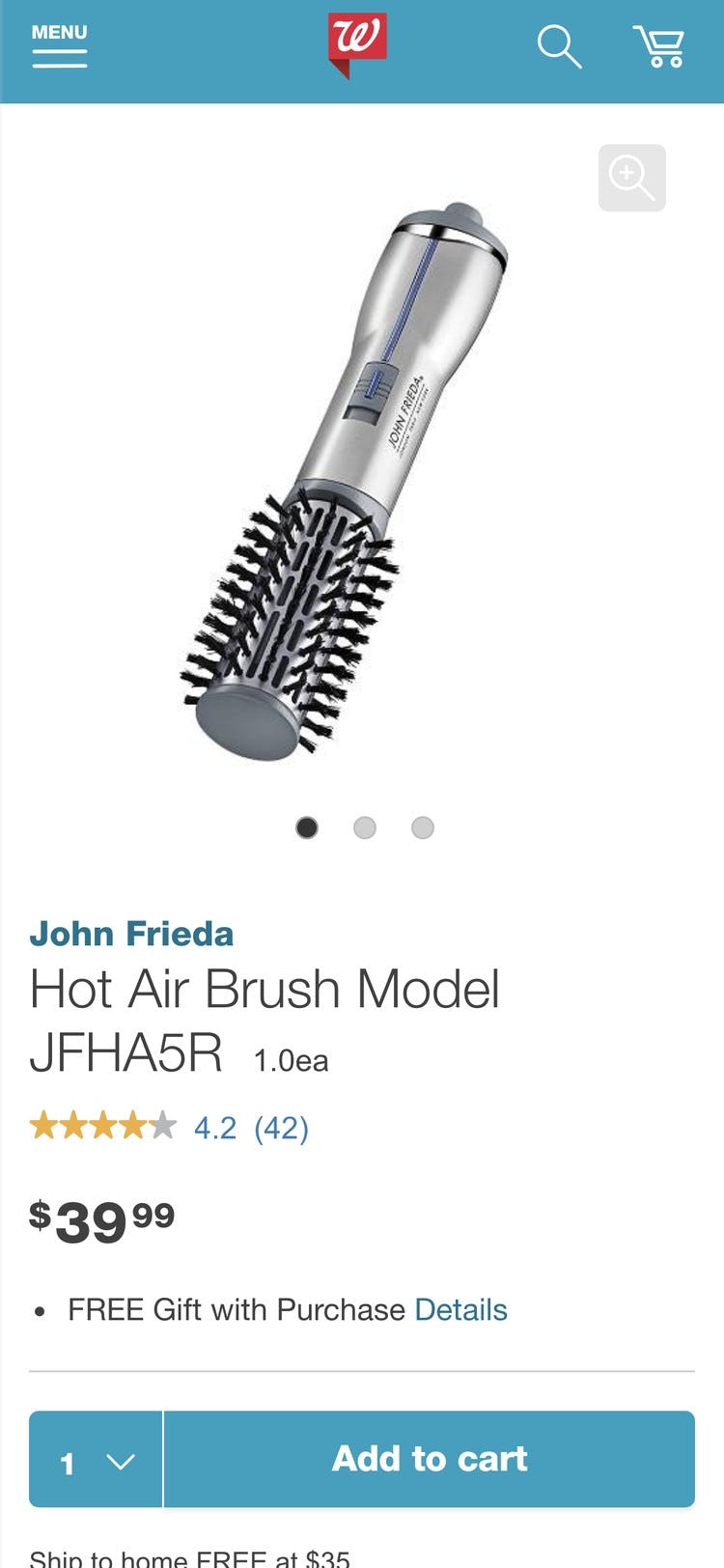

A participant at Sephora struggled to tap the search icon, making several attempts before tapping directly on the magnifying glass. The hit area of the search icon is ~3.3mm x 3.6mm. Note that the main menu and shopping cart icons are similarly undersized.

Although hit area sizing is a Mobile design basic, we repeatedly observe sites implementing elements and links with overly small hit areas.

As with inadequate spacing, the disruption to the user can range from mild annoyance at having to tap multiple times before hitting the right spot, to severe frustration and abandonment if they mistap and end up in another area of the site or lose data (e.g., during checkout).

Yet the solution is straightforward: make hit areas at least 7mm x 7mm (measured on the smartphone display).
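The 7mm x 7mm minimum can likewise be translated into CSS pixels for a given display. The TypeScript sketch below takes the device's CSS pixels per inch (physical ppi divided by device pixel ratio) as an assumed input, and its function names are illustrative rather than from any library. At an illustrative 163 CSS px per inch, 7mm works out to about 44.9 px, which rounds up to 45 px.

```typescript
// Sketch only: cssPxPerInch is an assumed input (physical ppi / devicePixelRatio);
// the helper names are hypothetical.

const MM_PER_INCH = 25.4;
const MIN_HIT_AREA_MM = 7; // minimum size per side, measured on the display

// Smallest whole number of CSS px spanning at least `mm` millimetres.
function minCssPx(mm: number, cssPxPerInch: number): number {
  return Math.ceil((mm / MM_PER_INCH) * cssPxPerInch);
}

// True if a hit area of widthPx x heightPx meets the minimum on both axes.
function meetsMinHitArea(
  widthPx: number,
  heightPx: number,
  cssPxPerInch: number,
): boolean {
  const minPx = minCssPx(MIN_HIT_AREA_MM, cssPxPerInch);
  return widthPx >= minPx && heightPx >= minPx;
}

// Example: 326 ppi at devicePixelRatio 2 gives 163 CSS px per inch.
const density = 326 / 2;
const requiredPx = minCssPx(MIN_HIT_AREA_MM, density); // 45
const smallIcon = meetsMinHitArea(24, 24, density); // false
const paddedIcon = meetsMinHitArea(48, 48, density); // true
```

Padding is part of an element's clickable box. This means an icon's hit area can usually be enlarged with padding without enlarging the icon glyph itself.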

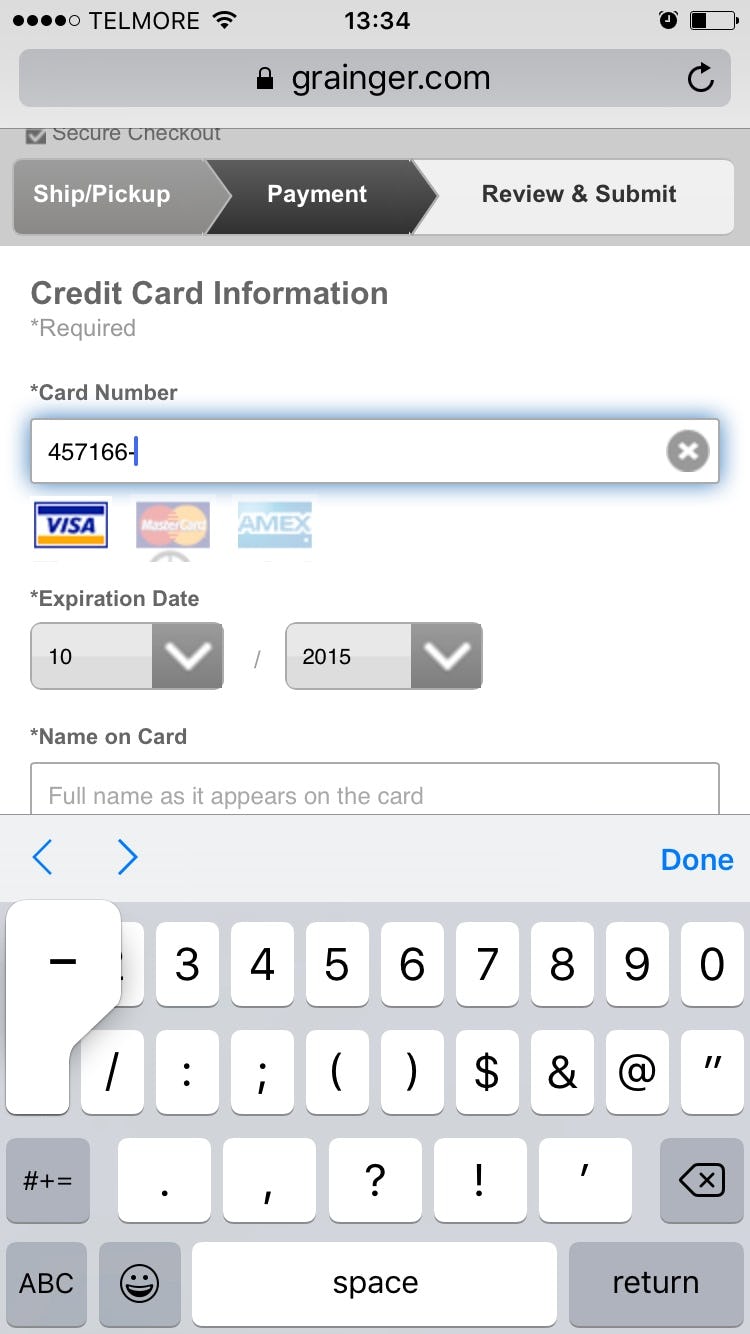

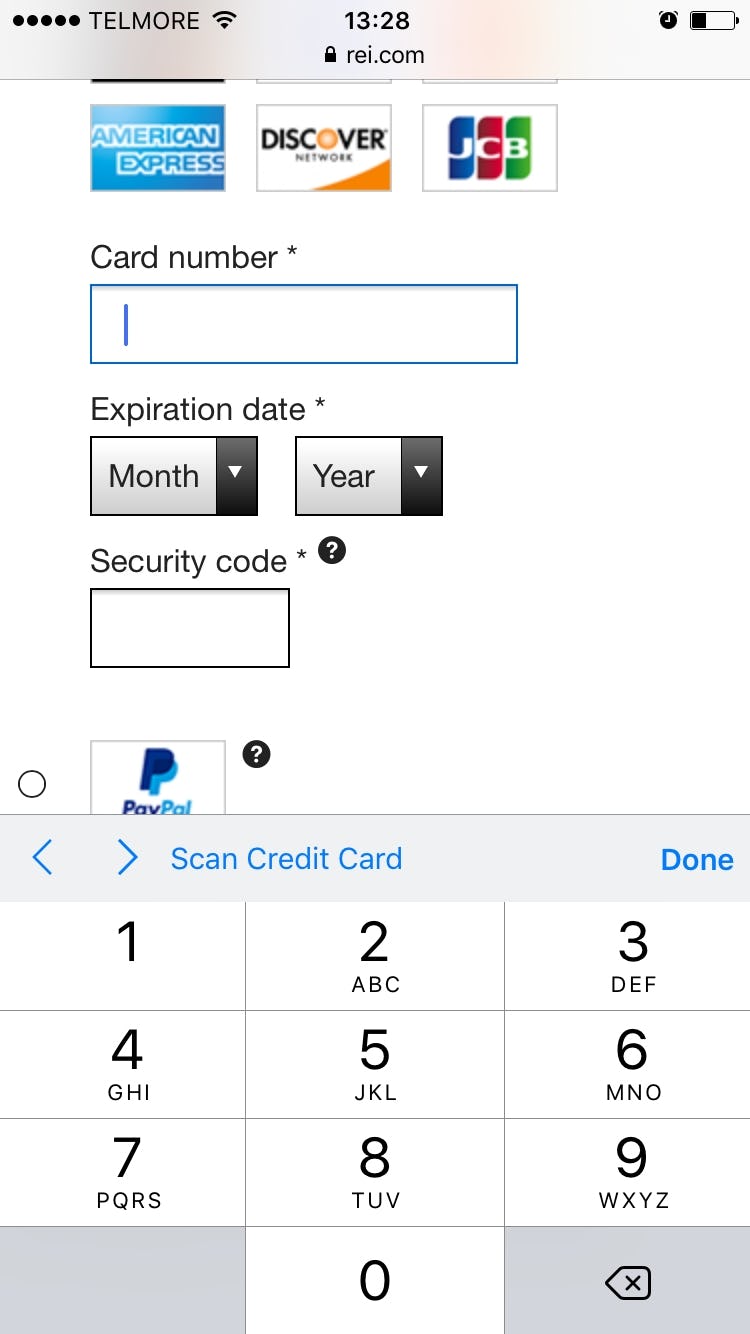

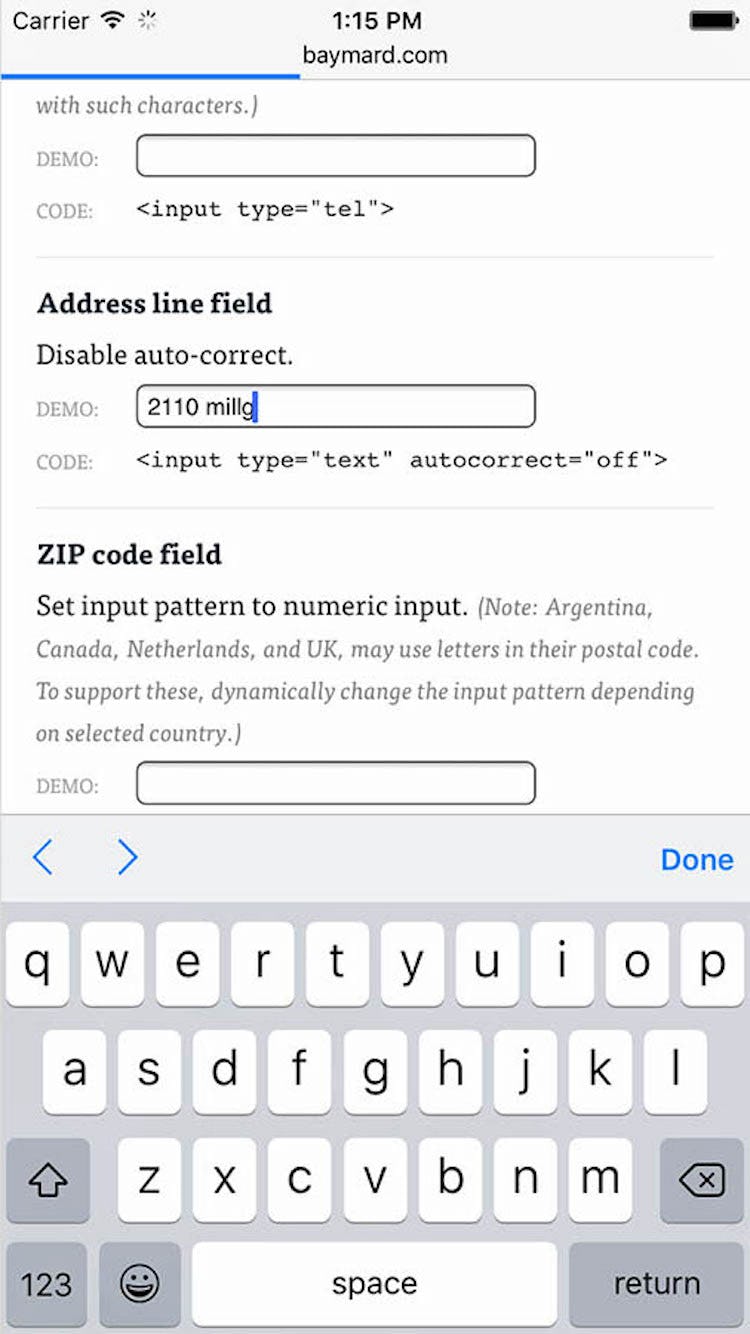

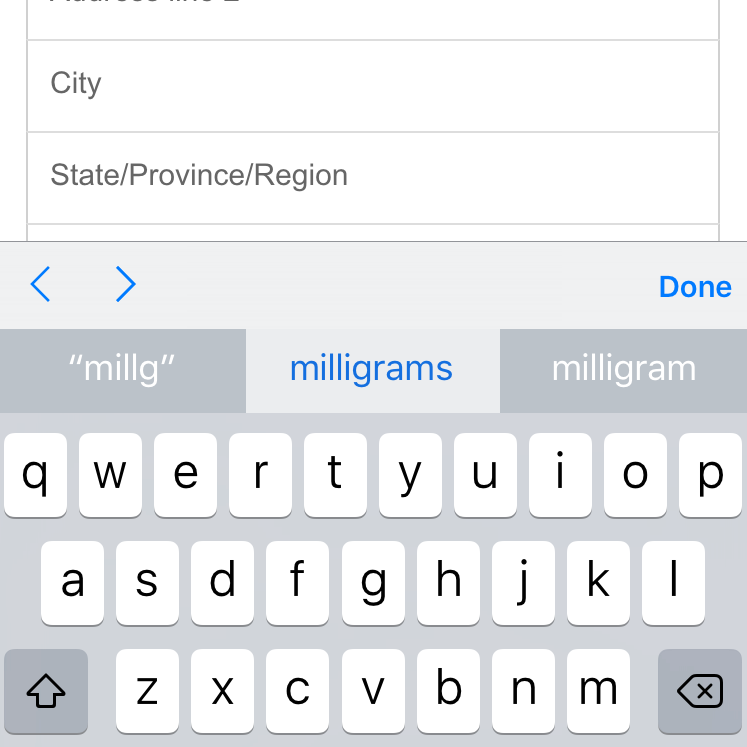

14) 65% of Mobile Sites Don’t Use the Right Keyboard Layout for Relevant Fields

By changing an attribute or two in the code of the input fields, you can instruct a user’s phone to automatically show a specific type of keyboard that is optimized for the requested input.

For example, you can invoke a numeric keyboard for the credit card field, a phone keyboard for the user’s phone number, and an email keyboard for their email address.

This saves the user from having to switch from the standard keyboard layout and, in the case of numeric inputs, minimizes typos, as these specialized keyboards have much larger keys that reduce the chance of accidental taps.

Technically, there are a few different ways to invoke numeric keyboard layouts, and the layouts themselves differ slightly, with somewhat different behaviors across platforms (iOS, Android, etc.).

In general, three HTML attributes can invoke numeric keyboard layouts: the type, inputmode, and pattern attributes.

For example, for numeric fields use:

```html
<input type="text" inputmode="decimal" pattern="[0-9]*" autocorrect="off" />
```

For a complete list of field and code combinations for all field types commonly found in a checkout flow, see baymard.com/labs/touch-keyboard-types.
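A minimal TypeScript sketch of keeping keyboard hints in one place for a checkout form and rendering them into `<input>` tags. The field kinds and helper names are hypothetical, while the attributes themselves (`type`, `inputmode`, `pattern`) are standard HTML. Unlike the example above, this sketch uses `inputmode="numeric"` (a digits-only keypad) for the card number instead of `"decimal"`, since a card number needs no decimal separator.

```typescript
// Hypothetical checkout field kinds; the names are illustrative only.
type FieldKind = "cardNumber" | "phone" | "email";

type InputAttrs = { [attribute: string]: string };

// Standard HTML attributes that hint which on-screen keyboard to show.
// The exact layout each produces still varies between iOS and Android.
const KEYBOARD_ATTRS: Record<FieldKind, InputAttrs> = {
  // Digits-only keypad via inputmode; pattern is the attribute mentioned above.
  cardNumber: { type: "text", inputmode: "numeric", pattern: "[0-9]*" },
  phone: { type: "tel" }, // phone keypad
  email: { type: "email" }, // email-optimized keyboard
};

// Escape characters that would break out of a double-quoted attribute value.
function escapeAttr(value: string): string {
  return value.replace(/&/g, "&amp;").replace(/"/g, "&quot;");
}

// Build an <input> tag string; attributes keep their insertion order.
function renderInput(name: string, kind: FieldKind): string {
  const attrs: InputAttrs = { name: name, ...KEYBOARD_ATTRS[kind] };
  const parts = Object.keys(attrs).map(
    (key) => `${key}="${escapeAttr(attrs[key])}"`,
  );
  return `<input ${parts.join(" ")} />`;
}

// Example (the name value is hypothetical):
const cardField = renderInput("cc-number", "cardNumber");
// '<input name="cc-number" type="text" inputmode="numeric" pattern="[0-9]*" />'
```

Centralizing the mapping this way means each field kind gets the same keyboard hints wherever it appears in the checkout flow.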

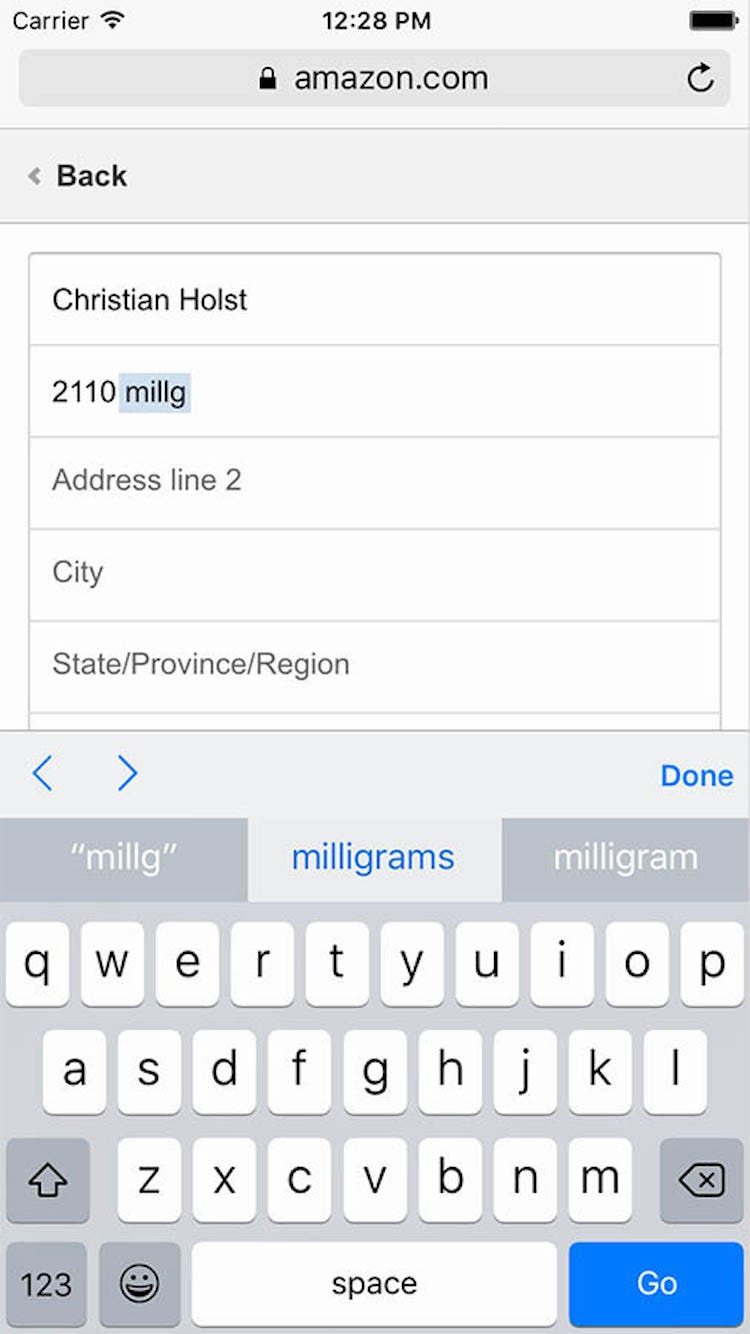

15) 89% of Mobile Sites Don’t Disable Mobile Keyboard Autocorrect When Appropriate

Autocorrect is one of several mobile features that can assist (or, in some cases, hinder) a user’s typing, and is enabled or disabled in their device keyboard settings.

When enabled, it is supposed to automatically correct misspelled words as they are typed or submitted (e.g., correcting “camra” to “camera”).

However, autocorrect often works very poorly for abbreviations, street names, email addresses, person names, and similar words that are not in the dictionary.

Throughout multiple years of Mobile usability testing, we’ve observed the keyboard autocorrect feature cause significant usability issues and result in a great deal of erroneous data being submitted.

That said, typing on mobile is quite difficult, and autocorrect does prove very helpful when it corrects invalid input to valid input.

Therefore, autocorrect shouldn’t be disabled on all fields.

Instead, use discretion and disable it on fields where the autocorrect dictionaries are weak.

This typically includes names of various sorts (e.g., street, city, person names) and other identifiers (e.g., email address).

Notice how Amazon’s mobile site hasn’t disabled autocorrect for the address field, causing users’ valid street name entries to be overwritten by keyboard autocorrect.

However, keyboard autocorrect can be disabled, ensuring that what users type is not overwritten, as seen here.

You can disable keyboard autocorrect by adding the autocorrect attribute to the input tag and setting it to “off”, like this:

```html
<input type="text" autocorrect="off" />
```
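One way to apply this discretion consistently is to decide, per field kind, whether to emit `autocorrect="off"`. In this hypothetical TypeScript sketch, names and identifiers (person names, street, city, email) turn autocorrect off. Free-text fields such as a gift message omit the attribute, so the user's own keyboard setting applies. The field kinds, including the gift-message and order-notes examples, are assumptions for illustration, not a list from the benchmark.

```typescript
// Hypothetical field kinds for a checkout form.
type FieldKind =
  | "firstName"
  | "lastName"
  | "streetAddress"
  | "city"
  | "email"
  | "giftMessage"
  | "orderNotes";

// Names and identifiers, where autocorrect dictionaries tend to be weak.
const AUTOCORRECT_OFF: ReadonlyArray<FieldKind> = [
  "firstName",
  "lastName",
  "streetAddress",
  "city",
  "email",
];

// "off" for weak-dictionary fields; null means "omit the attribute"
// so the user's own keyboard setting applies (assumed to suit free text).
function autocorrectFor(kind: FieldKind): "off" | null {
  return AUTOCORRECT_OFF.indexOf(kind) >= 0 ? "off" : null;
}

// Render an <input> tag; email fields also get type="email".
function renderInput(name: string, kind: FieldKind): string {
  const type = kind === "email" ? "email" : "text";
  const autocorrect = autocorrectFor(kind);
  const extra = autocorrect === null ? "" : ` autocorrect="${autocorrect}"`;
  return `<input type="${type}" name="${name}"${extra} />`;
}

// Examples (name values are hypothetical):
const street = renderInput("street", "streetAddress");
// '<input type="text" name="street" autocorrect="off" />'
const gift = renderInput("gift", "giftMessage");
// '<input type="text" name="gift" />'
```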

(Note: deciding how to handle the search field is a complicated matter; Premium users can see the last section of guideline #948.)

Of all the benchmarked sites, only IKEA shows a “perfect” performance in this topic.

Many Opportunities Exist to Improve Mobile UX

This high-level analysis of the current state of Mobile UX focuses on only 6 of the 46 Mobile topics included in our e-commerce UX benchmark analysis.

The 40 other topics should be reviewed as well to gain a comprehensive understanding of the current state of Mobile UX, and to identify additional site-specific issues not covered here.

Although our benchmark has revealed that no sites have a completely broken Mobile UX, it’s clear that there’s much room for improvement, as 63% of sites perform “mediocre” or worse, while no sites have a “state of the art” Mobile experience.

Avoiding the 15 pitfalls described in this article is the first step toward improving users’ Mobile experience:

- Highlight the current scope in the main navigation (90% don’t)

- Make product categories the first level of the main navigation (32% don’t)

- Provide a “view all” option at each level of the product catalog (54% don’t)

- Offer relevant autocomplete suggestions for closely misspelled search terms and queries (48% don’t)

- Include the search scope in the autocomplete suggestions (78% don’t)

- Suggest alternate queries and paths (64% don’t)

- Autodirect or guide users to matching category scopes (84% don’t)

- Properly introduce, position, and style error messages (64% don’t)

- Have an address validator or address lookup feature (18% don’t)

- Provide load indicators whenever new content is loading (84% don’t)

- Have direct links to “return policy” and “shipping info” in the footer (54% don’t)

- Provide enough space between tappable elements (82% don’t)

- Make tappable elements sufficiently large (88% don’t)

- Use the right keyboard layout for relevant fields (65% don’t)

- Disable mobile keyboard autocorrect when appropriate (89% don’t)

Note: In comparison, most of the other e-commerce UX studies we’ve conducted at Baymard Institute also show an average UX performance of “mediocre”, but with a wider spread of performance scores (see our overall e-commerce UX benchmark).

Also, note that this is an analysis of the average performance across 71 top-grossing US and European e-commerce sites.

When analyzing a specific site there are nearly always a handful of critical UX issues, along with a larger collection of worthwhile improvements to make.

This is the case even when we conduct UX audits for Fortune 500 companies.

For inspiration on other sites’ implementations and to see how they perform UX-wise, head to the publicly available part of the Mobile e-commerce UX benchmark, where you can browse the Mobile implementations of all 71 benchmarked sites.

For additional inspiration, consider clicking through the Mobile Page Designs (click the “Mobile” filter for the specific page design you’re viewing). These showcase Mobile implementations at 149 leading US and European e-commerce sites and can be a good resource when redesigning a Mobile site, both for what to emulate and for what to avoid.

This article presents the research findings from just a few of the 650+ UX guidelines in Baymard Premium – get full access to learn how to create a “State of the Art” e-commerce user experience.